安装必要的库

import requests import pandas as pd import os import sys import io import urllib3 import json

测试数据

网页上的数据结构如下

{

"success": true,

"code": "cifm_0000",

"encode": null,

"message": "ok",

"url": null,

"total": 3,

"items": [

{

"summarydate": "20240611",

"summarytype": "naturalday",

"workday": true,

"newcustnum": 1,

"havecustnum": 1691627,

"newaccountnum": 2,

"haveaccountnum": 1692934,

"totalshare": 4947657341.69,

"netcash": -3523387.25,

"yield": 0.01386

},

{

"summarydate": "20240612",

"summarytype": "naturalday",

"workday": true,

"newcustnum": 5,

"havecustnum": 1672766,

"newaccountnum": 5,

"haveaccountnum": 1674071,

"totalshare": 4927109080.29,

"netcash": -20735233.55,

"yield": 0.01387

},

{

"summarydate": "20240613",

"summarytype": "naturalday",

"workday": true,

"newcustnum": 4,

"havecustnum": 1662839,

"newaccountnum": 5,

"haveaccountnum": 1664146,

"totalshare": 4927405885.59,

"netcash": 110659.8,

"yield": 0.01389

}

],

"data": null,

"info": null

}

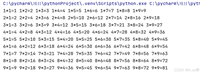

详细逻辑代码

import requests

import pandas as pd

import os

import sys

import io

import urllib3

import json

urllib3.disable_warnings(urllib3.exceptions.insecurerequestwarning)

sys.stdout = io.textiowrapper(sys.stdout.buffer, encoding='utf-8')

url = "https://ip/ma/web/trade/dailysummary?startdate={pi_startdate}&enddate={pi_enddate}"

headers = {

"accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7",

"accept-language": "zh-cn,zh;q=0.9",

"user-agent": "mozilla/5.0 (windows nt 10.0; win64; x64) applewebkit/537.36 (khtml, like gecko) chrome/119.0.0.0 safari/537.36 edg/119.0.0.0",

}

def save_data(data, columns, excel_path, sheet_name):

df = pd.dataframe(data, columns=columns)

if not os.path.exists(excel_path):

df.to_excel(excel_path, sheet_name=sheet_name, index=false)

else:

with pd.excelwriter(excel_path, engine='openpyxl', mode='a') as writer:

df.to_excel(writer, sheet_name=sheet_name, index=false)

def json2list(response_text):

# 把json数据转化为python用的类型

json_dict = json.loads(response_text)

src_total = json_dict["total"]

print("src_total: {}".format(src_total))

items = json_dict["items"]

excel_columns = ['summarydate',

'summarytype',

'workday',

'newcustnum',

'havecustnum',

'newaccountnum',

'haveaccountnum',

'totalshare',

'netcash',

'yield'

]

excel_data = []

# 使用xpath定位元素并打印内容

for item in items:

excel_row_data = []

for column_index in range(len(excel_columns)):

data = str(item[excel_columns[column_index]])

if excel_columns[column_index] == 'workday':

data = str(0 if data == "false" else 1)

excel_row_data.append(data)

excel_data.append(excel_row_data)

trg_total = len(excel_data)

# 稽核

print("trg_total: {}".format(trg_total))

vn_biasval = trg_total - src_total

if vn_biasval != 0:

print("this audit-rule is not passed,diff: {}".format(vn_biasval))

exit(-1)

else:

print("this audit-rule is passed,diff: {}".format(vn_biasval))

return excel_columns, excel_data

if __name__ == '__main__':

try:

excel_path = "c:/xxx/temp/ylb_dailysummary_{pi_startdate}_{pi_enddate}.xlsx"

sheet_name = 'result_data'

pi_startdate = 20240611

pi_enddate = 20240613

excel_path = excel_path.format(pi_startdate=pi_startdate, pi_enddate=pi_enddate)

url = url.format(pi_startdate=pi_startdate, pi_enddate=pi_enddate)

print("url:{}".format(url))

print("excel_path:{}".format(excel_path))

response_text = requests.get(url, headers=headers, timeout=(21, 300), verify=false).content.decode("utf8")

excel_columns, excel_data = json2list(response_text)

print("=================excel_columns=======================")

print(excel_columns)

print("=================excel_data==========================")

for x in excel_data:

print(x)

print("=====================================================")

# 文件存在,则删除

if os.path.exists(excel_path):

os.remove(excel_path)

# 保存文件

save_data(excel_data, excel_columns, excel_path, sheet_name)

print("save_data is end.")

except exception as e:

print("[error]:" + str(e))

exit(-1)

代码解析

1.请求头

构造请求头

urllib3.disable_warnings(urllib3.exceptions.insecurerequestwarning)

sys.stdout = io.textiowrapper(sys.stdout.buffer, encoding='utf-8')

url = "https://ip/ma/web/trade/dailysummary?startdate={pi_startdate}&enddate={pi_enddate}"

headers = {

"accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7",

"accept-language": "zh-cn,zh;q=0.9",

"user-agent": "mozilla/5.0 (windows nt 10.0; win64; x64) applewebkit/537.36 (khtml, like gecko) chrome/119.0.0.0 safari/537.36 edg/119.0.0.0",

}

2.数据保存到excel

如果excel已经存在,那么则会将数据追加到excel中

def save_data(data, columns, excel_path, sheet_name):

df = pd.dataframe(data, columns=columns)

if not os.path.exists(excel_path):

df.to_excel(excel_path, sheet_name=sheet_name, index=false)

else:

with pd.excelwriter(excel_path, engine='openpyxl', mode='a') as writer:

df.to_excel(writer, sheet_name=sheet_name, index=false)

解析json数据获取字段名称以及对应的数据list列表

def json2list(response_text):

# 把json数据转化为python用的类型

json_dict = json.loads(response_text)

src_total = json_dict["total"]

print("src_total: {}".format(src_total))

items = json_dict["items"]

excel_columns = ['summarydate',

'summarytype',

'workday',

'newcustnum',

'havecustnum',

'newaccountnum',

'haveaccountnum',

'totalshare',

'netcash',

'yield'

]

excel_data = []

# 使用xpath定位元素并打印内容

for item in items:

excel_row_data = []

for column_index in range(len(excel_columns)):

data = str(item[excel_columns[column_index]])

if excel_columns[column_index] == 'workday':

data = str(0 if data == "false" else 1)

excel_row_data.append(data)

excel_data.append(excel_row_data)

trg_total = len(excel_data)

# 稽核

print("trg_total: {}".format(trg_total))

vn_biasval = trg_total - src_total

if vn_biasval != 0:

print("this audit-rule is not passed,diff: {}".format(vn_biasval))

exit(-1)

else:

print("this audit-rule is passed,diff: {}".format(vn_biasval))

return excel_columns, excel_data

3.测试方法入口

if __name__ == '__main__':

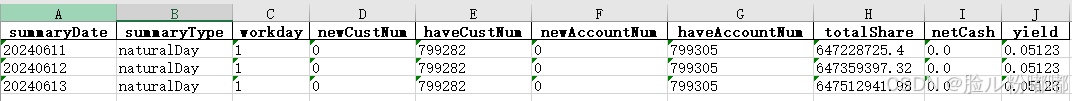

测试结果

会生成ylb_dailysummary_20240611_20240613.xlsx文件

以上就是python解析网页上的json数据并保存到excel的详细内容,更多关于python解析网页json数据的资料请关注代码网其它相关文章!

发表评论