Problems encountered when deploying Kafka with Docker

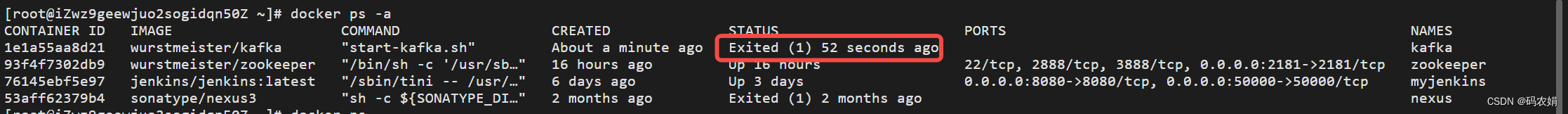

After the container started, its status was `Exited`, as the screenshot above shows.

Check the logs:

`docker logs 1e1a55aa8d21`
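The output of `docker logs` is long, so it can help to keep only the warning and error lines. Below is a minimal sketch, assuming the `[timestamp] level message` layout seen in the output that follows. `problem_lines` and `sample` are hypothetical names used for illustration, and the sample lines are copied from that output.

```python
import re
from typing import List

# Matches lines such as "[2022-05-13 03:22:55,720] warn ..." (any letter case).
LEVEL_RE = re.compile(r"^\[[^\]]+\]\s+(warn|error|fatal)\b", re.IGNORECASE)


def problem_lines(log_text: str) -> List[str]:
    """Return only the log lines whose level is warn, error, or fatal."""
    return [line for line in log_text.splitlines() if LEVEL_RE.match(line.strip())]


# Three lines taken from the failed run: one info, one warn, one error.
sample = """\
[2022-05-13 03:22:37,715] info [zookeeperclient kafka server] waiting until connected. (kafka.zookeeper.zookeeperclient)
[2022-05-13 03:22:55,720] warn client session timed out, have not heard from server in 18003ms for sessionid 0x0 (org.apache.zookeeper.clientcnxn)
[2022-05-13 03:22:55,836] error fatal error during kafkaserver startup. prepare to shutdown (kafka.server.kafkaserver)
"""

for line in problem_lines(sample):
    print(line)
```

To run it on real output, the text could be read from standard input (for example with `sys.stdin.read()`) and fed from `docker logs 1e1a55aa8d21 2>&1`.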

Detailed log output:

[root@izwz9geewjuo2sogidqn50z ~]# docker logs 1e1a55aa8d21

[configuring] 'advertised.listeners' in '/opt/kafka/config/server.properties'

[configuring] 'port' in '/opt/kafka/config/server.properties'

excluding kafka_home from broker config

[configuring] 'log.dirs' in '/opt/kafka/config/server.properties'

[configuring] 'listeners' in '/opt/kafka/config/server.properties'

excluding kafka_version from broker config

[configuring] 'zookeeper.connect' in '/opt/kafka/config/server.properties'

[configuring] 'broker.id' in '/opt/kafka/config/server.properties'

[2022-05-13 03:22:36,754] info registered kafka:type=kafka.log4jcontroller mbean (kafka.utils.log4jcontrollerregistration$)

[2022-05-13 03:22:37,474] info setting -d jdk.tls.rejectclientinitiatedrenegotiation=true to disable client-initiated tls renegotiation (org.apache.zookeeper.common.x509util)

[2022-05-13 03:22:37,644] info registered signal handlers for term, int, hup (org.apache.kafka.common.utils.loggingsignalhandler)

[2022-05-13 03:22:37,652] info starting (kafka.server.kafkaserver)

[2022-05-13 03:22:37,652] info connecting to zookeeper on 39.108.99.163:2181 (kafka.server.kafkaserver)

[2022-05-13 03:22:37,687] info [zookeeperclient kafka server] initializing a new session to 39.108.99.163:2181. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:22:37,695] info client environment:zookeeper.version=3.5.9-83df9301aa5c2a5d284a9940177808c01bc35cef, built on 01/06/2021 20:03 gmt (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:host.name=1e1a55aa8d21 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.version=11.0.15 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.vendor=oracle corporation (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.home=/usr/local/openjdk-11 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.class.path=/opt/kafka/bin/../libs/activation-1.1.1.jar:/opt/kafka/bin/../libs/aopalliance-repackaged-2.6.1.jar:/opt/kafka/bin/../libs/argparse4j-0.7.0.jar:/opt/kafka/bin/../libs/audience-annotations-0.5.0.jar:/opt/kafka/bin/../libs/commons-cli-1.4.jar:/opt/kafka/bin/../libs/commons-lang3-3.8.1.jar:/opt/kafka/bin/../libs/connect-api-2.8.1.jar:/opt/kafka/bin/../libs/connect-basic-auth-extension-2.8.1.jar:/opt/kafka/bin/../libs/connect-file-2.8.1.jar:/opt/kafka/bin/../libs/connect-json-2.8.1.jar:/opt/kafka/bin/../libs/connect-mirror-2.8.1.jar:/opt/kafka/bin/../libs/connect-mirror-client-2.8.1.jar:/opt/kafka/bin/../libs/connect-runtime-2.8.1.jar:/opt/kafka/bin/../libs/connect-transforms-2.8.1.jar:/opt/kafka/bin/../libs/hk2-api-2.6.1.jar:/opt/kafka/bin/../libs/hk2-locator-2.6.1.jar:/opt/kafka/bin/../libs/hk2-utils-2.6.1.jar:/opt/kafka/bin/../libs/jackson-annotations-2.10.5.jar:/opt/kafka/bin/../libs/jackson-core-2.10.5.jar:/opt/kafka/bin/../libs/jackson-databind-2.10.5.1.jar:/opt/kafka/bin/../libs/jackson-dataformat-csv-2.10.5.jar:/opt/kafka/bin/../libs/jackson-datatype-jdk8-2.10.5.jar:/opt/kafka/bin/../libs/jackson-jaxrs-base-2.10.5.jar:/opt/kafka/bin/../libs/jackson-jaxrs-json-provider-2.10.5.jar:/opt/kafka/bin/../libs/jackson-module-jaxb-annotations-2.10.5.jar:/opt/kafka/bin/../libs/jackson-module-paranamer-2.10.5.jar:/opt/kafka/bin/../libs/jackson-module-scala_2.13-2.10.5.jar:/opt/kafka/bin/../libs/jakarta.activation-api-1.2.1.jar:/opt/kafka/bin/../libs/jakarta.annotation-api-1.3.5.jar:/opt/kafka/bin/../libs/jakarta.inject-2.6.1.jar:/opt/kafka/bin/../libs/jakarta.validation-api-2.0.2.jar:/opt/kafka/bin/../libs/jakarta.ws.rs-api-2.1.6.jar:/opt/kafka/bin/../libs/jakarta.xml.bind-api-2.3.2.jar:/opt/kafka/bin/../libs/javassist-3.27.0-ga.jar:/opt/kafka/bin/../libs/javax.servlet-api-3.1.0.jar:/opt/kafka/bin/../libs/javax.ws.rs-api-2.1.1.jar:/opt/kafka/bin/../libs/jaxb-api-2.3.0.jar:/opt/kafka/bin/../libs/jersey-client-
2.34.jar:/opt/kafka/bin/../libs/jersey-common-2.34.jar:/opt/kafka/bin/../libs/jersey-container-servlet-2.34.jar:/opt/kafka/bin/../libs/jersey-container-servlet-core-2.34.jar:/opt/kafka/bin/../libs/jersey-hk2-2.34.jar:/opt/kafka/bin/../libs/jersey-server-2.34.jar:/opt/kafka/bin/../libs/jetty-client-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-continuation-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-http-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-io-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-security-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-server-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-servlet-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-servlets-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-util-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-util-ajax-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jline-3.12.1.jar:/opt/kafka/bin/../libs/jopt-simple-5.0.4.jar:/opt/kafka/bin/../libs/kafka-clients-2.8.1.jar:/opt/kafka/bin/../libs/kafka-log4j-appender-2.8.1.jar:/opt/kafka/bin/../libs/kafka-metadata-2.8.1.jar:/opt/kafka/bin/../libs/kafka-raft-2.8.1.jar:/opt/kafka/bin/../libs/kafka-shell-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-examples-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-scala_2.13-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-test-utils-2.8.1.jar:/opt/kafka/bin/../libs/kafka-tools-2.8.1.jar:/opt/kafka/bin/../libs/kafka_2.13-2.8.1-sources.jar:/opt/kafka/bin/../libs/kafka_2.13-2.8.1.jar:/opt/kafka/bin/../libs/log4j-1.2.17.jar:/opt/kafka/bin/../libs/lz4-java-1.7.1.jar:/opt/kafka/bin/../libs/maven-artifact-3.8.1.jar:/opt/kafka/bin/../libs/metrics-core-2.2.0.jar:/opt/kafka/bin/../libs/netty-buffer-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-codec-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-common-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-handler-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-resolver-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-transp
ort-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-transport-native-epoll-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-transport-native-unix-common-4.1.62.final.jar:/opt/kafka/bin/../libs/osgi-resource-locator-1.0.3.jar:/opt/kafka/bin/../libs/paranamer-2.8.jar:/opt/kafka/bin/../libs/plexus-utils-3.2.1.jar:/opt/kafka/bin/../libs/reflections-0.9.12.jar:/opt/kafka/bin/../libs/rocksdbjni-5.18.4.jar:/opt/kafka/bin/../libs/scala-collection-compat_2.13-2.3.0.jar:/opt/kafka/bin/../libs/scala-java8-compat_2.13-0.9.1.jar:/opt/kafka/bin/../libs/scala-library-2.13.5.jar:/opt/kafka/bin/../libs/scala-logging_2.13-3.9.2.jar:/opt/kafka/bin/../libs/scala-reflect-2.13.5.jar:/opt/kafka/bin/../libs/slf4j-api-1.7.30.jar:/opt/kafka/bin/../libs/slf4j-log4j12-1.7.30.jar:/opt/kafka/bin/../libs/snappy-java-1.1.8.1.jar:/opt/kafka/bin/../libs/zookeeper-3.5.9.jar:/opt/kafka/bin/../libs/zookeeper-jute-3.5.9.jar:/opt/kafka/bin/../libs/zstd-jni-1.4.9-1.jar (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.library.path=/usr/java/packages/lib:/usr/lib64:/lib64:/lib:/usr/lib (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:java.compiler=<na> (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:os.name=linux (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:os.arch=amd64 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:os.version=3.10.0-862.14.4.el7.x86_64 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:user.name=root (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,695] info client environment:user.home=/root (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,696] info client environment:user.dir=/ (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,696] info client environment:os.memory.free=977mb (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,696] info client environment:os.memory.max=1024mb (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,696] info client environment:os.memory.total=1024mb (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,699] info initiating client connection, connectstring=39.108.99.163:2181 sessiontimeout=18000 watcher=kafka.zookeeper.zookeeperclient$zookeeperclientwatcher$@205d38da (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:37,704] info jute.maxbuffer value is 4194304 bytes (org.apache.zookeeper.clientcnxnsocket)

[2022-05-13 03:22:37,712] info zookeeper.request.timeout value is 0. feature enabled= (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:22:37,715] info [zookeeperclient kafka server] waiting until connected. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:22:37,784] info opening socket connection to server 39.108.99.163/39.108.99.163:2181. will not attempt to authenticate using sasl (unknown error) (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:22:55,716] info [zookeeperclient kafka server] closing. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:22:55,720] warn client session timed out, have not heard from server in 18003ms for sessionid 0x0 (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:22:55,830] info session: 0x0 closed (org.apache.zookeeper.zookeeper)

[2022-05-13 03:22:55,832] info [zookeeperclient kafka server] closed. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:22:55,834] info eventthread shut down for session: 0x0 (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:22:55,836] error fatal error during kafkaserver startup. prepare to shutdown (kafka.server.kafkaserver)

kafka.zookeeper.zookeeperclienttimeoutexception: timed out waiting for connection while in state: connecting

at kafka.zookeeper.zookeeperclient.waituntilconnected(zookeeperclient.scala:271)

at kafka.zookeeper.zookeeperclient.<init>(zookeeperclient.scala:125)

at kafka.zk.kafkazkclient$.apply(kafkazkclient.scala:1948)

at kafka.server.kafkaserver.createzkclient$1(kafkaserver.scala:431)

at kafka.server.kafkaserver.initzkclient(kafkaserver.scala:456)

at kafka.server.kafkaserver.startup(kafkaserver.scala:191)

at kafka.kafka$.main(kafka.scala:109)

at kafka.kafka.main(kafka.scala)

[2022-05-13 03:22:55,840] info shutting down (kafka.server.kafkaserver)

[2022-05-13 03:22:55,850] info app info kafka.server for 0 unregistered (org.apache.kafka.common.utils.appinfoparser)

[2022-05-13 03:22:55,851] info shut down completed (kafka.server.kafkaserver)

[2022-05-13 03:22:55,851] error exiting kafka. (kafka.kafka$)

[2022-05-13 03:22:55,854] info shutting down (kafka.server.kafkaserver)

kafka.zookeeper.zookeeperclienttimeoutexception: timed out waiting for connection while in state: connecting

In other words, the broker timed out while connecting to ZooKeeper.
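The timestamps in the failed run point to a timeout rather than an immediate refusal. As a rough check, the sketch below computes the gap between the `waiting until connected` line and the `closing` line. It comes out to about 18 seconds, in line with `sessiontimeout=18000` in the log and `zookeeper.connection.timeout.ms = 18000` in the configuration dump of the later run. `elapsed_ms` is a hypothetical helper, not part of Kafka.

```python
from datetime import datetime, timedelta

# Timestamp layout used in the log, e.g. "2022-05-13 03:22:37,715".
LOG_TIME_FORMAT = "%Y-%m-%d %H:%M:%S,%f"


def elapsed_ms(start: str, end: str) -> int:
    """Whole milliseconds between two log timestamps."""
    delta = datetime.strptime(end, LOG_TIME_FORMAT) - datetime.strptime(start, LOG_TIME_FORMAT)
    return delta // timedelta(milliseconds=1)


waiting_since = "2022-05-13 03:22:37,715"   # "waiting until connected."
client_closing = "2022-05-13 03:22:55,716"  # "closing."

print(elapsed_ms(waiting_since, client_closing))  # prints 18001
```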

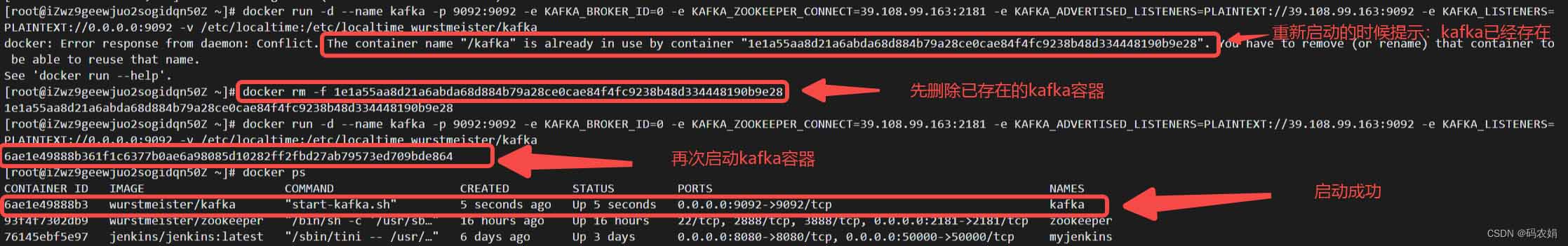

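Troubleshooting showed that the port was unreachable (the broker could not reach ZooKeeper at 39.108.99.163:2181). After the relevant ports were opened in the security group, the container restarted successfully.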
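A port blocked by a security group typically shows up as a connection attempt that hangs and then times out. The sketch below is one way to test reachability from the machine that runs the broker. `port_is_open` is a hypothetical helper, and the 3-second default timeout is an arbitrary choice.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


# Example call with the ZooKeeper address from the log:
#   port_is_open("39.108.99.163", 2181)
```

A `False` result does not by itself prove that the security group is the cause. A stopped service or a host firewall would give the same result.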

Check the logs again:

[root@izwz9geewjuo2sogidqn50z ~]# docker logs 6ae1e49888b3

[configuring] 'advertised.listeners' in '/opt/kafka/config/server.properties'

[configuring] 'port' in '/opt/kafka/config/server.properties'

excluding kafka_home from broker config

[configuring] 'log.dirs' in '/opt/kafka/config/server.properties'

[configuring] 'listeners' in '/opt/kafka/config/server.properties'

excluding kafka_version from broker config

[configuring] 'zookeeper.connect' in '/opt/kafka/config/server.properties'

[configuring] 'broker.id' in '/opt/kafka/config/server.properties'

[2022-05-13 03:41:21,371] info registered kafka:type=kafka.log4jcontroller mbean (kafka.utils.log4jcontrollerregistration$)

[2022-05-13 03:41:22,119] info setting -d jdk.tls.rejectclientinitiatedrenegotiation=true to disable client-initiated tls renegotiation (org.apache.zookeeper.common.x509util)

[2022-05-13 03:41:22,334] info registered signal handlers for term, int, hup (org.apache.kafka.common.utils.loggingsignalhandler)

[2022-05-13 03:41:22,342] info starting (kafka.server.kafkaserver)

[2022-05-13 03:41:22,342] info connecting to zookeeper on 39.108.99.163:2181 (kafka.server.kafkaserver)

[2022-05-13 03:41:22,376] info [zookeeperclient kafka server] initializing a new session to 39.108.99.163:2181. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:41:22,384] info client environment:zookeeper.version=3.5.9-83df9301aa5c2a5d284a9940177808c01bc35cef, built on 01/06/2021 20:03 gmt (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,384] info client environment:host.name=6ae1e49888b3 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,384] info client environment:java.version=11.0.15 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,385] info client environment:java.vendor=oracle corporation (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,385] info client environment:java.home=/usr/local/openjdk-11 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,385] info client environment:java.class.path=/opt/kafka/bin/../libs/activation-1.1.1.jar:/opt/kafka/bin/../libs/aopalliance-repackaged-2.6.1.jar:/opt/kafka/bin/../libs/argparse4j-0.7.0.jar:/opt/kafka/bin/../libs/audience-annotations-0.5.0.jar:/opt/kafka/bin/../libs/commons-cli-1.4.jar:/opt/kafka/bin/../libs/commons-lang3-3.8.1.jar:/opt/kafka/bin/../libs/connect-api-2.8.1.jar:/opt/kafka/bin/../libs/connect-basic-auth-extension-2.8.1.jar:/opt/kafka/bin/../libs/connect-file-2.8.1.jar:/opt/kafka/bin/../libs/connect-json-2.8.1.jar:/opt/kafka/bin/../libs/connect-mirror-2.8.1.jar:/opt/kafka/bin/../libs/connect-mirror-client-2.8.1.jar:/opt/kafka/bin/../libs/connect-runtime-2.8.1.jar:/opt/kafka/bin/../libs/connect-transforms-2.8.1.jar:/opt/kafka/bin/../libs/hk2-api-2.6.1.jar:/opt/kafka/bin/../libs/hk2-locator-2.6.1.jar:/opt/kafka/bin/../libs/hk2-utils-2.6.1.jar:/opt/kafka/bin/../libs/jackson-annotations-2.10.5.jar:/opt/kafka/bin/../libs/jackson-core-2.10.5.jar:/opt/kafka/bin/../libs/jackson-databind-2.10.5.1.jar:/opt/kafka/bin/../libs/jackson-dataformat-csv-2.10.5.jar:/opt/kafka/bin/../libs/jackson-datatype-jdk8-2.10.5.jar:/opt/kafka/bin/../libs/jackson-jaxrs-base-2.10.5.jar:/opt/kafka/bin/../libs/jackson-jaxrs-json-provider-2.10.5.jar:/opt/kafka/bin/../libs/jackson-module-jaxb-annotations-2.10.5.jar:/opt/kafka/bin/../libs/jackson-module-paranamer-2.10.5.jar:/opt/kafka/bin/../libs/jackson-module-scala_2.13-2.10.5.jar:/opt/kafka/bin/../libs/jakarta.activation-api-1.2.1.jar:/opt/kafka/bin/../libs/jakarta.annotation-api-1.3.5.jar:/opt/kafka/bin/../libs/jakarta.inject-2.6.1.jar:/opt/kafka/bin/../libs/jakarta.validation-api-2.0.2.jar:/opt/kafka/bin/../libs/jakarta.ws.rs-api-2.1.6.jar:/opt/kafka/bin/../libs/jakarta.xml.bind-api-2.3.2.jar:/opt/kafka/bin/../libs/javassist-3.27.0-ga.jar:/opt/kafka/bin/../libs/javax.servlet-api-3.1.0.jar:/opt/kafka/bin/../libs/javax.ws.rs-api-2.1.1.jar:/opt/kafka/bin/../libs/jaxb-api-2.3.0.jar:/opt/kafka/bin/../libs/jersey-client-
2.34.jar:/opt/kafka/bin/../libs/jersey-common-2.34.jar:/opt/kafka/bin/../libs/jersey-container-servlet-2.34.jar:/opt/kafka/bin/../libs/jersey-container-servlet-core-2.34.jar:/opt/kafka/bin/../libs/jersey-hk2-2.34.jar:/opt/kafka/bin/../libs/jersey-server-2.34.jar:/opt/kafka/bin/../libs/jetty-client-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-continuation-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-http-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-io-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-security-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-server-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-servlet-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-servlets-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-util-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jetty-util-ajax-9.4.43.v20210629.jar:/opt/kafka/bin/../libs/jline-3.12.1.jar:/opt/kafka/bin/../libs/jopt-simple-5.0.4.jar:/opt/kafka/bin/../libs/kafka-clients-2.8.1.jar:/opt/kafka/bin/../libs/kafka-log4j-appender-2.8.1.jar:/opt/kafka/bin/../libs/kafka-metadata-2.8.1.jar:/opt/kafka/bin/../libs/kafka-raft-2.8.1.jar:/opt/kafka/bin/../libs/kafka-shell-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-examples-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-scala_2.13-2.8.1.jar:/opt/kafka/bin/../libs/kafka-streams-test-utils-2.8.1.jar:/opt/kafka/bin/../libs/kafka-tools-2.8.1.jar:/opt/kafka/bin/../libs/kafka_2.13-2.8.1-sources.jar:/opt/kafka/bin/../libs/kafka_2.13-2.8.1.jar:/opt/kafka/bin/../libs/log4j-1.2.17.jar:/opt/kafka/bin/../libs/lz4-java-1.7.1.jar:/opt/kafka/bin/../libs/maven-artifact-3.8.1.jar:/opt/kafka/bin/../libs/metrics-core-2.2.0.jar:/opt/kafka/bin/../libs/netty-buffer-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-codec-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-common-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-handler-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-resolver-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-transp
ort-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-transport-native-epoll-4.1.62.final.jar:/opt/kafka/bin/../libs/netty-transport-native-unix-common-4.1.62.final.jar:/opt/kafka/bin/../libs/osgi-resource-locator-1.0.3.jar:/opt/kafka/bin/../libs/paranamer-2.8.jar:/opt/kafka/bin/../libs/plexus-utils-3.2.1.jar:/opt/kafka/bin/../libs/reflections-0.9.12.jar:/opt/kafka/bin/../libs/rocksdbjni-5.18.4.jar:/opt/kafka/bin/../libs/scala-collection-compat_2.13-2.3.0.jar:/opt/kafka/bin/../libs/scala-java8-compat_2.13-0.9.1.jar:/opt/kafka/bin/../libs/scala-library-2.13.5.jar:/opt/kafka/bin/../libs/scala-logging_2.13-3.9.2.jar:/opt/kafka/bin/../libs/scala-reflect-2.13.5.jar:/opt/kafka/bin/../libs/slf4j-api-1.7.30.jar:/opt/kafka/bin/../libs/slf4j-log4j12-1.7.30.jar:/opt/kafka/bin/../libs/snappy-java-1.1.8.1.jar:/opt/kafka/bin/../libs/zookeeper-3.5.9.jar:/opt/kafka/bin/../libs/zookeeper-jute-3.5.9.jar:/opt/kafka/bin/../libs/zstd-jni-1.4.9-1.jar (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:java.library.path=/usr/java/packages/lib:/usr/lib64:/lib64:/lib:/usr/lib (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:java.compiler=<na> (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:os.name=linux (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:os.arch=amd64 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:os.version=3.10.0-862.14.4.el7.x86_64 (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:user.name=root (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:user.home=/root (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:user.dir=/ (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:os.memory.free=978mb (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:os.memory.max=1024mb (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,386] info client environment:os.memory.total=1024mb (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,395] info initiating client connection, connectstring=39.108.99.163:2181 sessiontimeout=18000 watcher=kafka.zookeeper.zookeeperclient$zookeeperclientwatcher$@205d38da (org.apache.zookeeper.zookeeper)

[2022-05-13 03:41:22,405] info jute.maxbuffer value is 4194304 bytes (org.apache.zookeeper.clientcnxnsocket)

[2022-05-13 03:41:22,414] info zookeeper.request.timeout value is 0. feature enabled= (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:41:22,417] info [zookeeperclient kafka server] waiting until connected. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:41:22,459] info opening socket connection to server 39.108.99.163/39.108.99.163:2181. will not attempt to authenticate using sasl (unknown error) (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:41:22,470] info socket connection established, initiating session, client: /172.17.0.4:33848, server: 39.108.99.163/39.108.99.163:2181 (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:41:22,522] info session establishment complete on server 39.108.99.163/39.108.99.163:2181, sessionid = 0x1004f2b9d3a0000, negotiated timeout = 18000 (org.apache.zookeeper.clientcnxn)

[2022-05-13 03:41:22,527] info [zookeeperclient kafka server] connected. (kafka.zookeeper.zookeeperclient)

[2022-05-13 03:41:22,760] info [feature-zk-node-event-process-thread]: starting (kafka.server.finalizedfeaturechangelistener$changenotificationprocessorthread)

[2022-05-13 03:41:22,779] info feature zk node at path: /feature does not exist (kafka.server.finalizedfeaturechangelistener)

[2022-05-13 03:41:22,780] info cleared cache (kafka.server.finalizedfeaturecache)

[2022-05-13 03:41:23,158] info cluster id = fspfkgcstqss5hep0e355g (kafka.server.kafkaserver)

[2022-05-13 03:41:23,163] warn no meta.properties file under dir /kafka/kafka-logs-6ae1e49888b3/meta.properties (kafka.server.brokermetadatacheckpoint)

[2022-05-13 03:41:23,246] info kafkaconfig values:

advertised.host.name = null

advertised.listeners = plaintext://39.108.99.163:9092

advertised.port = null

alter.config.policy.class.name = null

alter.log.dirs.replication.quota.window.num = 11

alter.log.dirs.replication.quota.window.size.seconds = 1

authorizer.class.name =

auto.create.topics.enable = true

auto.leader.rebalance.enable = true

background.threads = 10

broker.heartbeat.interval.ms = 2000

broker.id = 0

broker.id.generation.enable = true

broker.rack = null

broker.session.timeout.ms = 9000

client.quota.callback.class = null

compression.type = producer

connection.failed.authentication.delay.ms = 100

connections.max.idle.ms = 600000

connections.max.reauth.ms = 0

control.plane.listener.name = null

controlled.shutdown.enable = true

controlled.shutdown.max.retries = 3

controlled.shutdown.retry.backoff.ms = 5000

controller.listener.names = null

controller.quorum.append.linger.ms = 25

controller.quorum.election.backoff.max.ms = 1000

controller.quorum.election.timeout.ms = 1000

controller.quorum.fetch.timeout.ms = 2000

controller.quorum.request.timeout.ms = 2000

controller.quorum.retry.backoff.ms = 20

controller.quorum.voters = []

controller.quota.window.num = 11

controller.quota.window.size.seconds = 1

controller.socket.timeout.ms = 30000

create.topic.policy.class.name = null

default.replication.factor = 1

delegation.token.expiry.check.interval.ms = 3600000

delegation.token.expiry.time.ms = 86400000

delegation.token.master.key = null

delegation.token.max.lifetime.ms = 604800000

delegation.token.secret.key = null

delete.records.purgatory.purge.interval.requests = 1

delete.topic.enable = true

fetch.max.bytes = 57671680

fetch.purgatory.purge.interval.requests = 1000

group.initial.rebalance.delay.ms = 0

group.max.session.timeout.ms = 1800000

group.max.size = 2147483647

group.min.session.timeout.ms = 6000

host.name =

initial.broker.registration.timeout.ms = 60000

inter.broker.listener.name = null

inter.broker.protocol.version = 2.8-iv1

kafka.metrics.polling.interval.secs = 10

kafka.metrics.reporters = []

leader.imbalance.check.interval.seconds = 300

leader.imbalance.per.broker.percentage = 10

listener.security.protocol.map = plaintext:plaintext,ssl:ssl,sasl_plaintext:sasl_plaintext,sasl_ssl:sasl_ssl

listeners = plaintext://0.0.0.0:9092

log.cleaner.backoff.ms = 15000

log.cleaner.dedupe.buffer.size = 134217728

log.cleaner.delete.retention.ms = 86400000

log.cleaner.enable = true

log.cleaner.io.buffer.load.factor = 0.9

log.cleaner.io.buffer.size = 524288

log.cleaner.io.max.bytes.per.second = 1.7976931348623157e308

log.cleaner.max.compaction.lag.ms = 9223372036854775807

log.cleaner.min.cleanable.ratio = 0.5

log.cleaner.min.compaction.lag.ms = 0

log.cleaner.threads = 1

log.cleanup.policy = [delete]

log.dir = /tmp/kafka-logs

log.dirs = /kafka/kafka-logs-6ae1e49888b3

log.flush.interval.messages = 9223372036854775807

log.flush.interval.ms = null

log.flush.offset.checkpoint.interval.ms = 60000

log.flush.scheduler.interval.ms = 9223372036854775807

log.flush.start.offset.checkpoint.interval.ms = 60000

log.index.interval.bytes = 4096

log.index.size.max.bytes = 10485760

log.message.downconversion.enable = true

log.message.format.version = 2.8-iv1

log.message.timestamp.difference.max.ms = 9223372036854775807

log.message.timestamp.type = createtime

log.preallocate = false

log.retention.bytes = -1

log.retention.check.interval.ms = 300000

log.retention.hours = 168

log.retention.minutes = null

log.retention.ms = null

log.roll.hours = 168

log.roll.jitter.hours = 0

log.roll.jitter.ms = null

log.roll.ms = null

log.segment.bytes = 1073741824

log.segment.delete.delay.ms = 60000

max.connection.creation.rate = 2147483647

max.connections = 2147483647

max.connections.per.ip = 2147483647

max.connections.per.ip.overrides =

max.incremental.fetch.session.cache.slots = 1000

message.max.bytes = 1048588

metadata.log.dir = null

metric.reporters = []

metrics.num.samples = 2

metrics.recording.level = info

metrics.sample.window.ms = 30000

min.insync.replicas = 1

node.id = -1

num.io.threads = 8

num.network.threads = 3

num.partitions = 1

num.recovery.threads.per.data.dir = 1

num.replica.alter.log.dirs.threads = null

num.replica.fetchers = 1

offset.metadata.max.bytes = 4096

offsets.commit.required.acks = -1

offsets.commit.timeout.ms = 5000

offsets.load.buffer.size = 5242880

offsets.retention.check.interval.ms = 600000

offsets.retention.minutes = 10080

offsets.topic.compression.codec = 0

offsets.topic.num.partitions = 50

offsets.topic.replication.factor = 1

offsets.topic.segment.bytes = 104857600

password.encoder.cipher.algorithm = aes/cbc/pkcs5padding

password.encoder.iterations = 4096

password.encoder.key.length = 128

password.encoder.keyfactory.algorithm = null

password.encoder.old.secret = null

password.encoder.secret = null

port = 9092

principal.builder.class = null

process.roles = []

producer.purgatory.purge.interval.requests = 1000

queued.max.request.bytes = -1

queued.max.requests = 500

quota.consumer.default = 9223372036854775807

quota.producer.default = 9223372036854775807

quota.window.num = 11

quota.window.size.seconds = 1

replica.fetch.backoff.ms = 1000

replica.fetch.max.bytes = 1048576

replica.fetch.min.bytes = 1

replica.fetch.response.max.bytes = 10485760

replica.fetch.wait.max.ms = 500

replica.high.watermark.checkpoint.interval.ms = 5000

replica.lag.time.max.ms = 30000

replica.selector.class = null

replica.socket.receive.buffer.bytes = 65536

replica.socket.timeout.ms = 30000

replication.quota.window.num = 11

replication.quota.window.size.seconds = 1

request.timeout.ms = 30000

reserved.broker.max.id = 1000

sasl.client.callback.handler.class = null

sasl.enabled.mechanisms = [gssapi]

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin = 60000

sasl.kerberos.principal.to.local.rules = [default]

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds = 300

sasl.login.refresh.min.period.seconds = 60

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism.controller.protocol = gssapi

sasl.mechanism.inter.broker.protocol = gssapi

sasl.server.callback.handler.class = null

security.inter.broker.protocol = plaintext

security.providers = null

socket.connection.setup.timeout.max.ms = 30000

socket.connection.setup.timeout.ms = 10000

socket.receive.buffer.bytes = 102400

socket.request.max.bytes = 104857600

socket.send.buffer.bytes = 102400

ssl.cipher.suites = []

ssl.client.auth = none

ssl.enabled.protocols = [tlsv1.2, tlsv1.3]

ssl.endpoint.identification.algorithm = https

ssl.engine.factory.class = null

ssl.key.password = null

ssl.keymanager.algorithm = sunx509

ssl.keystore.certificate.chain = null

ssl.keystore.key = null

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = jks

ssl.principal.mapping.rules = default

ssl.protocol = tlsv1.3

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = pkix

ssl.truststore.certificates = null

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = jks

transaction.abort.timed.out.transaction.cleanup.interval.ms = 10000

transaction.max.timeout.ms = 900000

transaction.remove.expired.transaction.cleanup.interval.ms = 3600000

transaction.state.log.load.buffer.size = 5242880

transaction.state.log.min.isr = 1

transaction.state.log.num.partitions = 50

transaction.state.log.replication.factor = 1

transaction.state.log.segment.bytes = 104857600

transactional.id.expiration.ms = 604800000

unclean.leader.election.enable = false

zookeeper.clientcnxnsocket = null

zookeeper.connect = 39.108.99.163:2181

zookeeper.connection.timeout.ms = 18000

zookeeper.max.in.flight.requests = 10

zookeeper.session.timeout.ms = 18000

zookeeper.set.acl = false

zookeeper.ssl.cipher.suites = null

zookeeper.ssl.client.enable = false

zookeeper.ssl.crl.enable = false

zookeeper.ssl.enabled.protocols = null

zookeeper.ssl.endpoint.identification.algorithm = https

zookeeper.ssl.keystore.location = null

zookeeper.ssl.keystore.password = null

zookeeper.ssl.keystore.type = null

zookeeper.ssl.ocsp.enable = false

zookeeper.ssl.protocol = tlsv1.2

zookeeper.ssl.truststore.location = null

zookeeper.ssl.truststore.password = null

zookeeper.ssl.truststore.type = null

zookeeper.sync.time.ms = 2000

(kafka.server.kafkaconfig)

[2022-05-13 03:41:23,269] info kafkaconfig values:

advertised.host.name = null

advertised.listeners = plaintext://39.108.99.163:9092

advertised.port = null

alter.config.policy.class.name = null

alter.log.dirs.replication.quota.window.num = 11

alter.log.dirs.replication.quota.window.size.seconds = 1

authorizer.class.name =

auto.create.topics.enable = true

auto.leader.rebalance.enable = true

background.threads = 10

broker.heartbeat.interval.ms = 2000

broker.id = 0

broker.id.generation.enable = true

broker.rack = null

broker.session.timeout.ms = 9000

client.quota.callback.class = null

compression.type = producer

connection.failed.authentication.delay.ms = 100

connections.max.idle.ms = 600000

connections.max.reauth.ms = 0

control.plane.listener.name = null

controlled.shutdown.enable = true

controlled.shutdown.max.retries = 3

controlled.shutdown.retry.backoff.ms = 5000

controller.listener.names = null

controller.quorum.append.linger.ms = 25

controller.quorum.election.backoff.max.ms = 1000

controller.quorum.election.timeout.ms = 1000

controller.quorum.fetch.timeout.ms = 2000

controller.quorum.request.timeout.ms = 2000

controller.quorum.retry.backoff.ms = 20

controller.quorum.voters = []

controller.quota.window.num = 11

controller.quota.window.size.seconds = 1

controller.socket.timeout.ms = 30000

create.topic.policy.class.name = null

default.replication.factor = 1

delegation.token.expiry.check.interval.ms = 3600000

delegation.token.expiry.time.ms = 86400000

delegation.token.master.key = null

delegation.token.max.lifetime.ms = 604800000

delegation.token.secret.key = null

delete.records.purgatory.purge.interval.requests = 1

delete.topic.enable = true

fetch.max.bytes = 57671680

fetch.purgatory.purge.interval.requests = 1000

group.initial.rebalance.delay.ms = 0

group.max.session.timeout.ms = 1800000

group.max.size = 2147483647

group.min.session.timeout.ms = 6000

host.name =

initial.broker.registration.timeout.ms = 60000

inter.broker.listener.name = null

inter.broker.protocol.version = 2.8-iv1

kafka.metrics.polling.interval.secs = 10

kafka.metrics.reporters = []

leader.imbalance.check.interval.seconds = 300

leader.imbalance.per.broker.percentage = 10

listener.security.protocol.map = plaintext:plaintext,ssl:ssl,sasl_plaintext:sasl_plaintext,sasl_ssl:sasl_ssl

listeners = plaintext://0.0.0.0:9092

log.cleaner.backoff.ms = 15000

log.cleaner.dedupe.buffer.size = 134217728

log.cleaner.delete.retention.ms = 86400000

log.cleaner.enable = true

log.cleaner.io.buffer.load.factor = 0.9

log.cleaner.io.buffer.size = 524288

log.cleaner.io.max.bytes.per.second = 1.7976931348623157e308

log.cleaner.max.compaction.lag.ms = 9223372036854775807

log.cleaner.min.cleanable.ratio = 0.5

log.cleaner.min.compaction.lag.ms = 0

log.cleaner.threads = 1

log.cleanup.policy = [delete]

log.dir = /tmp/kafka-logs

log.dirs = /kafka/kafka-logs-6ae1e49888b3

log.flush.interval.messages = 9223372036854775807

log.flush.interval.ms = null

log.flush.offset.checkpoint.interval.ms = 60000

log.flush.scheduler.interval.ms = 9223372036854775807

log.flush.start.offset.checkpoint.interval.ms = 60000

log.index.interval.bytes = 4096

log.index.size.max.bytes = 10485760

log.message.downconversion.enable = true

log.message.format.version = 2.8-iv1

log.message.timestamp.difference.max.ms = 9223372036854775807

log.message.timestamp.type = createtime

log.preallocate = false

log.retention.bytes = -1

log.retention.check.interval.ms = 300000

log.retention.hours = 168

log.retention.minutes = null

log.retention.ms = null

log.roll.hours = 168

log.roll.jitter.hours = 0

log.roll.jitter.ms = null

log.roll.ms = null

log.segment.bytes = 1073741824

log.segment.delete.delay.ms = 60000

max.connection.creation.rate = 2147483647

max.connections = 2147483647

max.connections.per.ip = 2147483647

max.connections.per.ip.overrides =

max.incremental.fetch.session.cache.slots = 1000

message.max.bytes = 1048588

metadata.log.dir = null

metric.reporters = []

metrics.num.samples = 2

metrics.recording.level = info

metrics.sample.window.ms = 30000

min.insync.replicas = 1

node.id = -1

num.io.threads = 8

num.network.threads = 3

num.partitions = 1

num.recovery.threads.per.data.dir = 1

num.replica.alter.log.dirs.threads = null

num.replica.fetchers = 1

offset.metadata.max.bytes = 4096

offsets.commit.required.acks = -1

offsets.commit.timeout.ms = 5000

offsets.load.buffer.size = 5242880

offsets.retention.check.interval.ms = 600000

offsets.retention.minutes = 10080

offsets.topic.compression.codec = 0

offsets.topic.num.partitions = 50

offsets.topic.replication.factor = 1

offsets.topic.segment.bytes = 104857600

password.encoder.cipher.algorithm = aes/cbc/pkcs5padding

password.encoder.iterations = 4096

password.encoder.key.length = 128

password.encoder.keyfactory.algorithm = null

password.encoder.old.secret = null

password.encoder.secret = null

port = 9092

principal.builder.class = null

process.roles = []

producer.purgatory.purge.interval.requests = 1000

queued.max.request.bytes = -1

queued.max.requests = 500

quota.consumer.default = 9223372036854775807

quota.producer.default = 9223372036854775807

quota.window.num = 11

quota.window.size.seconds = 1

replica.fetch.backoff.ms = 1000

replica.fetch.max.bytes = 1048576

replica.fetch.min.bytes = 1

replica.fetch.response.max.bytes = 10485760

replica.fetch.wait.max.ms = 500

replica.high.watermark.checkpoint.interval.ms = 5000

replica.lag.time.max.ms = 30000

replica.selector.class = null

replica.socket.receive.buffer.bytes = 65536

replica.socket.timeout.ms = 30000

replication.quota.window.num = 11

replication.quota.window.size.seconds = 1

request.timeout.ms = 30000

reserved.broker.max.id = 1000

sasl.client.callback.handler.class = null

sasl.enabled.mechanisms = [gssapi]

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin = 60000

sasl.kerberos.principal.to.local.rules = [default]

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds = 300

sasl.login.refresh.min.period.seconds = 60

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism.controller.protocol = gssapi

sasl.mechanism.inter.broker.protocol = gssapi

sasl.server.callback.handler.class = null

security.inter.broker.protocol = plaintext

security.providers = null

socket.connection.setup.timeout.max.ms = 30000

socket.connection.setup.timeout.ms = 10000

socket.receive.buffer.bytes = 102400

socket.request.max.bytes = 104857600

socket.send.buffer.bytes = 102400

ssl.cipher.suites = []

ssl.client.auth = none

ssl.enabled.protocols = [tlsv1.2, tlsv1.3]

ssl.endpoint.identification.algorithm = https

ssl.engine.factory.class = null

ssl.key.password = null

ssl.keymanager.algorithm = sunx509

ssl.keystore.certificate.chain = null

ssl.keystore.key = null

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = jks

ssl.principal.mapping.rules = default

ssl.protocol = tlsv1.3

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = pkix

ssl.truststore.certificates = null

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = jks

transaction.abort.timed.out.transaction.cleanup.interval.ms = 10000

transaction.max.timeout.ms = 900000

transaction.remove.expired.transaction.cleanup.interval.ms = 3600000

transaction.state.log.load.buffer.size = 5242880

transaction.state.log.min.isr = 1

transaction.state.log.num.partitions = 50

transaction.state.log.replication.factor = 1

transaction.state.log.segment.bytes = 104857600

transactional.id.expiration.ms = 604800000

unclean.leader.election.enable = false

zookeeper.clientcnxnsocket = null

zookeeper.connect = 39.108.99.163:2181

zookeeper.connection.timeout.ms = 18000

zookeeper.max.in.flight.requests = 10

zookeeper.session.timeout.ms = 18000

zookeeper.set.acl = false

zookeeper.ssl.cipher.suites = null

zookeeper.ssl.client.enable = false

zookeeper.ssl.crl.enable = false

zookeeper.ssl.enabled.protocols = null

zookeeper.ssl.endpoint.identification.algorithm = https

zookeeper.ssl.keystore.location = null

zookeeper.ssl.keystore.password = null

zookeeper.ssl.keystore.type = null

zookeeper.ssl.ocsp.enable = false

zookeeper.ssl.protocol = tlsv1.2

zookeeper.ssl.truststore.location = null

zookeeper.ssl.truststore.password = null

zookeeper.ssl.truststore.type = null

zookeeper.sync.time.ms = 2000

(kafka.server.kafkaconfig)

[2022-05-13 03:41:23,371] info [throttledchannelreaper-fetch]: starting (kafka.server.clientquotamanager$throttledchannelreaper)

[2022-05-13 03:41:23,371] info [throttledchannelreaper-request]: starting (kafka.server.clientquotamanager$throttledchannelreaper)

[2022-05-13 03:41:23,371] info [throttledchannelreaper-produce]: starting (kafka.server.clientquotamanager$throttledchannelreaper)

[2022-05-13 03:41:23,372] info [throttledchannelreaper-controllermutation]: starting (kafka.server.clientquotamanager$throttledchannelreaper)

[2022-05-13 03:41:23,412] info log directory /kafka/kafka-logs-6ae1e49888b3 not found, creating it. (kafka.log.logmanager)

[2022-05-13 03:41:23,463] info loading logs from log dirs arrayseq(/kafka/kafka-logs-6ae1e49888b3) (kafka.log.logmanager)

[2022-05-13 03:41:23,466] info attempting recovery for all logs in /kafka/kafka-logs-6ae1e49888b3 since no clean shutdown file was found (kafka.log.logmanager)

[2022-05-13 03:41:23,515] info loaded 0 logs in 52ms. (kafka.log.logmanager)

[2022-05-13 03:41:23,520] info starting log cleanup with a period of 300000 ms. (kafka.log.logmanager)

[2022-05-13 03:41:23,551] info starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.logmanager)

[2022-05-13 03:41:24,767] info updated connection-accept-rate max connection creation rate to 2147483647 (kafka.network.connectionquotas)

[2022-05-13 03:41:24,786] info awaiting socket connections on 0.0.0.0:9092. (kafka.network.acceptor)

[2022-05-13 03:41:24,962] info [socketserver listenertype=zk_broker, nodeid=0] created data-plane acceptor and processors for endpoint : listenername(plaintext) (kafka.network.socketserver)

[2022-05-13 03:41:25,125] info [broker-0-to-controller-send-thread]: starting (kafka.server.brokertocontrollerrequestthread)

[2022-05-13 03:41:25,240] info [expirationreaper-0-fetch]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,240] info [expirationreaper-0-deleterecords]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,242] info [expirationreaper-0-produce]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,249] info [expirationreaper-0-electleader]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,312] info [logdirfailurehandler]: starting (kafka.server.replicamanager$logdirfailurehandler)

[2022-05-13 03:41:25,423] info creating /brokers/ids/0 (is it secure? false) (kafka.zk.kafkazkclient)

[2022-05-13 03:41:25,512] info stat of the created znode at /brokers/ids/0 is: 25,25,1652413285479,1652413285479,1,0,0,72144642777939968,210,0,25

(kafka.zk.kafkazkclient)

[2022-05-13 03:41:25,514] info registered broker 0 at path /brokers/ids/0 with addresses: plaintext://39.108.99.163:9092, czxid (broker epoch): 25 (kafka.zk.kafkazkclient)

[2022-05-13 03:41:25,802] info [expirationreaper-0-topic]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,836] info [expirationreaper-0-heartbeat]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,862] info successfully created /controller_epoch with initial epoch 0 (kafka.zk.kafkazkclient)

[2022-05-13 03:41:25,864] info [expirationreaper-0-rebalance]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:25,894] info [groupcoordinator 0]: starting up. (kafka.coordinator.group.groupcoordinator)

[2022-05-13 03:41:25,926] info [groupcoordinator 0]: startup complete. (kafka.coordinator.group.groupcoordinator)

[2022-05-13 03:41:25,938] info feature zk node created at path: /feature (kafka.server.finalizedfeaturechangelistener)

[2022-05-13 03:41:26,031] info updated cache from existing <empty> to latest finalizedfeaturesandepoch(features=features{}, epoch=0). (kafka.server.finalizedfeaturecache)

[2022-05-13 03:41:26,051] info [producerid manager 0]: acquired new producerid block (brokerid:0,blockstartproducerid:0,blockendproducerid:999) by writing to zk with path version 1 (kafka.coordinator.transaction.produceridmanager)

[2022-05-13 03:41:26,052] info [transactioncoordinator id=0] starting up. (kafka.coordinator.transaction.transactioncoordinator)

[2022-05-13 03:41:26,066] info [transactioncoordinator id=0] startup complete. (kafka.coordinator.transaction.transactioncoordinator)

[2022-05-13 03:41:26,107] info [transaction marker channel manager 0]: starting (kafka.coordinator.transaction.transactionmarkerchannelmanager)

[2022-05-13 03:41:26,336] info [expirationreaper-0-alteracls]: starting (kafka.server.delayedoperationpurgatory$expiredoperationreaper)

[2022-05-13 03:41:26,373] info [/config/changes-event-process-thread]: starting (kafka.common.zknodechangenotificationlistener$changeeventprocessthread)

[2022-05-13 03:41:26,434] info [socketserver listenertype=zk_broker, nodeid=0] starting socket server acceptors and processors (kafka.network.socketserver)

[2022-05-13 03:41:26,507] info [socketserver listenertype=zk_broker, nodeid=0] started data-plane acceptor and processor(s) for endpoint : listenername(plaintext) (kafka.network.socketserver)

[2022-05-13 03:41:26,507] info [socketserver listenertype=zk_broker, nodeid=0] started socket server acceptors and processors (kafka.network.socketserver)

[2022-05-13 03:41:26,519] info kafka version: 2.8.1 (org.apache.kafka.common.utils.appinfoparser)

[2022-05-13 03:41:26,519] info kafka commitid: 839b886f9b732b15 (org.apache.kafka.common.utils.appinfoparser)

[2022-05-13 03:41:26,519] info kafka starttimems: 1652413286507 (org.apache.kafka.common.utils.appinfoparser)

[2022-05-13 03:41:26,527] info [kafkaserver id=0] started (kafka.server.kafkaserver)

[2022-05-13 03:41:26,631] info [broker-0-to-controller-send-thread]: recorded new controller, from now on will use broker 39.108.99.163:9092 (id: 0 rack: null) (kafka.server.brokertocontrollerrequestthread)

The `started` line near the end of the log means the broker started successfully!
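If you would rather not scan the whole log by eye, the check can be scripted. The following is only a minimal sketch, not part of the original setup: the function names `started_broker_ids` and `check_container` are hypothetical, and the pattern assumes the startup line has the same shape as the one shown above (`[kafkaserver id=0] started (kafka.server.kafkaserver)`). Matching is case-insensitive because the log pasted here appears in lowercase.

```python
import re
import subprocess
from typing import List

# Assumed shape of the final startup line, taken from the log above:
#   [kafkaserver id=0] started (kafka.server.kafkaserver)
STARTED_RE = re.compile(
    r"\[kafkaserver id=(\d+)\] started \(kafka\.server\.kafkaserver\)",
    re.IGNORECASE,
)


def started_broker_ids(log_text: str) -> List[int]:
    """Return the ids of brokers that logged a successful startup line."""
    return [int(match.group(1)) for match in STARTED_RE.finditer(log_text)]


def check_container(container_id: str) -> bool:
    """Run `docker logs` for a container and report whether a broker started.

    Hypothetical helper: it reads both stdout and stderr, since
    `docker logs` replays both streams of the container.
    """
    result = subprocess.run(
        ["docker", "logs", container_id],
        capture_output=True,
        text=True,
        check=False,
    )
    return bool(started_broker_ids(result.stdout + result.stderr))
```

For example, `check_container("1e1a55aa8d21")` would check the container ID used earlier in this post. Note that the earlier line `starting (kafka.server.kafkaserver)` does not match, so a broker that exits during startup is not reported as started.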

Summary

The above is based on my own experience; I hope it serves as a useful reference.
