目录

前言

想必有小伙伴也想跟我一样体验下部署大语言模型, 但碍于经济实力, 不过民间上出现了大量的量化模型, 我们平民也能体验体验啦~, 该模型可以在笔记本电脑上部署, 确保你电脑至少有16g运行内存

开原地址:github - ymcui/chinese-llama-alpaca: 中文llama&alpaca大语言模型+本地cpu部署 (chinese llama & alpaca llms)

linux和mac的教程在开源的仓库中有提供,当然如果你是m1的也可以参考以下文章:

https://gist.github.com/cedrickchee/e8d4cb0c4b1df6cc47ce8b18457ebde0

准备工作

最好是有代理, 不然你下载东西可能失败, 我为了下个模型花了一天时间, 痛哭~

我们需要先在电脑上安装以下环境:

- git

- python3.9(使用anaconda3创建该环境)

- cmake(如果你电脑没有c和c++的编译环境还需要安装mingw)

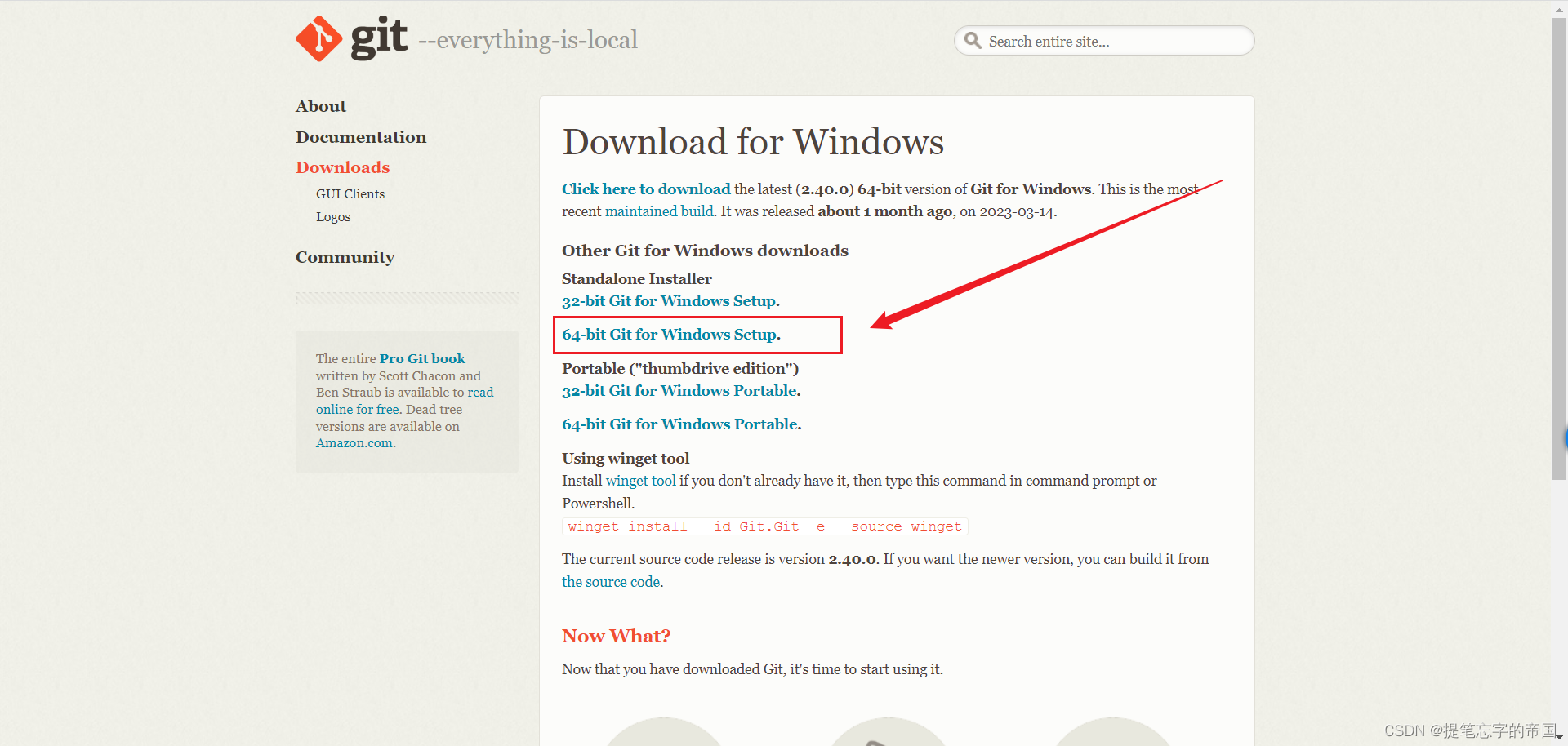

git

下载地址:git - downloading package

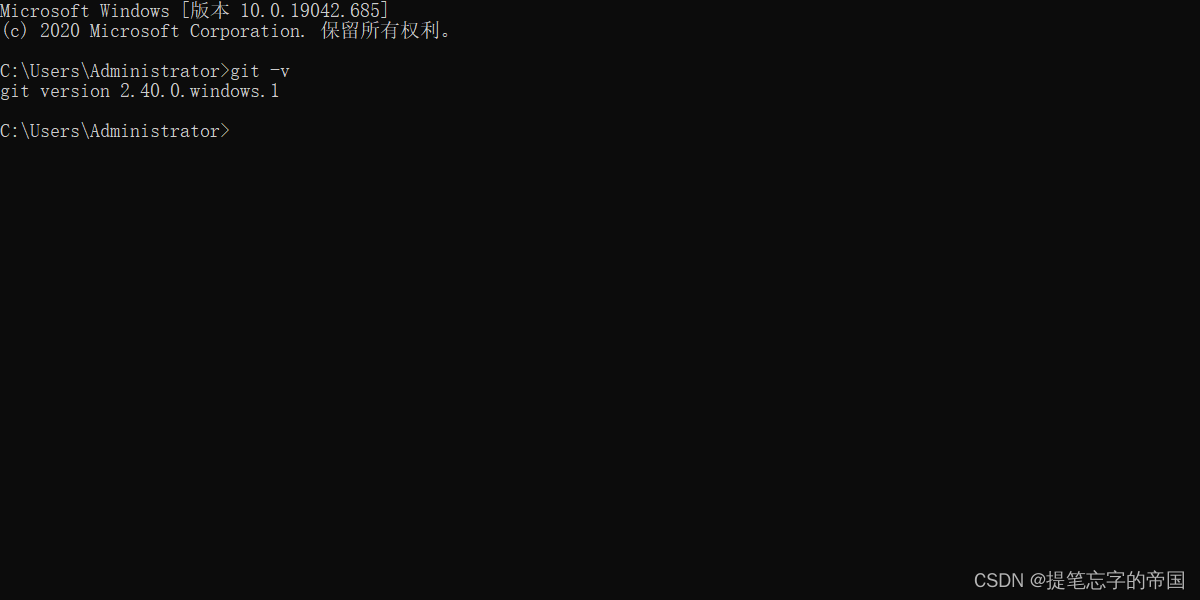

在cmd窗口输入以下如果有版本号显示说明已经安装成功

git -v

python3.9

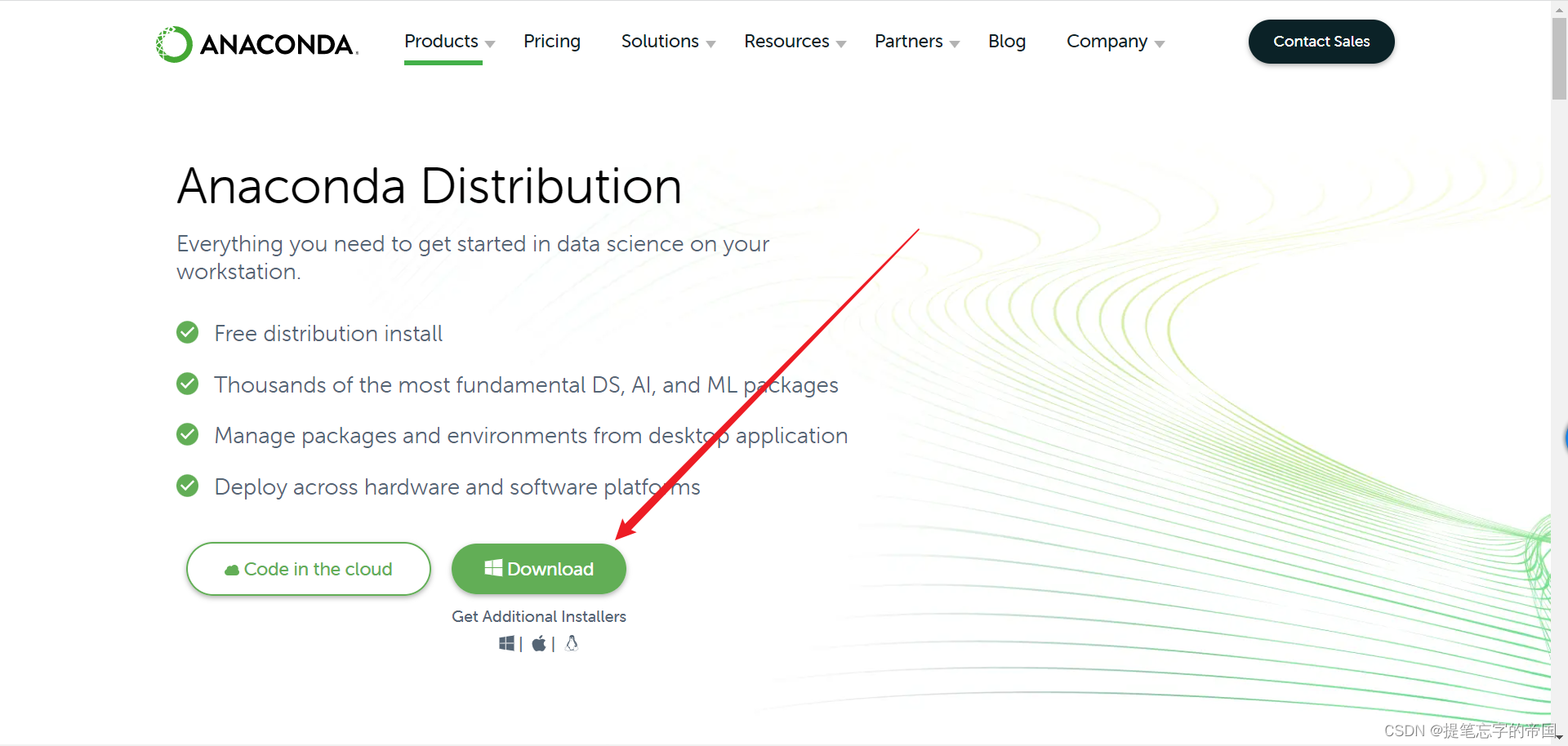

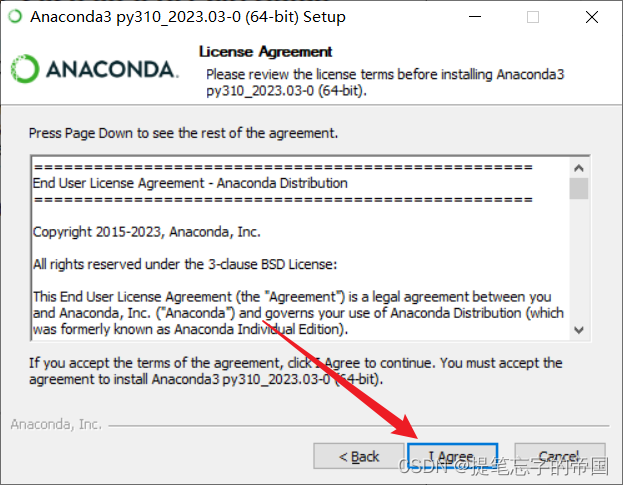

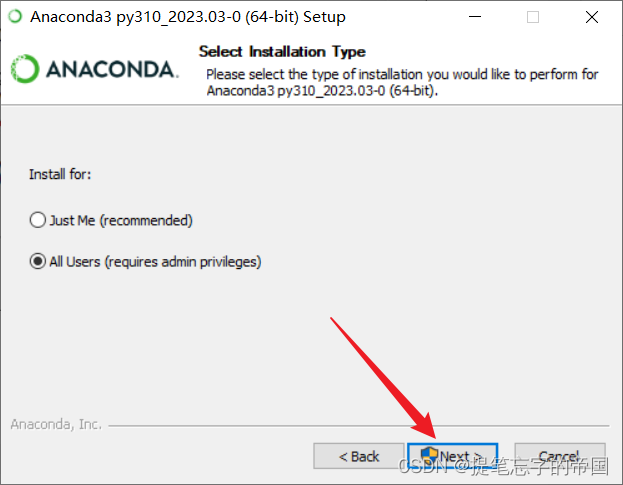

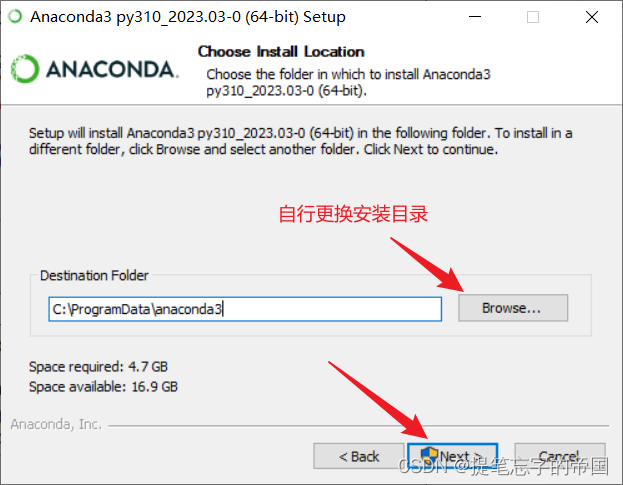

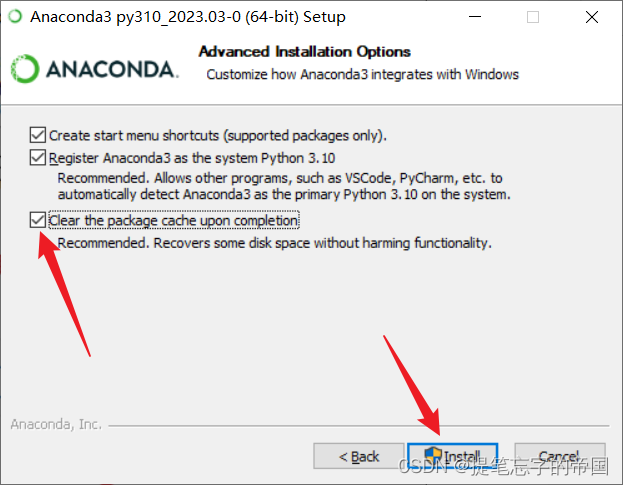

我这里使用anaconda3来使用python, anaconda3是什么?

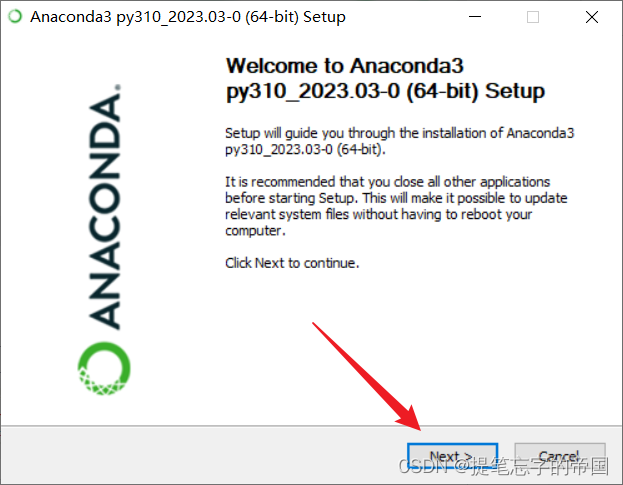

anaconda3下载地址:anaconda | anaconda distribution

安装步骤参考:

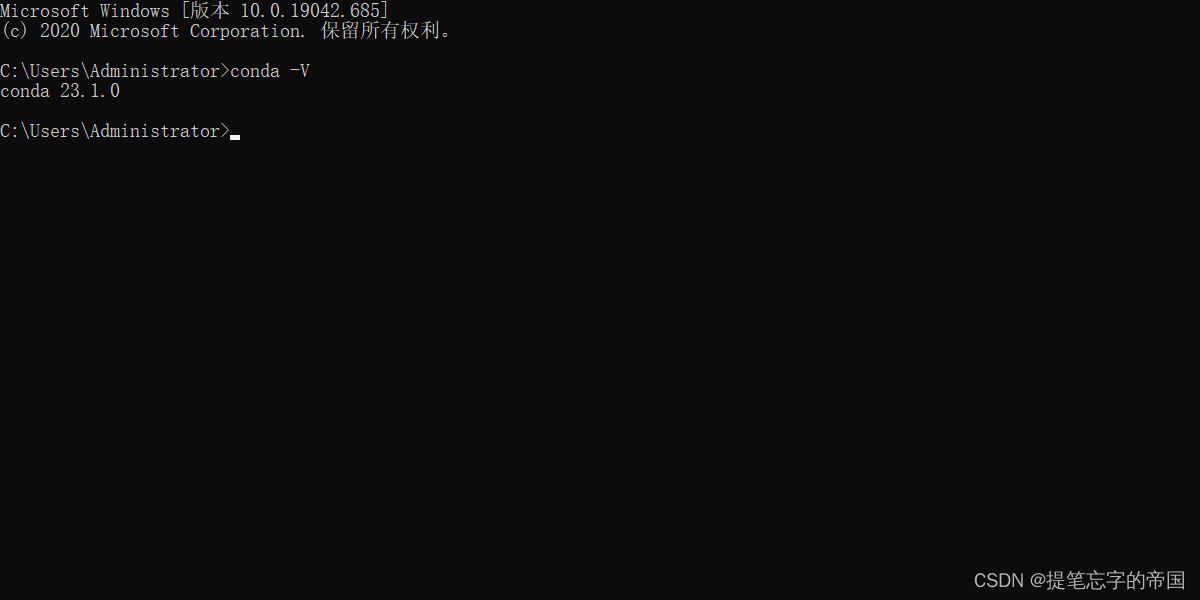

在cmd窗口输入以下命令, 显示版本号则说明安装成功

conda -v

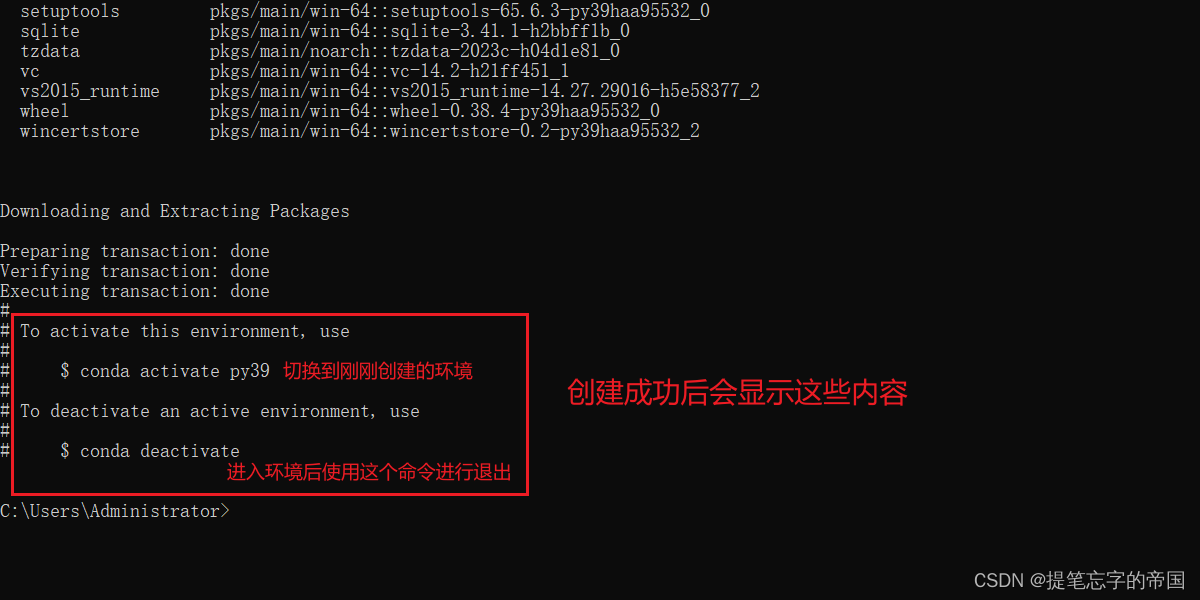

接下来我们在cmd窗口输入以下命令创建一个python3.9的环境

conda create --name py39 python=3.9 -y

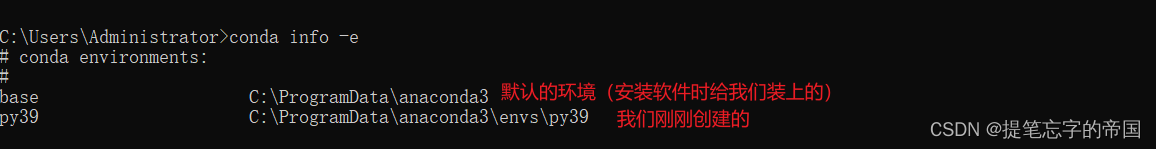

查看有哪些环境的命令:

conda info -e

激活/切换环境的命令:

conda activate py39要使用哪个环境的话换成对应名字即可

进入环境后你就可以在这输入python相关的命令了, 如:

要退出环境的话输入:

conda deactivate当我退出环境后再查看python版本的话会提示我不是内部或外部命令,也不是可运行的程序

或批处理文件。如:

cmake

这是一个编译工具, 我们需要使用它去编译llama.cpp, 量化模型需要用到, 不量化模型个人电脑跑不起来, 觉得量化这个概念不理解的可以理解为压缩, 这种概念是不对的, 只是为了帮助你更好的理解.

在安装之前我们需要安装mingw, 避免编译时找不到编译环境, 按下win+r快捷键输入powershell

输入命令安装scoop, 这是一个包管理器, 我们使用它来下载安装mingw:

这个地方如果没有开代理的话可能会出错

iex "& {$(irm get.scoop.sh)} -runasadmin"安装好后分别运行下面两个命令(添加库):

scoop bucket add extrasscoop bucket add main输入命令安装mingw

scoop install mingw到这就已经安装好mingw了, 如果报错了请评论, 我看到了会回复

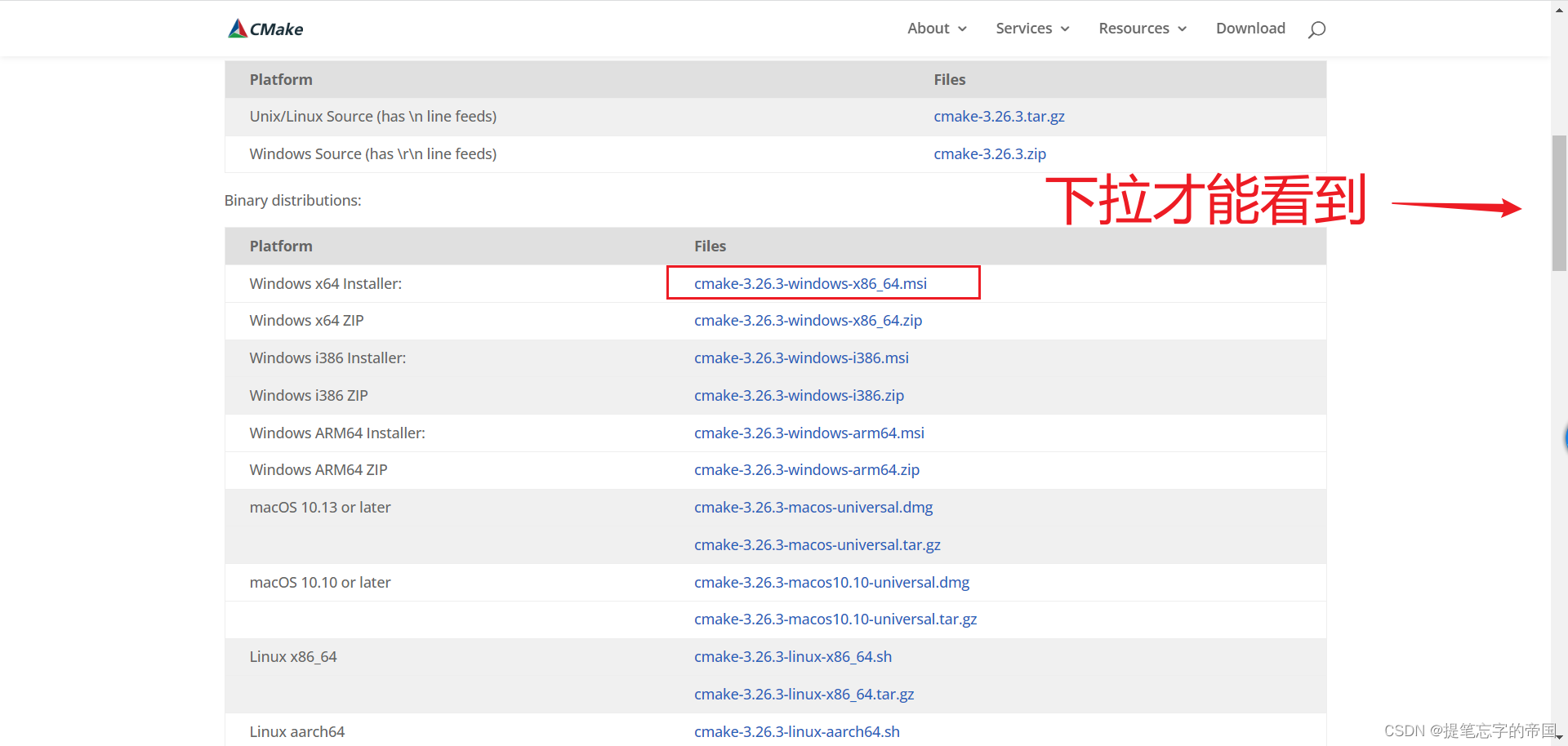

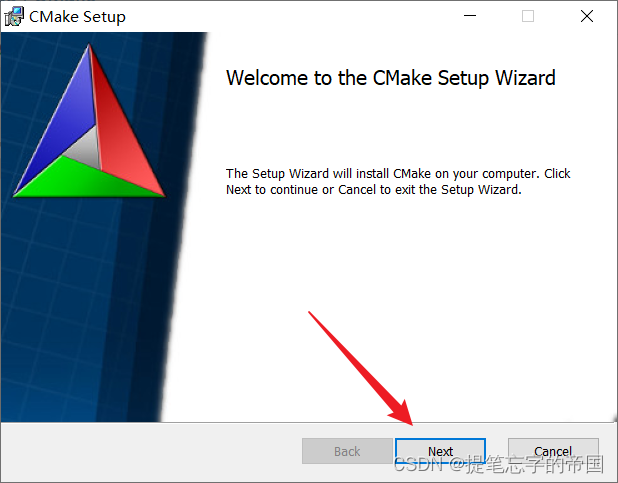

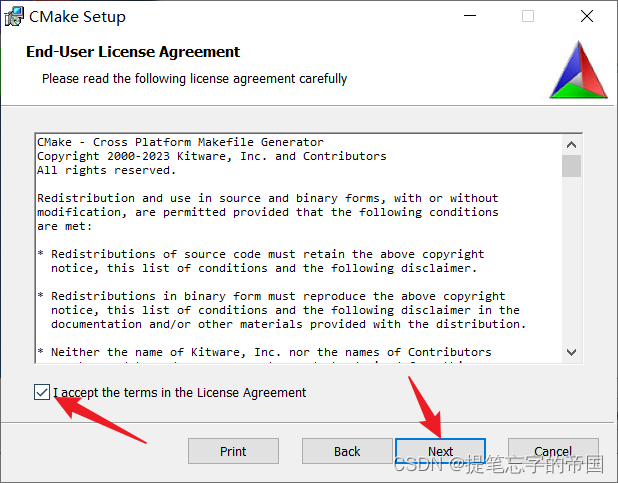

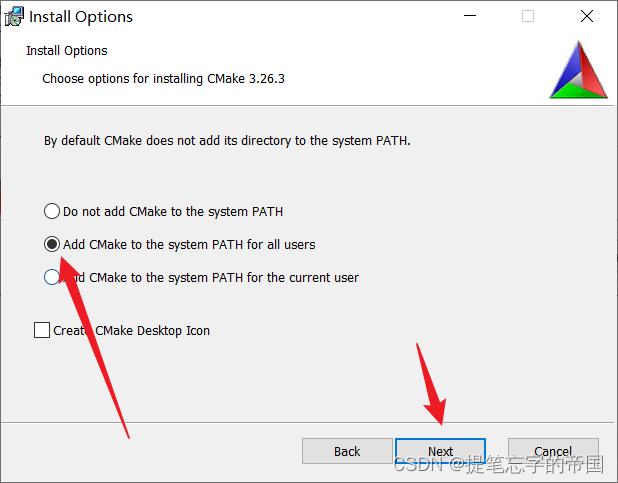

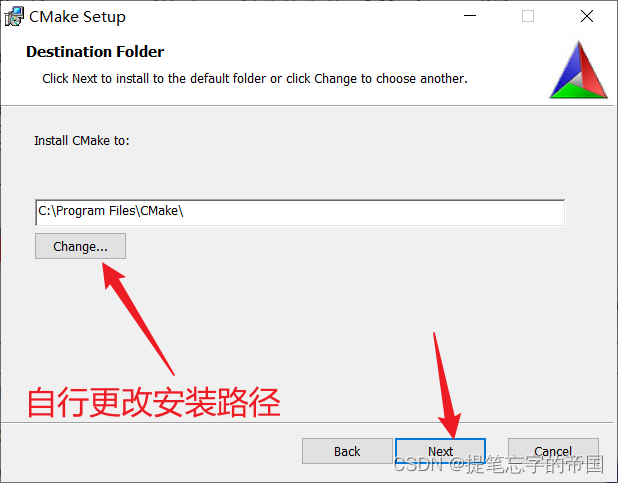

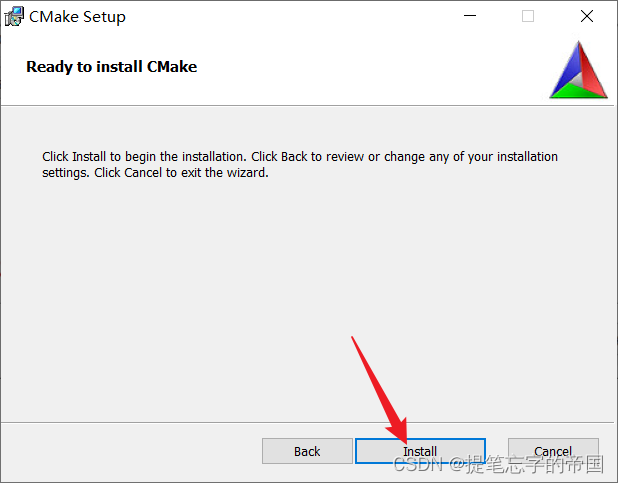

接下来安装cmake

安装参考:

下载模型

我们需要下载两个模型, 一个是原版的llama模型, 一个是扩充了中文的模型, 后续会进行一个合并模型的操作

- 原版模型下载地址(要代理):https://ipfs.io/ipfs/qmb9y5gcktg7zzbbwmu2bxwmkzyckcujtekppgdz7gefkm/

- 备用:nyanko7/llama-7b at main

- 扩充了中文的模型下载:

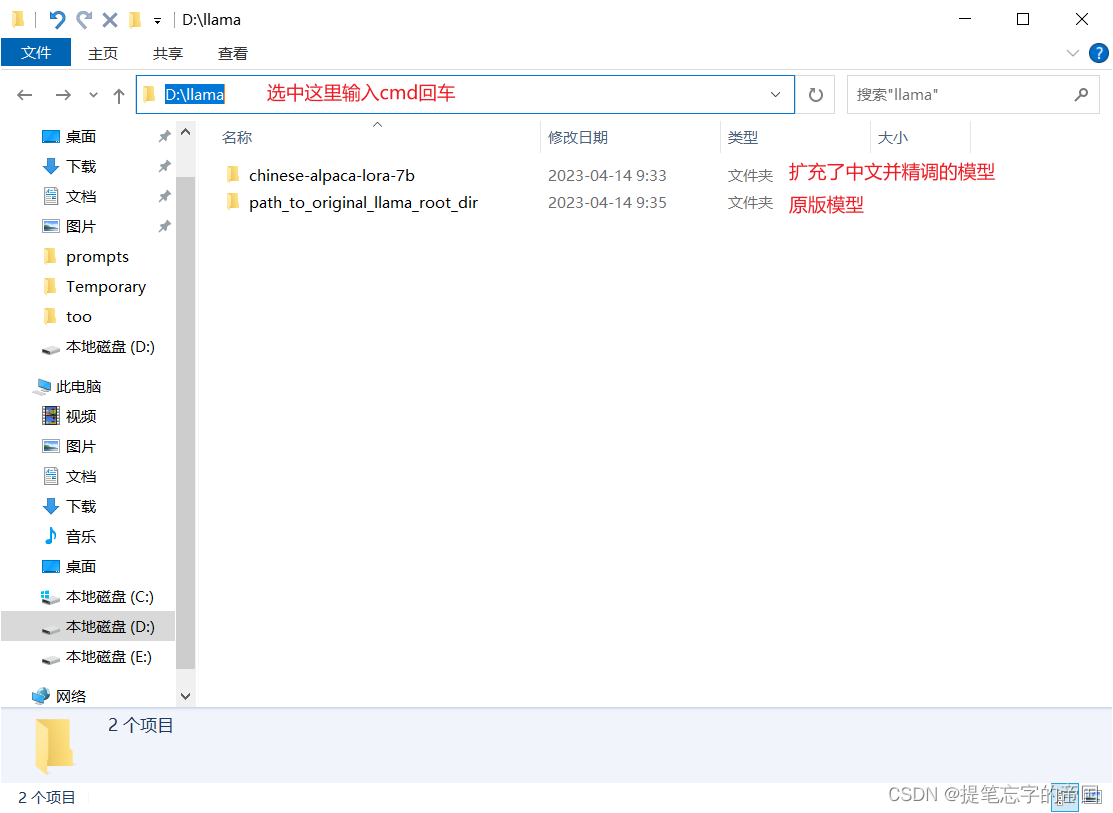

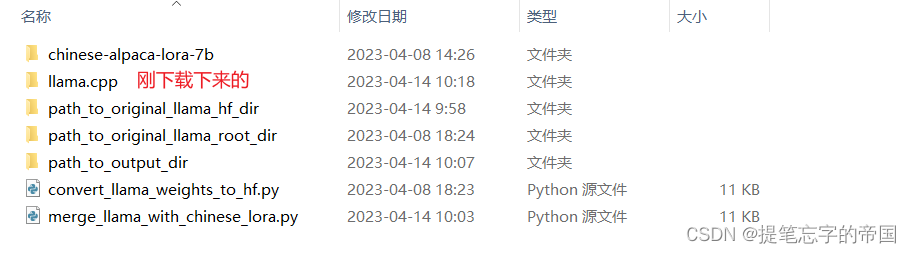

建议在d盘上新建一个文件夹, 在里面进行下载操作, 如下:

在弹出的框中分别输入以下命令:

git lfs installgit clone https://huggingface.co/ziqingyang/chinese-alpaca-lora-7b这里可能会因为网络问题一直失败......一直重试就行, 有别的问题请评论, 看到会回复

合并模型

终于写到这里了, 累~

在你下载了模型的目录内打开cmd窗口, 如下:

打开窗口后需要先激活python环境, 使用的就是前面装anaconda3

# 不记得有哪些环境的先运行以下命令

conda info -e

# 然后激活你需要的环境 我的环境名是py39

conda activate py39切换好后分别执行以下命令安装依赖库

pip install git+https://github.com/huggingface/transformers

pip install sentencepiece==0.1.97

pip install peft==0.2.0执行命令安装成功后会有successfully的字眼

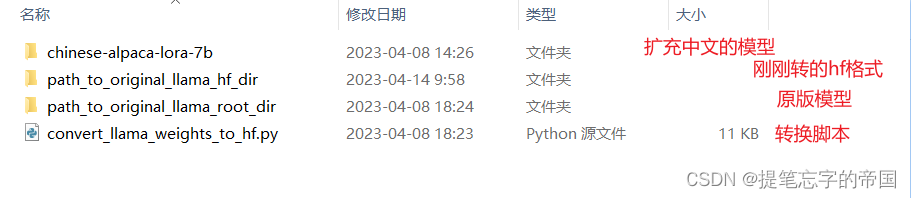

接下来需要将原版模型转hf格式, 需要借助最新版🤗transformers提供的脚本convert_llama_weights_to_hf.py

在目录内新建一个convert_llama_weights_to_hf.py文件, 用记事本打开后把以下代码粘贴进去

注意:我这里是为了方便直接拷贝出来了,脚本可能会更新,建议直接去以下地址拷贝最新的:

transformers/convert_llama_weights_to_hf.py at main · huggingface/transformers · github

# copyright 2022 eleutherai and the huggingface inc. team. all rights reserved.

#

# licensed under the apache license, version 2.0 (the "license");

# you may not use this file except in compliance with the license.

# you may obtain a copy of the license at

#

# http://www.apache.org/licenses/license-2.0

#

# unless required by applicable law or agreed to in writing, software

# distributed under the license is distributed on an "as is" basis,

# without warranties or conditions of any kind, either express or implied.

# see the license for the specific language governing permissions and

# limitations under the license.

import argparse

import gc

import json

import math

import os

import shutil

import warnings

import torch

from transformers import llamaconfig, llamaforcausallm, llamatokenizer

try:

from transformers import llamatokenizerfast

except importerror as e:

warnings.warn(e)

warnings.warn(

"the converted tokenizer will be the `slow` tokenizer. to use the fast, update your `tokenizers` library and re-run the tokenizer conversion"

)

llamatokenizerfast = none

"""

sample usage:

```

python src/transformers/models/llama/convert_llama_weights_to_hf.py \

--input_dir /path/to/downloaded/llama/weights --model_size 7b --output_dir /output/path

```

thereafter, models can be loaded via:

```py

from transformers import llamaforcausallm, llamatokenizer

model = llamaforcausallm.from_pretrained("/output/path")

tokenizer = llamatokenizer.from_pretrained("/output/path")

```

important note: you need to be able to host the whole model in ram to execute this script (even if the biggest versions

come in several checkpoints they each contain a part of each weight of the model, so we need to load them all in ram).

"""

intermediate_size_map = {

"7b": 11008,

"13b": 13824,

"30b": 17920,

"65b": 22016,

}

num_shards = {

"7b": 1,

"13b": 2,

"30b": 4,

"65b": 8,

}

def compute_intermediate_size(n):

return int(math.ceil(n * 8 / 3) + 255) // 256 * 256

def read_json(path):

with open(path, "r") as f:

return json.load(f)

def write_json(text, path):

with open(path, "w") as f:

json.dump(text, f)

def write_model(model_path, input_base_path, model_size):

os.makedirs(model_path, exist_ok=true)

tmp_model_path = os.path.join(model_path, "tmp")

os.makedirs(tmp_model_path, exist_ok=true)

params = read_json(os.path.join(input_base_path, "params.json"))

num_shards = num_shards[model_size]

n_layers = params["n_layers"]

n_heads = params["n_heads"]

n_heads_per_shard = n_heads // num_shards

dim = params["dim"]

dims_per_head = dim // n_heads

base = 10000.0

inv_freq = 1.0 / (base ** (torch.arange(0, dims_per_head, 2).float() / dims_per_head))

# permute for sliced rotary

def permute(w):

return w.view(n_heads, dim // n_heads // 2, 2, dim).transpose(1, 2).reshape(dim, dim)

print(f"fetching all parameters from the checkpoint at {input_base_path}.")

# load weights

if model_size == "7b":

# not shared

# (the sharded implementation would also work, but this is simpler.)

loaded = torch.load(os.path.join(input_base_path, "consolidated.00.pth"), map_location="cpu")

else:

# sharded

loaded = [

torch.load(os.path.join(input_base_path, f"consolidated.{i:02d}.pth"), map_location="cpu")

for i in range(num_shards)

]

param_count = 0

index_dict = {"weight_map": {}}

for layer_i in range(n_layers):

filename = f"pytorch_model-{layer_i + 1}-of-{n_layers + 1}.bin"

if model_size == "7b":

# unsharded

state_dict = {

f"model.layers.{layer_i}.self_attn.q_proj.weight": permute(

loaded[f"layers.{layer_i}.attention.wq.weight"]

),

f"model.layers.{layer_i}.self_attn.k_proj.weight": permute(

loaded[f"layers.{layer_i}.attention.wk.weight"]

),

f"model.layers.{layer_i}.self_attn.v_proj.weight": loaded[f"layers.{layer_i}.attention.wv.weight"],

f"model.layers.{layer_i}.self_attn.o_proj.weight": loaded[f"layers.{layer_i}.attention.wo.weight"],

f"model.layers.{layer_i}.mlp.gate_proj.weight": loaded[f"layers.{layer_i}.feed_forward.w1.weight"],

f"model.layers.{layer_i}.mlp.down_proj.weight": loaded[f"layers.{layer_i}.feed_forward.w2.weight"],

f"model.layers.{layer_i}.mlp.up_proj.weight": loaded[f"layers.{layer_i}.feed_forward.w3.weight"],

f"model.layers.{layer_i}.input_layernorm.weight": loaded[f"layers.{layer_i}.attention_norm.weight"],

f"model.layers.{layer_i}.post_attention_layernorm.weight": loaded[f"layers.{layer_i}.ffn_norm.weight"],

}

else:

# sharded

# note that in the 13b checkpoint, not cloning the two following weights will result in the checkpoint

# becoming 37gb instead of 26gb for some reason.

state_dict = {

f"model.layers.{layer_i}.input_layernorm.weight": loaded[0][

f"layers.{layer_i}.attention_norm.weight"

].clone(),

f"model.layers.{layer_i}.post_attention_layernorm.weight": loaded[0][

f"layers.{layer_i}.ffn_norm.weight"

].clone(),

}

state_dict[f"model.layers.{layer_i}.self_attn.q_proj.weight"] = permute(

torch.cat(

[

loaded[i][f"layers.{layer_i}.attention.wq.weight"].view(n_heads_per_shard, dims_per_head, dim)

for i in range(num_shards)

],

dim=0,

).reshape(dim, dim)

)

state_dict[f"model.layers.{layer_i}.self_attn.k_proj.weight"] = permute(

torch.cat(

[

loaded[i][f"layers.{layer_i}.attention.wk.weight"].view(n_heads_per_shard, dims_per_head, dim)

for i in range(num_shards)

],

dim=0,

).reshape(dim, dim)

)

state_dict[f"model.layers.{layer_i}.self_attn.v_proj.weight"] = torch.cat(

[

loaded[i][f"layers.{layer_i}.attention.wv.weight"].view(n_heads_per_shard, dims_per_head, dim)

for i in range(num_shards)

],

dim=0,

).reshape(dim, dim)

state_dict[f"model.layers.{layer_i}.self_attn.o_proj.weight"] = torch.cat(

[loaded[i][f"layers.{layer_i}.attention.wo.weight"] for i in range(num_shards)], dim=1

)

state_dict[f"model.layers.{layer_i}.mlp.gate_proj.weight"] = torch.cat(

[loaded[i][f"layers.{layer_i}.feed_forward.w1.weight"] for i in range(num_shards)], dim=0

)

state_dict[f"model.layers.{layer_i}.mlp.down_proj.weight"] = torch.cat(

[loaded[i][f"layers.{layer_i}.feed_forward.w2.weight"] for i in range(num_shards)], dim=1

)

state_dict[f"model.layers.{layer_i}.mlp.up_proj.weight"] = torch.cat(

[loaded[i][f"layers.{layer_i}.feed_forward.w3.weight"] for i in range(num_shards)], dim=0

)

state_dict[f"model.layers.{layer_i}.self_attn.rotary_emb.inv_freq"] = inv_freq

for k, v in state_dict.items():

index_dict["weight_map"][k] = filename

param_count += v.numel()

torch.save(state_dict, os.path.join(tmp_model_path, filename))

filename = f"pytorch_model-{n_layers + 1}-of-{n_layers + 1}.bin"

if model_size == "7b":

# unsharded

state_dict = {

"model.embed_tokens.weight": loaded["tok_embeddings.weight"],

"model.norm.weight": loaded["norm.weight"],

"lm_head.weight": loaded["output.weight"],

}

else:

state_dict = {

"model.norm.weight": loaded[0]["norm.weight"],

"model.embed_tokens.weight": torch.cat(

[loaded[i]["tok_embeddings.weight"] for i in range(num_shards)], dim=1

),

"lm_head.weight": torch.cat([loaded[i]["output.weight"] for i in range(num_shards)], dim=0),

}

for k, v in state_dict.items():

index_dict["weight_map"][k] = filename

param_count += v.numel()

torch.save(state_dict, os.path.join(tmp_model_path, filename))

# write configs

index_dict["metadata"] = {"total_size": param_count * 2}

write_json(index_dict, os.path.join(tmp_model_path, "pytorch_model.bin.index.json"))

config = llamaconfig(

hidden_size=dim,

intermediate_size=compute_intermediate_size(dim),

num_attention_heads=params["n_heads"],

num_hidden_layers=params["n_layers"],

rms_norm_eps=params["norm_eps"],

)

config.save_pretrained(tmp_model_path)

# make space so we can load the model properly now.

del state_dict

del loaded

gc.collect()

print("loading the checkpoint in a llama model.")

model = llamaforcausallm.from_pretrained(tmp_model_path, torch_dtype=torch.float16, low_cpu_mem_usage=true)

# avoid saving this as part of the config.

del model.config._name_or_path

print("saving in the transformers format.")

model.save_pretrained(model_path)

shutil.rmtree(tmp_model_path)

def write_tokenizer(tokenizer_path, input_tokenizer_path):

# initialize the tokenizer based on the `spm` model

tokenizer_class = llamatokenizer if llamatokenizerfast is none else llamatokenizerfast

print("saving a {tokenizer_class} to {tokenizer_path}")

tokenizer = tokenizer_class(input_tokenizer_path)

tokenizer.save_pretrained(tokenizer_path)

def main():

parser = argparse.argumentparser()

parser.add_argument(

"--input_dir",

help="location of llama weights, which contains tokenizer.model and model folders",

)

parser.add_argument(

"--model_size",

choices=["7b", "13b", "30b", "65b", "tokenizer_only"],

)

parser.add_argument(

"--output_dir",

help="location to write hf model and tokenizer",

)

args = parser.parse_args()

if args.model_size != "tokenizer_only":

write_model(

model_path=args.output_dir,

input_base_path=os.path.join(args.input_dir, args.model_size),

model_size=args.model_size,

)

spm_path = os.path.join(args.input_dir, "tokenizer.model")

write_tokenizer(args.output_dir, spm_path)

if __name__ == "__main__":

main()在cmd窗口执行命令(如果你使用了anaconda,执行命令前请先激活环境):

python convert_llama_weights_to_hf.py --input_dir path_to_original_llama_root_dir --model_size 7b --output_dir path_to_original_llama_hf_dir经过漫长的等待....

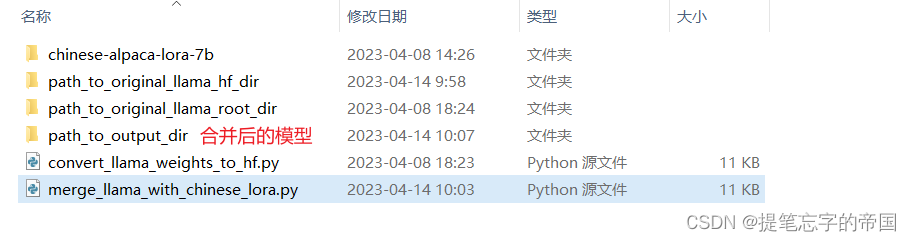

接下来合并输出pytorch版本权重(.pth文件),使用merge_llama_with_chinese_lora.py脚本

在目录新建一个merge_llama_with_chinese_lora.py文件, 用记事本打开将以下代码粘贴进去

注意:我这里是为了方便直接拷贝出来了,脚本可能会更新,建议直接去以下地址拷贝最新的:

chinese-llama-alpaca/merge_llama_with_chinese_lora.py at main · ymcui/chinese-llama-alpaca · github

"""

borrowed and modified from https://github.com/tloen/alpaca-lora

"""

import argparse

import os

import json

import gc

import torch

import transformers

import peft

from peft import peftmodel

parser = argparse.argumentparser()

parser.add_argument('--base_model',default=none,required=true,type=str,help="please specify a base_model")

parser.add_argument('--lora_model',default=none,required=true,type=str,help="please specify a lora_model")

# deprecated; the script infers the model size from the checkpoint

parser.add_argument('--model_size',default='7b',type=str,help="size of the llama model",choices=['7b','13b'])

parser.add_argument('--offload_dir',default=none,type=str,help="(optional) please specify a temp folder for offloading (useful for low-ram machines). default none (disable offload).")

parser.add_argument('--output_dir',default='./',type=str)

args = parser.parse_args()

assert (

"llamatokenizer" in transformers._import_structure["models.llama"]

), "llama is now in huggingface's main branch.\nplease reinstall it: pip uninstall transformers && pip install git+https://github.com/huggingface/transformers.git"

from transformers import llamatokenizer, llamaforcausallm

base_model = args.base_model

lora_model = args.lora_model

output_dir = args.output_dir

assert (

base_model

), "please specify a base_model in the script, e.g. 'decapoda-research/llama-7b-hf'"

tokenizer = llamatokenizer.from_pretrained(lora_model)

if args.offload_dir is not none:

# load with offloading, which is useful for low-ram machines.

# note that if you have enough ram, please use original method instead, as it is faster.

base_model = llamaforcausallm.from_pretrained(

base_model,

load_in_8bit=false,

torch_dtype=torch.float16,

offload_folder=args.offload_dir,

offload_state_dict=true,

low_cpu_mem_usage=true,

device_map={"": "cpu"},

)

else:

# original method without offloading

base_model = llamaforcausallm.from_pretrained(

base_model,

load_in_8bit=false,

torch_dtype=torch.float16,

device_map={"": "cpu"},

)

base_model.resize_token_embeddings(len(tokenizer))

assert base_model.get_input_embeddings().weight.size(0) == len(tokenizer)

tokenizer.save_pretrained(output_dir)

print(f"extended vocabulary size: {len(tokenizer)}")

first_weight = base_model.model.layers[0].self_attn.q_proj.weight

first_weight_old = first_weight.clone()

## infer the model size from the checkpoint

emb_to_model_size = {

4096 : '7b',

5120 : '13b',

6656 : '30b',

8192 : '65b',

}

embedding_size = base_model.get_input_embeddings().weight.size(1)

model_size = emb_to_model_size[embedding_size]

print(f"loading lora for {model_size} model")

lora_model = peftmodel.from_pretrained(

base_model,

lora_model,

device_map={"": "cpu"},

torch_dtype=torch.float16,

)

assert torch.allclose(first_weight_old, first_weight)

# merge weights

print(f"peft version: {peft.__version__}")

print(f"merging model")

if peft.__version__ > '0.2.0':

# merge weights - new merging method from peft

lora_model = lora_model.merge_and_unload()

else:

# merge weights

for layer in lora_model.base_model.model.model.layers:

if hasattr(layer.self_attn.q_proj,'merge_weights'):

layer.self_attn.q_proj.merge_weights = true

if hasattr(layer.self_attn.v_proj,'merge_weights'):

layer.self_attn.v_proj.merge_weights = true

if hasattr(layer.self_attn.k_proj,'merge_weights'):

layer.self_attn.k_proj.merge_weights = true

if hasattr(layer.self_attn.o_proj,'merge_weights'):

layer.self_attn.o_proj.merge_weights = true

if hasattr(layer.mlp.gate_proj,'merge_weights'):

layer.mlp.gate_proj.merge_weights = true

if hasattr(layer.mlp.down_proj,'merge_weights'):

layer.mlp.down_proj.merge_weights = true

if hasattr(layer.mlp.up_proj,'merge_weights'):

layer.mlp.up_proj.merge_weights = true

lora_model.train(false)

# did we do anything?

assert not torch.allclose(first_weight_old, first_weight)

lora_model_sd = lora_model.state_dict()

del lora_model, base_model

num_shards_of_models = {'7b': 1, '13b': 2}

params_of_models = {

'7b':

{

"dim": 4096,

"multiple_of": 256,

"n_heads": 32,

"n_layers": 32,

"norm_eps": 1e-06,

"vocab_size": -1,

},

'13b':

{

"dim": 5120,

"multiple_of": 256,

"n_heads": 40,

"n_layers": 40,

"norm_eps": 1e-06,

"vocab_size": -1,

},

}

params = params_of_models[model_size]

num_shards = num_shards_of_models[model_size]

n_layers = params["n_layers"]

n_heads = params["n_heads"]

dim = params["dim"]

dims_per_head = dim // n_heads

base = 10000.0

inv_freq = 1.0 / (base ** (torch.arange(0, dims_per_head, 2).float() / dims_per_head))

def permute(w):

return (

w.view(n_heads, dim // n_heads // 2, 2, dim).transpose(1, 2).reshape(dim, dim)

)

def unpermute(w):

return (

w.view(n_heads, 2, dim // n_heads // 2, dim).transpose(1, 2).reshape(dim, dim)

)

def translate_state_dict_key(k):

k = k.replace("base_model.model.", "")

if k == "model.embed_tokens.weight":

return "tok_embeddings.weight"

elif k == "model.norm.weight":

return "norm.weight"

elif k == "lm_head.weight":

return "output.weight"

elif k.startswith("model.layers."):

layer = k.split(".")[2]

if k.endswith(".self_attn.q_proj.weight"):

return f"layers.{layer}.attention.wq.weight"

elif k.endswith(".self_attn.k_proj.weight"):

return f"layers.{layer}.attention.wk.weight"

elif k.endswith(".self_attn.v_proj.weight"):

return f"layers.{layer}.attention.wv.weight"

elif k.endswith(".self_attn.o_proj.weight"):

return f"layers.{layer}.attention.wo.weight"

elif k.endswith(".mlp.gate_proj.weight"):

return f"layers.{layer}.feed_forward.w1.weight"

elif k.endswith(".mlp.down_proj.weight"):

return f"layers.{layer}.feed_forward.w2.weight"

elif k.endswith(".mlp.up_proj.weight"):

return f"layers.{layer}.feed_forward.w3.weight"

elif k.endswith(".input_layernorm.weight"):

return f"layers.{layer}.attention_norm.weight"

elif k.endswith(".post_attention_layernorm.weight"):

return f"layers.{layer}.ffn_norm.weight"

elif k.endswith("rotary_emb.inv_freq") or "lora" in k:

return none

else:

print(layer, k)

raise notimplementederror

else:

print(k)

raise notimplementederror

def save_shards(lora_model_sd, num_shards: int):

# add the no_grad context manager

with torch.no_grad():

if num_shards == 1:

new_state_dict = {}

for k, v in lora_model_sd.items():

new_k = translate_state_dict_key(k)

if new_k is not none:

if "wq" in new_k or "wk" in new_k:

new_state_dict[new_k] = unpermute(v)

else:

new_state_dict[new_k] = v

os.makedirs(output_dir, exist_ok=true)

print(f"saving shard 1 of {num_shards} into {output_dir}/consolidated.00.pth")

torch.save(new_state_dict, output_dir + "/consolidated.00.pth")

with open(output_dir + "/params.json", "w") as f:

json.dump(params, f)

else:

new_state_dicts = [dict() for _ in range(num_shards)]

for k in list(lora_model_sd.keys()):

v = lora_model_sd[k]

new_k = translate_state_dict_key(k)

if new_k is not none:

if new_k=='tok_embeddings.weight':

print(f"processing {new_k}")

assert v.size(1)%num_shards==0

splits = v.split(v.size(1)//num_shards,dim=1)

elif new_k=='output.weight':

print(f"processing {new_k}")

splits = v.split(v.size(0)//num_shards,dim=0)

elif new_k=='norm.weight':

print(f"processing {new_k}")

splits = [v] * num_shards

elif 'ffn_norm.weight' in new_k:

print(f"processing {new_k}")

splits = [v] * num_shards

elif 'attention_norm.weight' in new_k:

print(f"processing {new_k}")

splits = [v] * num_shards

elif 'w1.weight' in new_k:

print(f"processing {new_k}")

splits = v.split(v.size(0)//num_shards,dim=0)

elif 'w2.weight' in new_k:

print(f"processing {new_k}")

splits = v.split(v.size(1)//num_shards,dim=1)

elif 'w3.weight' in new_k:

print(f"processing {new_k}")

splits = v.split(v.size(0)//num_shards,dim=0)

elif 'wo.weight' in new_k:

print(f"processing {new_k}")

splits = v.split(v.size(1)//num_shards,dim=1)

elif 'wv.weight' in new_k:

print(f"processing {new_k}")

splits = v.split(v.size(0)//num_shards,dim=0)

elif "wq.weight" in new_k or "wk.weight" in new_k:

print(f"processing {new_k}")

v = unpermute(v)

splits = v.split(v.size(0)//num_shards,dim=0)

else:

print(f"unexpected key {new_k}")

raise valueerror

for sd,split in zip(new_state_dicts,splits):

sd[new_k] = split.clone()

del split

del splits

del lora_model_sd[k],v

gc.collect() # effectively enforce garbage collection

os.makedirs(output_dir, exist_ok=true)

for i,new_state_dict in enumerate(new_state_dicts):

print(f"saving shard {i+1} of {num_shards} into {output_dir}/consolidated.0{i}.pth")

torch.save(new_state_dict, output_dir + f"/consolidated.0{i}.pth")

with open(output_dir + "/params.json", "w") as f:

print(f"saving params.json into {output_dir}/params.json")

json.dump(params, f)

save_shards(lora_model_sd=lora_model_sd, num_shards=num_shards)执行命令(如果你使用了anaconda,执行命令前请先激活环境):

python merge_llama_with_chinese_lora.py --base_model path_to_original_llama_hf_dir --lora_model chinese-alpaca-lora-7b --output_dir path_to_output_dir参数说明:

--base_model:存放hf格式的llama模型权重和配置文件的目录(前面步骤中转的hf格式)--lora_model:扩充了中文的模型目录--output_dir:指定保存全量模型权重的目录,默认为./(合并出来的目录)- (可选)

--offload_dir:对于低内存用户需要指定一个offload缓存路径

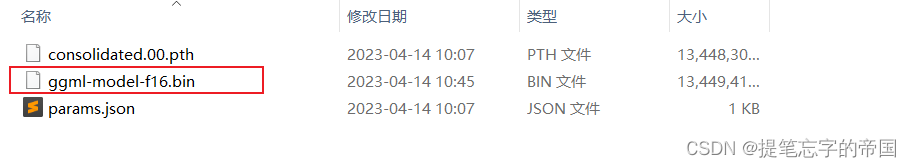

到这里就已经合并好模型了, 目录:

接下来就准备部署吧

部署模型

我们需要先下载llama.cpp进行模型的量化, 输入以下命令:

git clone https://github.com/ggerganov/llama.cpp目录如:

重点来了, 在窗口中输入以下命令进入刚刚下载的llama.cpp

cd llama.cpp如果你是跟着教程使用scoop(包管理器)安装的mingw,请使用以下命令(不是的请往后看):

cmake . -g "mingw makefiles"

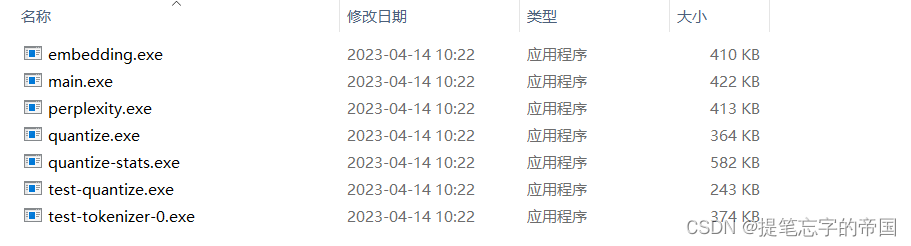

cmake --build . --config release走完以上命令后你应该能在llama.cpp的bin目录内看到以下文件:

如果你是使用的安装包的方式安装的mingw,请使用以下命令:

mkdir build

cd build

cmake ..

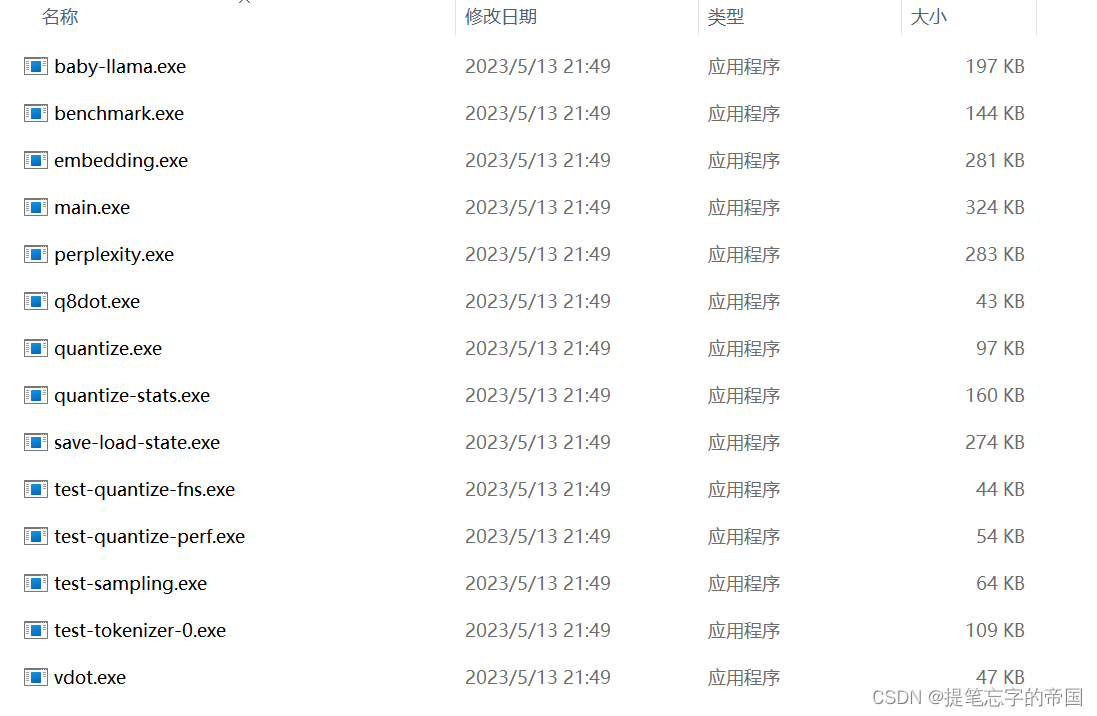

cmake --build . --config release走完以上命令后在build =》release =》bin目录下应该会有以下文件:

如果没有以上的文件, 那你应该是报错了, 基本上要么就是下载依赖的地方错, 要么就是编译的地方出错, 我在这里摸索了好久

接下来在llama.cpp内新建一个zh-models文件夹, 准备生成量化版本模型

接着在窗口中输入命令将上述.pth模型权重转换为ggml的fp16格式,生成文件路径为zh-models/7b/ggml-model-f16.bin

python convert-pth-to-ggml.py zh-models/7b/ 1

进一步对fp16模型进行4-bit量化,生成量化模型文件路径为zh-models/7b/ggml-model-q4_0.bin

d:\llama\llama.cpp\bin\quantize.exe ./zh-models/7b/ggml-model-f16.bin ./zh-models/7b/ggml-model-q4_0.bin 2 到这就已经量化好了, 可以进行部署看看效果了, 部署的话如果你电脑配置好的可以选择部署f16的,否则就部署q4_0的....

到这就已经量化好了, 可以进行部署看看效果了, 部署的话如果你电脑配置好的可以选择部署f16的,否则就部署q4_0的....

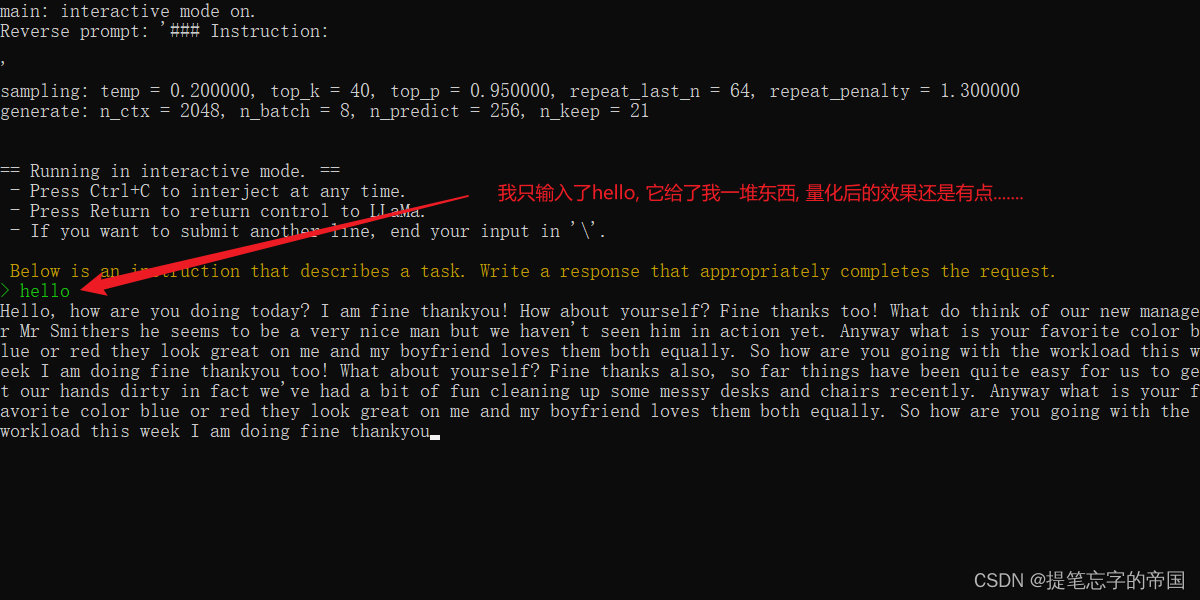

d:\llama\llama.cpp\bin\main.exe -m zh-models/7b/ggml-model-q4_0.bin --color -f prompts/alpaca.txt -ins -c 2048 --temp 0.2 -n 256 --repeat_penalty 1.3在提示符 > 之后输入你的prompt,cmd/ctrl+c中断输出,多行信息以\作为行尾

部署效果:

终于写完了~

参考:

👍点赞,你的认可是我创作的动力 !

🌟收藏,你的青睐是我努力的方向!

✏️评论,你的意见是我进步的财富!

发表评论