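With OpenAI's surge in popularity, people are flocking to AI development. For this post I used Python and Selenium to scrape tens of thousands of job and salary records from LinkedIn, then explored them visually with pandas, Matplotlib, and seaborn.

LinkedIn has anti-scraping measures that rate-limit and ban IP addresses, so the scraper needs IP proxies to spread its requests across different IPs. I used Bright Data's (亮数据) proxy service here.

Bright Data provides web data collection solutions. According to the company, it runs the world's largest proxy IP network, covering more than 195 countries and regions with over 72 million unique residential IP addresses.

These IP addresses can be used for browsing anonymously, getting around IP blocks, scraping web data, and so on.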
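
To make the idea of rotating IPs concrete, here is a minimal sketch of how proxy settings are usually shaped for the `requests` library. The host names, port, and credentials are placeholders I made up, not real Bright Data endpoints; the actual values come from your own proxy account.

```python
from itertools import cycle


def build_proxies(host, port, username, password):
    """Return a proxies mapping in the shape that requests accepts."""
    proxy_url = f"http://{username}:{password}@{host}:{port}"
    # The same HTTP proxy endpoint handles both http:// and https:// targets.
    return {"http": proxy_url, "https": proxy_url}


def make_proxy_pool(endpoints):
    """Cycle endlessly over the endpoints, yielding one proxies mapping per call."""
    return cycle([build_proxies(**endpoint) for endpoint in endpoints])


# Placeholder endpoints (hypothetical values, for illustration only).
ENDPOINTS = [
    {"host": "proxy-a.example.com", "port": 8000, "username": "user", "password": "secret"},
    {"host": "proxy-b.example.com", "port": 8000, "username": "user", "password": "secret"},
]

proxy_pool = make_proxy_pool(ENDPOINTS)
first = next(proxy_pool)
second = next(proxy_pool)
third = next(proxy_pool)  # wraps around to the first endpoint again
print(first["https"])
```

Each outgoing request would then pass `proxies=next(proxy_pool)` (together with a `timeout`) to `requests.get(...)` or `session.get(...)`, so consecutive requests leave through different IPs.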

Bright Data's official site:

https://get.brightdata.com/weijun

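Bright Data also offers a range of data collection tools that help businesses collect web data, including Web Scraper IDE, the Bright Data browser, SERP API, and more.

Below are the steps and code for scraping LinkedIn with Python.

- 1. Scraping AI job data: Selenium & Bright Data

- 2. Processing and cleaning the data: pandas

- 3. Exploring the data visually: Matplotlib & seaborn

1. Scraping AI job data: Selenium & Bright Data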

    # Import the required libraries
    import random
    import time

    import pandas as pd
    import requests
    from selenium import webdriver
    from selenium.webdriver.common.by import By

    # Helper functions from this project's own scripts package
    from scripts.helpers import strip_val, get_value_by_path

    # Use the Edge browser
    browser = 'edge'


    # Create a web session and log in to LinkedIn.
    # create_session starts an automated browser session and logs in to LinkedIn
    # with the given email and password.
    # It first picks the browser driver (Chrome or Edge) based on the browser variable,
    # then opens the LinkedIn login page, fills in the login form, and submits it.
    # After a successful login it reads the cookies of the current session and copies
    # them into a requests.Session object, so that later HTTP requests stay logged in.
    # Finally it returns that session object.
    def create_session(email, password):
        if browser == 'chrome':
            driver = webdriver.Chrome()
        elif browser == 'edge':
            driver = webdriver.Edge()
        else:
            raise ValueError('unsupported browser: {}'.format(browser))

        # Fill in the login form
        driver.get('https://www.linkedin.com/checkpoint/rm/sign-in-another-account')
        time.sleep(1)
        driver.find_element(By.ID, 'username').send_keys(email)
        driver.find_element(By.ID, 'password').send_keys(password)
        driver.find_element(By.XPATH, '//*[@id="organic-div"]/form/div[3]/button').click()
        time.sleep(1)

        # Pause so that any verification step can be completed by hand
        input('press enter after a successful login for "{}": '.format(email))
        driver.get('https://www.linkedin.com/jobs/search/?')
        time.sleep(1)
        cookies = driver.get_cookies()
        driver.quit()

        # Copy the browser cookies into a requests session
        session = requests.Session()
        for cookie in cookies:
            session.cookies.set(cookie['name'], cookie['value'])
        return session

    # Read the login emails and passwords for the given method from logins.csv
    def get_logins(method):
        logins = pd.read_csv('logins.csv')
        logins = logins[logins['method'] == method]
        emails = logins['emails'].tolist()
        passwords = logins['passwords'].tolist()
        return emails, passwords
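
The post does not show `logins.csv` itself, only that `get_logins` reads the `method`, `emails`, and `passwords` columns from it. Below is a sketch of what such a file might contain and how the filter behaves. It uses the standard library `csv` module instead of pandas so it runs without extra packages, and the rows (including the `details` method value) are invented for illustration.

```python
import csv
import io

# Hypothetical contents of logins.csv; only the column names come from get_logins.
SAMPLE_LOGINS = """method,emails,passwords
search,alice@example.com,pw-alice
details,bob@example.com,pw-bob
search,carol@example.com,pw-carol
"""


def get_logins_from_text(csv_text, method):
    """Return (emails, passwords) for the rows whose method column matches."""
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = [row for row in reader if row["method"] == method]
    emails = [row["emails"] for row in rows]
    passwords = [row["passwords"] for row in rows]
    return emails, passwords


emails, passwords = get_logins_from_text(SAMPLE_LOGINS, "search")
print(emails)
```

Keeping one row per account and tagging it with a method makes it easy to reserve some accounts for searching and others for different request types.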

    # The JobSearchRetriever class retrieves job postings from LinkedIn.
    # On initialization it stores the job search link and uses the saved credentials
    # to create several logged-in sessions.
    # Its get_jobs method sends an HTTP GET request to LinkedIn's job search API through
    # one of those sessions and parses the response to extract each job's ID and title.
    # Postings marked as promoted (i.e. ads) are recorded as sponsored.
    # Note: the endpoint, x-li-track value, and JSON keys below are reproduced in the
    # lower-case form in which they appear in this post; they are probably case-sensitive
    # on the live API, so check them against a real response before relying on them.
    class JobSearchRetriever:
        def __init__(self):
            self.job_search_link = 'https://www.linkedin.com/voyager/api/voyagerjobsdashjobcards?decorationid=com.linkedin.voyager.dash.deco.jobs.search.jobsearchcardscollection-187&count=100&q=jobsearch&query=(origin:job_search_page_other_entry,selectedfilters:(sortby:list(dd)),spellcorrectionenabled:true)&start=0'
            emails, passwords = get_logins('search')
            self.sessions = [create_session(email, password) for email, password in zip(emails, passwords)]
            self.session_index = 0
            # One set of request headers per session
            self.headers = [{
                'authority': 'www.linkedin.com',
                'method': 'get',
                'path': 'voyager/api/voyagerjobsdashjobcards?decorationid=com.linkedin.voyager.dash.deco.jobs.search.jobsearchcardscollection-187&count=25&q=jobsearch&query=(origin:job_search_page_other_entry,selectedfilters:(sortby:list(dd)),spellcorrectionenabled:true)&start=0',
                'scheme': 'https',
                'accept': 'application/vnd.linkedin.normalized+json+2.1',
                'accept-encoding': 'gzip, deflate, br',
                'accept-language': 'en-US,en;q=0.9',
                'cookie': "; ".join([f"{key}={value}" for key, value in session.cookies.items()]),
                # The CSRF token is the JSESSIONID cookie value without its surrounding quotes
                'csrf-token': session.cookies.get('JSESSIONID').strip('"'),
                # 'te': 'trailers',
                'user-agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36',
                # 'x-li-track': '{"clientversion":"1.12.7990","mpversion":"1.12.7990","osname":"web","timezoneoffset":-7,"timezone":"america/los_angeles","deviceformfactor":"desktop","mpname":"voyager-web","displaydensity":1,"displaywidth":1920,"displayheight":1080}'
                'x-li-track': '{"clientversion":"1.13.5589","mpversion":"1.13.5589","osname":"web","timezoneoffset":-7,"timezone":"america/los_angeles","deviceformfactor":"desktop","mpname":"voyager-web","displaydensity":1,"displaywidth":360,"displayheight":800}'
            } for session in self.sessions]

            # Add the Bright Data proxy IPs here
            # self.proxies = [{'http': f'http://{proxy}', 'https': f'http://{proxy}'} for proxy in []]

        # get_jobs sends an HTTP request to LinkedIn's job search API to fetch job postings.
        # It uses the current session index to pick a session and sends a GET request with
        # the matching headers. If the response status code is 200 (success), it parses the
        # JSON response, extracts each job's ID, title, and sponsored flag, and stores them
        # in a dictionary.
        def get_jobs(self):
            results = self.sessions[self.session_index].get(self.job_search_link, headers=self.headers[self.session_index])  # , proxies=self.proxies[self.session_index], timeout=5)
            # Rotate to the next session for the following call
            self.session_index = (self.session_index + 1) % len(self.sessions)

            if results.status_code != 200:
                raise Exception('status code {} for search\ntext: {}'.format(results.status_code, results.text))
            results = results.json()

            job_ids = {}
            for r in results['included']:
                if r['$type'] == 'com.linkedin.voyager.dash.jobs.jobpostingcard' and 'referenceid' in r:
                    job_id = int(strip_val(r['jobpostingurn'], 1))
                    job_ids[job_id] = {'sponsored': False}
                    job_ids[job_id]['title'] = r.get('jobpostingtitle')
                    for x in r['footeritems']:
                        if x.get('type') == 'promoted':
                            job_ids[job_id]['sponsored'] = True
                            break

            return job_ids

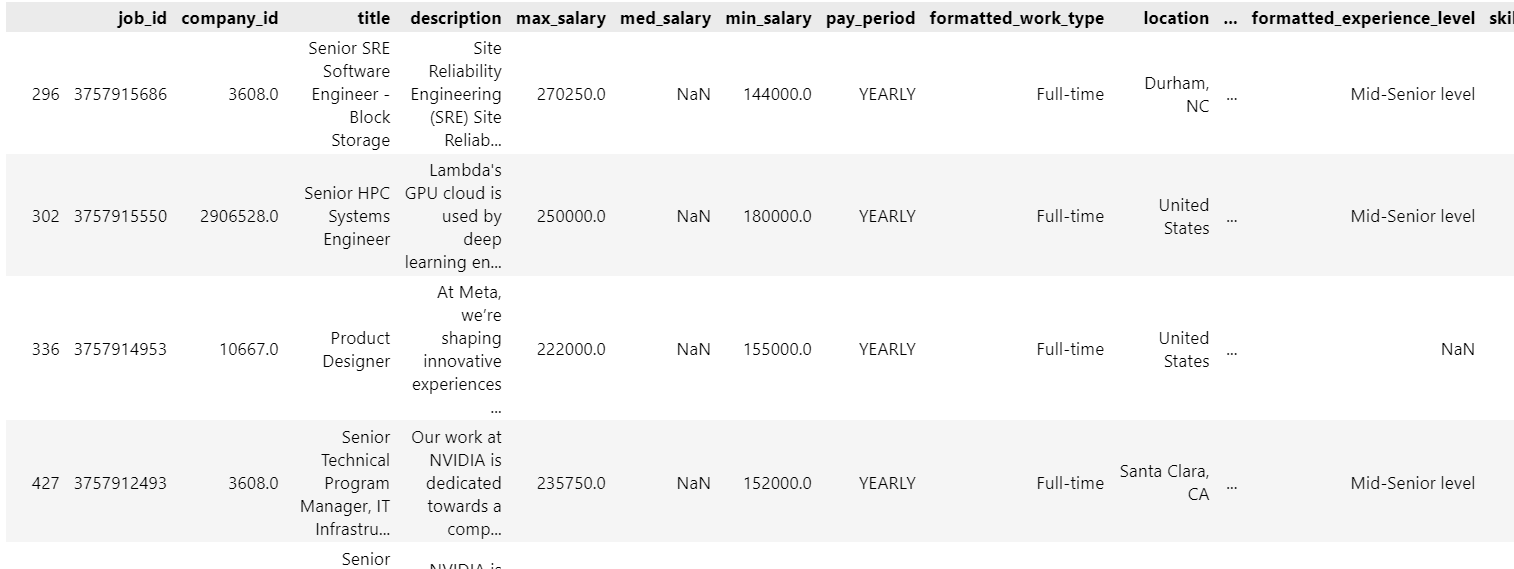
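
The parsing loop in get_jobs can be tried offline on a hand-made payload. The sketch below is only an illustration under a few assumptions: the sample payload is invented, its keys are spelled the way they appear in this post, and `last_urn_part` is a hypothetical stand-in for `strip_val`, whose source is not shown here.

```python
def last_urn_part(urn):
    """Hypothetical stand-in for strip_val: take the text after the last colon."""
    return urn.rsplit(":", 1)[-1]


def parse_job_cards(payload):
    """Map job ID -> {'sponsored': bool, 'title': str or None} for each job card."""
    jobs = {}
    for item in payload.get("included", []):
        is_card = item.get("$type") == "com.linkedin.voyager.dash.jobs.jobpostingcard"
        if not (is_card and "referenceid" in item):
            continue  # skip entries that are not job cards
        job_id = int(last_urn_part(item["jobpostingurn"]))
        sponsored = any(f.get("type") == "promoted" for f in item.get("footeritems", []))
        jobs[job_id] = {"sponsored": sponsored, "title": item.get("jobpostingtitle")}
    return jobs


# Invented payload, shaped like the fields the loop reads.
SAMPLE = {
    "included": [
        {
            "$type": "com.linkedin.voyager.dash.jobs.jobpostingcard",
            "referenceid": "abc",
            "jobpostingurn": "urn:li:fsd_jobposting:1001",
            "jobpostingtitle": "Machine Learning Engineer",
            "footeritems": [{"type": "promoted"}],
        },
        {
            "$type": "com.linkedin.voyager.dash.jobs.jobpostingcard",
            "referenceid": "def",
            "jobpostingurn": "urn:li:fsd_jobposting:1002",
            "jobpostingtitle": "Data Analyst",
            "footeritems": [{"type": "listed_date"}],
        },
        {"$type": "some.other.type"},
    ]
}

jobs = parse_job_cards(SAMPLE)
print(jobs)
```

Separating the parsing from the network call like this makes it possible to test the extraction logic without logging in or spending proxy traffic.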

2. Processing and cleaning the data: pandas

    # Import the required libraries
    import pandas as pd
    import matplotlib.pyplot as plt
    import seaborn as sns
    from wordcloud import WordCloud

    # Load the job postings
    job_postings = pd.read_csv('./archive/job_postings.csv')
    job_postings

    # Keywords used to pick out AI-related postings
    keywords = ['data scientist', 'machine learning', 'data science', 'data analyst', 'ml engineer', 'data engineer', 'ai engineer', 'ai/ml', 'ai/nlp', 'ai researcher', 'ai consultant', 'artificial intelligence', 'computer vision', 'deep learning']

    # Add a column that marks whether the description contains any keyword
    def check_keywords(description):
        text = str(description).lower()
        for keyword in keywords:
            if keyword in text:
                return 'AI job'
        return 'non-AI job'

    job_postings['is_programmer'] = job_postings['description'].apply(check_keywords)

    # Save the AI postings as a new table: yearly pay only, max salary above 10,000
    job_ai = job_postings[(job_postings['is_programmer'] == 'AI job') & (job_postings['pay_period'] == 'yearly') & (job_postings['max_salary'] > 10000)]

    # Non-AI postings for comparison, additionally capped at 200,000
    job_others = job_postings[(job_postings['is_programmer'] == 'non-AI job') & (job_postings['pay_period'] == 'yearly') & (job_postings['max_salary'] > 10000) & (job_postings['max_salary'] < 200000)]

    job_ai
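
One caveat with plain substring matching is that a short keyword can match inside a longer word; for example, "ai consultant" is a substring of "dubai consultant". The sketch below shows one possible alternative (not the approach used in this post): a compiled regular expression that only accepts keywords as whole phrases. The keyword list is shortened and the sample descriptions are invented.

```python
import re

KEYWORDS = ["data scientist", "machine learning", "ai engineer", "ai consultant", "ai/ml"]

# Lookarounds stand in for \b so that keywords containing "/" are handled too.
PATTERN = re.compile(
    r"(?<!\w)(?:" + "|".join(re.escape(k) for k in KEYWORDS) + r")(?!\w)",
    flags=re.IGNORECASE,
)


def label_posting(description):
    """Return 'AI job' when a keyword appears as a whole phrase, else 'non-AI job'."""
    text = "" if description is None else str(description)
    return "AI job" if PATTERN.search(text) else "non-AI job"


samples = [
    "We are hiring a Data Scientist for our growth team.",
    "Dubai consultant needed for a retail client.",  # substring search would match 'ai consultant'
    "Join our AI/ML platform group.",
    None,  # missing description
]
labels = [label_posting(s) for s in samples]
print(labels)
```

Whether the stricter matching is worth it depends on how noisy the descriptions are; on a large dataset it mainly reduces false positives from short keywords.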

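The processed data looks like this:

3. Exploring the data visually: Matplotlib & seaborn

Based on the max_salary column, the median annual salary for AI jobs is about USD 180,000, and the highest exceed USD 500,000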

    # Set the seaborn style and the box color
    sns.set_style("whitegrid")
    box_color = "skyblue"  # a hex code such as "#87ceeb" works as well

    # Box plot
    plt.figure(figsize=(8, 6))
    sns.boxplot(y='max_salary', data=job_ai, color=box_color)
    plt.ylabel('yearly salary')
    plt.title('ai yearly salary boxplot')

    # Label the quartiles
    quantiles = job_ai['max_salary'].quantile([0.25, 0.5, 0.75])
    for q, label in zip(quantiles, ['q1', 'median', 'q3']):
        plt.text(0, q, f'{label}: {int(q)}', horizontalalignment='center', verticalalignment='bottom', fontdict={'size': 10})

    # Label the mean, maximum, and minimum
    avg_value = job_ai['max_salary'].mean()
    max_value = job_ai['max_salary'].max()
    min_value = job_ai['max_salary'].min()
    plt.text(0.2, avg_value, f'avg: {int(avg_value)}', ha='left', va='bottom', fontdict={'size': 10})
    plt.text(0, max_value, f'max: {int(max_value)}', ha='center', va='bottom', fontdict={'size': 10})
    plt.text(0, min_value, f'min: {int(min_value)}', ha='center', va='top', fontdict={'size': 10})

    # Show the figure
    plt.show()
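
For readers unfamiliar with what the box plot annotations mean, here is a small worked example of the quartile arithmetic using only the standard library. The salary values are made up purely to show the calculation and are not taken from the scraped dataset.

```python
import statistics

# Made-up salaries, only to show the arithmetic.
salaries = [100_000, 150_000, 180_000, 250_000, 500_000]

# method="inclusive" interpolates linearly between data points,
# the same rule pandas uses for Series.quantile by default.
q1, median, q3 = statistics.quantiles(salaries, n=4, method="inclusive")
iqr = q3 - q1  # height of the box

# Points more than 1.5 * IQR beyond the box are drawn as outliers by default.
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr
outliers = [s for s in salaries if s < lower_fence or s > upper_fence]

print(q1, median, q3, iqr, outliers)
```

In this toy sample the single very high salary pulls the mean above the median, which is why it helps to label both on a salary box plot.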

Annual salaries for AI jobs are mostly concentrated between USD 150,000 and USD 300,000

    # Histogram
    plt.figure(figsize=(10, 6))
    plt.hist(job_ai['max_salary'], bins=30, color='skyblue', edgecolor='black')
    plt.xlabel('yearly salary')
    plt.ylabel('frequency')
    plt.title('yearly salary distribution')
    plt.show()

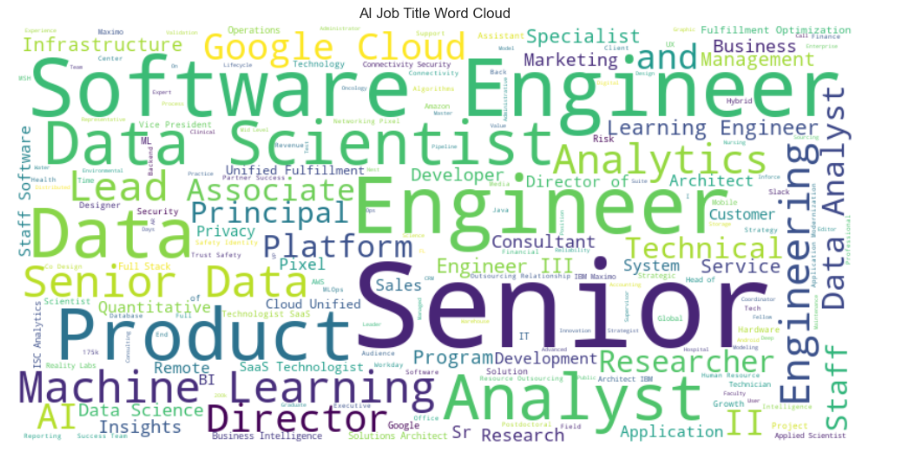
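
As a rough illustration of what the bins parameter does, the sketch below counts values into equal-width bins by hand. It is a simplified stand-in for the library's binning, written under the assumption that the last bin includes the maximum value, and the salaries are invented.

```python
def histogram_counts(values, bins):
    """Count values into `bins` equal-width intervals spanning min(values)..max(values)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        raise ValueError("all values are identical; cannot form equal-width bins")
    width = (hi - lo) / bins
    counts = [0] * bins
    for v in values:
        # The maximum value is placed in the last bin instead of a bin of its own.
        index = min(int((v - lo) / width), bins - 1)
        counts[index] += 1
    edges = [lo + i * width for i in range(bins + 1)]
    return counts, edges


# Invented salaries, for illustration only.
salaries = [100_000, 120_000, 150_000, 180_000, 200_000, 300_000]
counts, edges = histogram_counts(salaries, bins=4)
print(counts)
print(edges)
```

With more bins the bars get narrower and the shape gets noisier, so a value such as 30 is a judgment call that depends on how many postings are in the sample.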

AI postings are mostly for senior roles, and most often call for software development, machine learning, and data science

    # Word cloud of job titles
    from wordcloud import WordCloud

    stopwords = {"manager"}
    # Drop missing titles so that join only sees strings
    job_titles_text = ' '.join(job_ai['title'].dropna().astype(str))
    title_cloud = WordCloud(width=800, height=400, background_color='white', stopwords=stopwords).generate(job_titles_text)

    # Show the word cloud
    plt.figure(figsize=(10, 6))
    plt.imshow(title_cloud, interpolation='bilinear')
    plt.title('ai job title word cloud')
    plt.axis('off')
    plt.tight_layout()
    plt.show()
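
A word cloud is essentially a picture of word frequencies, so the same information can be checked as plain counts. The sketch below is a simplified approximation with the standard library; the real word cloud library applies extra processing that is not reproduced here, and the titles are invented.

```python
import re
from collections import Counter


def title_word_counts(titles, stopwords=frozenset()):
    """Count lower-cased words across all titles, skipping the stopwords."""
    counts = Counter()
    for title in titles:
        for word in re.findall(r"[a-z]+", str(title).lower()):
            if word not in stopwords:
                counts[word] += 1
    return counts


# Invented titles, for illustration only.
titles = [
    "Senior Machine Learning Engineer",
    "Senior Data Scientist",
    "Data Engineering Manager",
    "Machine Learning Manager",
]
counts = title_word_counts(titles, stopwords={"manager"})
print(counts.most_common(5))
```

Looking at the raw counts alongside the image makes it easier to back up a statement such as "most postings are senior roles" with an actual number.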

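In this dataset, the average annual salary for AI jobs comes to about USD 180,000, well above that of ordinary development roles, and demand for AI roles is also growing explosively.

I used Bright Data's proxy IP service for this project. The quality was quite good, so it is worth a try.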

