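System initialization

A production environment needs higher specifications; the virtual machines here use a conservative minimum configuration.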

| Machine | IP | Specs |
|---|---|---|
| master | 192.168.203.11 | 1 core / 2 threads, 2 GB RAM, 40 GB disk |
| node1 | 192.168.203.12 | 1 core / 2 threads, 2 GB RAM, 40 GB disk |
| node2 | 192.168.203.13 | 1 core / 2 threads, 2 GB RAM, 40 GB disk |

Switch to a static IP

sudo vi /etc/resolv.conf

Append the following, then save and exit:

nameserver 223.5.5.5
nameserver 223.6.6.6

sudo vi /etc/sysconfig/network-scripts/ifcfg-ens33

Change BOOTPROTO="dhcp" to BOOTPROTO="static". If the machine was cloned from another one, UUID and IPADDR must also differ between the machines.

TYPE="Ethernet"
PROXY_METHOD="none"
BROWSER_ONLY="no"
BOOTPROTO="static"
DEFROUTE="yes"
IPV4_FAILURE_FATAL="no"
IPV6INIT="yes"
IPV6_AUTOCONF="yes"
IPV6_DEFROUTE="yes"
IPV6_FAILURE_FATAL="no"
IPV6_ADDR_GEN_MODE="stable-privacy"
NAME="ens33"
UUID="0ef41c81-2fa8-405d-9ab5-3ff34ac815cf"
DEVICE="ens33"
ONBOOT="yes"
IPADDR="192.168.203.11"
PREFIX="24"
GATEWAY="192.168.203.2"
IPV6_PRIVACY="no"
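Because each cloned machine needs its own IPADDR, the edit can also be scripted instead of done by hand in vi. The following is a minimal sketch, not part of the original procedure: `set_ifcfg_value` is a hypothetical helper name, and the demonstration works on a scratch file rather than the live ifcfg-ens33.

```shell
# Hypothetical helper: set KEY="VALUE" in an ifcfg-style file.
# An existing KEY line is replaced; a missing key is appended.
set_ifcfg_value() {
    file=$1 key=$2 value=$3
    if grep -q "^${key}=" "$file"; then
        sed -i "s|^${key}=.*|${key}=\"${value}\"|" "$file"
    else
        printf '%s="%s"\n' "$key" "$value" >> "$file"
    fi
}

# Demonstration on a scratch copy (assumption: a DHCP config as the starting point).
cfg=$(mktemp)
printf 'BOOTPROTO="dhcp"\nNAME="ens33"\nONBOOT="yes"\n' > "$cfg"

set_ifcfg_value "$cfg" BOOTPROTO static
set_ifcfg_value "$cfg" IPADDR 192.168.203.12
set_ifcfg_value "$cfg" PREFIX 24
set_ifcfg_value "$cfg" GATEWAY 192.168.203.2
cat "$cfg"
```

To apply it for real, the helper would need to run as root against /etc/sysconfig/network-scripts/ifcfg-ens33, followed by a network restart.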

Restart the network so the configuration takes effect

sudo systemctl restart network

Permanently disable the firewall (all machines)

sudo systemctl stop firewalld && sudo systemctl disable firewalld

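Permanently disable SELinux (all machines)

sudo sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

To switch off enforcement for the current session only, the command is setenforce 0 [not required here; recorded for reference only]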
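The key in /etc/selinux/config is uppercase, so the sed pattern has to match `SELINUX=` exactly. As a small sketch on a scratch file (the sample contents are an assumption modeled on a default CentOS config), this shows that only the `SELINUX=` line changes and `SELINUXTYPE=` is left alone:

```shell
conf=$(mktemp)
cat > "$conf" << 'EOF'
# This file controls the state of SELinux on the system.
SELINUX=enforcing
SELINUXTYPE=targeted
EOF

# Same substitution as the real command, applied to the scratch file.
sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$conf"
grep '^SELINUX' "$conf"
```

The change in the real file only takes effect after a reboot.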

Permanently disable the swap partition (all machines)

sudo sed -ri 's/.*swap.*/#&/' /etc/fstab
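Running that sed command a second time would prepend another `#` to the swap line. A possible variant, sketched here on a scratch file with made-up fstab entries, skips lines that are already comments so that repeated runs are harmless; `comment_out_swap` is a hypothetical name.

```shell
fstab=$(mktemp)
cat > "$fstab" << 'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

# Comment out swap entries, ignoring lines that already start with '#'.
comment_out_swap() {
    sed -ri '/^[[:space:]]*#/!s/.*swap.*/#&/' "$1"
}

comment_out_swap "$fstab"
comment_out_swap "$fstab"   # second run leaves the file unchanged
cat "$fstab"
```

Editing fstab only affects the next boot; `sudo swapoff -a` turns swap off in the running system.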

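Permanently set the hostname (master, node1, or node2, depending on the machine)

The three machines are named master, node1, and node2. For example, on the master:

sudo hostnamectl set-hostname master

Verify the change with the hostnamectl or hostname command.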

Add entries to the hosts file (master only)

sudo tee -a /etc/hosts << EOF
192.168.203.11 master
192.168.203.12 node1
192.168.203.13 node2
EOF
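Appending with a heredoc adds the same three lines again every time it is run. A minimal sketch of an idempotent alternative follows; `add_host_entry` is a hypothetical helper, and the demonstration writes to a scratch file instead of /etc/hosts.

```shell
# Hypothetical helper: append "IP NAME" to a hosts-style file
# only if NAME does not already have an entry.
add_host_entry() {
    file=$1 ip=$2 name=$3
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

hosts=$(mktemp)
printf '127.0.0.1 localhost\n' > "$hosts"

# Two passes, to show that the second one adds nothing.
for pass in 1 2; do
    add_host_entry "$hosts" 192.168.203.11 master
    add_host_entry "$hosts" 192.168.203.12 node1
    add_host_entry "$hosts" 192.168.203.13 node2
done
cat "$hosts"
```

Against the real /etc/hosts the function would have to run in a root shell, since the append is done by the shell itself.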

Pass bridged IPv4 traffic to the iptables chains (all machines)

sudo tee /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
vm.swappiness = 0
EOF

Apply k8s.conf immediately

sudo sysctl --system
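To confirm the settings are active, the values can be read back from /proc/sys. This is a rough sketch rather than part of the original steps; `sysctl_path` is a hypothetical helper. The `net.bridge.*` keys only exist while the br_netfilter kernel module is loaded, so the loop reports a missing key instead of failing.

```shell
# Map a sysctl key such as net.ipv4.ip_forward to its /proc/sys path.
sysctl_path() {
    printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr . /)"
}

for key in net.bridge.bridge-nf-call-ip6tables \
           net.bridge.bridge-nf-call-iptables \
           net.ipv4.ip_forward \
           vm.swappiness; do
    path=$(sysctl_path "$key")
    if [ -r "$path" ]; then
        printf '%s = %s\n' "$key" "$(cat "$path")"
    else
        printf '%s is not available (is br_netfilter loaded?)\n' "$key"
    fi
done
```

If the bridge keys are reported as unavailable, `sudo modprobe br_netfilter` followed by `sudo sysctl --system` is the usual remedy.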

Time synchronization (all machines)

sudo yum install -y ntpdate

Once it is installed, run the time synchronization command

sudo ntpdate time.windows.com

Install Docker, kubeadm, kubelet, and kubectl on all machines

Install Docker

Install the required system tools

sudo yum install -y net-tools
sudo yum install -y wget
sudo yum install -y yum-utils device-mapper-persistent-data lvm2

Add the Docker repository and point it at the Aliyun mirror

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
sudo sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

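List the available docker-ce versions

sudo yum list docker-ce.x86_64 --showduplicates | sort -r

Install a specific docker-ce version, replacing [VERSION] with one from the list:

sudo yum -y install docker-ce-[VERSION]

For example:

sudo yum -y install docker-ce-18.06.1.ce-3.el7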

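Start the Docker service

sudo systemctl enable docker && sudo systemctl start docker

Check that Docker started successfully [note: the Docker client and server versions should match, otherwise errors can occur in some situations; sudo docker version prints both]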

sudo docker --version

Create /etc/docker/daemon.json and set the Docker registry mirror to Aliyun

sudo tee /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]
}
EOF

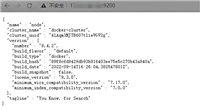

Restart Docker and check that the configuration took effect

sudo systemctl restart docker
sudo docker info

Reboot

sudo reboot

Add the Aliyun kubernetes.repo yum repository

sudo tee /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Install kubelet, kubeadm, and kubectl

sudo yum install -y kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0

Enable kubelet at boot and start it

sudo systemctl enable kubelet && sudo systemctl start kubelet

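Deploy Kubernetes

apiserver-advertise-address is the IP address of the master host

image-repository is the image registry to pull from

kubernetes-version is the Kubernetes version; it must match the kubelet, kubeadm, and kubectl versions installed above

service-cidr is the cluster-internal virtual network used for Service IPs, the unified entry point for reaching pods

pod-network-cidr is the pod network; it must match the value in the CNI network plugin YAML deployed below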
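The Service range, the pod range, and the LAN the machines sit on should not overlap. A quick way to check candidate values is sketched below in plain shell arithmetic; the function names are hypothetical, and 192.168.203.0/24 is inferred from the IPADDR and PREFIX values used earlier.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS; IFS=.
    set -- $1
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print "start end" (as integers) for a CIDR block such as 10.96.0.0/12.
cidr_range() {
    base=$(ip_to_int "${1%/*}")
    bits=${1#*/}
    size=$(( 1 << (32 - bits) ))
    start=$(( base / size * size ))   # align to the block boundary
    echo "$start $(( start + size - 1 ))"
}

# Exit status 0 when the two CIDR blocks share at least one address.
cidr_overlap() {
    set -- $(cidr_range "$1") $(cidr_range "$2")
    [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

for pair in "10.96.0.0/12 10.244.0.0/16" \
            "10.96.0.0/12 192.168.203.0/24" \
            "10.244.0.0/16 192.168.203.0/24"; do
    set -- $pair
    if cidr_overlap "$1" "$2"; then
        echo "$1 overlaps $2"
    else
        echo "$1 and $2 are disjoint"
    fi
done
```

With the values used in this article all three pairs come out disjoint, since 10.96.0.0/12 ends at 10.111.255.255.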

Initialize Kubernetes [run on master only; this can take a while, so wait patiently for the command-line output]

--v=5 is optional but recommended: it prints the full log, which makes troubleshooting easier

kubeadm init \
  --v=5 \
  --apiserver-advertise-address=192.168.203.11 \
  --image-repository=registry.aliyuncs.com/google_containers \
  --kubernetes-version=v1.18.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

Output like the following means initialization succeeded:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.203.11:6443 --token 51c0rb.ehwwxemgec75r1g6 \
    --discovery-token-ca-cert-hash sha256:fad429370f462b36d2651e3e37be4d4b34e63d0378966a1532442dc3f67e41b4

Following the prompt above, run the commands listed under "To start using your cluster, you need to run the following as a regular user:"

Run them on the master node only, not on the worker nodes

Then use kubectl get nodes to view the node information

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
kubectl get nodes

On the worker nodes, run the command listed under "Then you can join any number of worker nodes by running the following on each as root:"

Run it on the worker nodes only, not on the master node

kubeadm join 192.168.203.11:6443 --token 51c0rb.ehwwxemgec75r1g6 \
    --discovery-token-ca-cert-hash sha256:fad429370f462b36d2651e3e37be4d4b34e63d0378966a1532442dc3f67e41b4

Run the command on node1 and node2
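The join command is easy to lose in a long `--v=5` log. One option, sketched here under the assumption that the `kubeadm init` output was saved to a file (for instance with `| tee`), is to pull the command back out as a single line; `extract_join_command` and the scratch log are hypothetical.

```shell
# Hypothetical helper: print the "kubeadm join ..." command from a saved
# kubeadm init log, joining backslash-continued lines into one line.
extract_join_command() {
    awk '
        /^[ \t]*kubeadm join/ { capture = 1 }
        capture {
            line = $0
            sub(/^[ \t]+/, "", line)           # drop leading indentation
            cont = sub(/[ \t]*\\$/, "", line)  # strip a trailing backslash
            printf "%s%s", sep, line
            sep = " "
            if (!cont) { print ""; exit }
        }
    ' "$1"
}

# Scratch log containing the tail of the output shown above.
log=$(mktemp)
cat > "$log" << 'EOF'
Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.203.11:6443 --token 51c0rb.ehwwxemgec75r1g6 \
    --discovery-token-ca-cert-hash sha256:fad429370f462b36d2651e3e37be4d4b34e63d0378966a1532442dc3f67e41b4
EOF

extract_join_command "$log"
```

Bootstrap tokens expire after 24 hours by default; once that happens, `kubeadm token create --print-join-command` on the master prints a fresh join command.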

Install the CNI plugin

Contents of the kube-flannel-ds-amd.yml file:

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'

---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch

---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system

---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.13.0-rc2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.13.0-rc2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-arm64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-arm64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-arm
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-arm
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-ppc64le
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-ppc64le
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-s390x
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay-mirror.qiniu.com/coreos/flannel:v0.11.0-s390x
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
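Before applying the manifest, it may be worth confirming that the `Network` value in net-conf.json equals the `--pod-network-cidr` passed to `kubeadm init`. A minimal sketch follows; `manifest_network` is a hypothetical helper, and the scratch file stands in for kube-flannel-ds-amd.yml.

```shell
# Hypothetical helper: print the first "Network" value found in a flannel manifest.
manifest_network() {
    sed -n 's/.*"Network":[ ]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Scratch file holding only the net-conf.json fragment, for demonstration.
manifest=$(mktemp)
cat > "$manifest" << 'EOF'
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
EOF

pod_cidr=10.244.0.0/16   # the value given to --pod-network-cidr
if [ "$(manifest_network "$manifest")" = "$pod_cidr" ]; then
    echo "manifest matches --pod-network-cidr"
else
    echo "mismatch: manifest has $(manifest_network "$manifest")" >&2
fi
```

Pointing the helper at the real manifest file instead of the scratch file gives the same check against what will actually be applied.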

docker pull quay.io/coreos/flannel:v0.13.0-rc2
kubectl apply -f kube-flannel-ds-amd.yml

Run kubectl get pod -n kube-system and check whether the kube-flannel-ds-xxx pods are in the Running state

systemctl restart kubelet
kubectl get pod -n kube-system

Run on master

kubectl get node

node1 and node2 are in the Ready state

[root@master ~]# kubectl get node
NAME     STATUS   ROLES    AGE   VERSION
master   Ready    master   50m   v1.18.0
node1    Ready    <none>   49m   v1.18.0
node2    Ready    <none>   49m   v1.18.0
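When this check is repeated while waiting for nodes to come up, it can help to filter the table down to the nodes that are not yet Ready. The sketch below assumes the default column layout of `kubectl get node`; `not_ready_nodes` is a hypothetical name, and the input is the output shown above.

```shell
# Print the names of nodes whose STATUS column is not exactly "Ready".
# Reads `kubectl get node` output on stdin and skips the header row.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Demonstration with the output above: prints nothing, since all nodes are Ready.
not_ready_nodes << 'EOF'
NAME     STATUS   ROLES    AGE   VERSION
master   Ready    master   50m   v1.18.0
node1    Ready    <none>   49m   v1.18.0
node2    Ready    <none>   49m   v1.18.0
EOF

# On a live cluster:
# kubectl get node | not_ready_nodes
```

A cordoned node shows a combined status such as Ready,SchedulingDisabled and would be listed too, which is usually what one wants to notice.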

Deploy the CNI network plugin on master [if --network-plugin=cni was not removed and kubelet restarted beforehand, this step is very likely to fail]

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
kubectl get pods -n kube-system
kubectl get node

Test the Kubernetes (k8s) cluster from master

kubectl create deployment nginx --image=nginx
kubectl expose deployment nginx --port=80 --type=NodePort
kubectl get pod,svc

The output looks like this:

NAME                 TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
service/kubernetes   ClusterIP   10.96.0.1      <none>        443/TCP        21m
service/nginx        NodePort    10.108.8.133   <none>        80:30008/TCP   111s
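The number after the colon in PORT(S) is the NodePort, which is reachable on any node's IP. As a small sketch, the URL to test can be derived from that column; `node_port` is a hypothetical helper, and 30008 is simply the value from the output above and will differ per cluster.

```shell
# Extract the node port from a PORT(S) value such as "80:30008/TCP".
node_port() {
    port=${1#*:}         # drop the service port and the colon
    echo "${port%%/*}"   # drop the protocol suffix
}

url="http://192.168.203.11:$(node_port 80:30008/TCP)"
echo "$url"

# From a machine that can reach the node:
# curl -sI "$url"
# The port can also be read directly from the cluster:
# kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'
```

If the request returns the nginx welcome page, pod networking and the Service are both working.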

If nginx fails to start, delete the service

kubectl delete service nginx
