Using Whisper

Repository: https://gitcode.com/openai/whisper/overview

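1. Direct call: speech recognition

The transcribe() method reads the entire file and processes the audio with a sliding 30-second window, making autoregressive sequence-to-sequence predictions on each window.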
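As a rough illustration of the 30-second window, the sketch below splits a clip into fixed windows. It is a simplification: the transcribe source quoted later in this post moves the window to the last predicted timestamp rather than always stepping a full 30 seconds. The name fixed_windows is made up for this example and is not part of Whisper.

```python
SAMPLE_RATE = 16_000   # Whisper works on 16 kHz audio
WINDOW_SECONDS = 30    # length of one processing window


def fixed_windows(num_samples: int):
    """Yield (start, end) sample indices of consecutive 30-second windows."""
    window = SAMPLE_RATE * WINDOW_SECONDS
    for start in range(0, num_samples, window):
        # The last window may be shorter than 30 seconds.
        yield start, min(start + window, num_samples)


# A 70-second clip is covered by two full windows and a 10-second remainder.
windows = list(fixed_windows(70 * SAMPLE_RATE))
for start, end in windows:
    print(f"{start / SAMPLE_RATE:5.1f}s -> {end / SAMPLE_RATE:5.1f}s")
```

In the real function the short final window is padded with silence before it is passed to the model.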

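Example 1 from the official README

import whisper

model = whisper.load_model("base")  # load the model
result = model.transcribe("audio.mp3")  # transcribe the audio file at the given path
print(result["text"])  # print the recognized text

The load_model function is defined in __init__.py. The full result returned by transcribe() is a dictionary such as: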

{'text': ' 你一定會笑著說 二百克芝麻能力好耐架', 'segments': [{'id': 0, 'seek': 0, 'start': 0.0, 'end': 2.0, 'text': ' 你一定會笑著說', 'tokens': [50365, 10930, 24272, 6236, 11600, 19382, 4622, 50465], 'temperature': 0.0, 'avg_logprob': -0.5130815124511718, 'compression_ratio': 0.8253968253968254, 'no_speech_prob': 0.12529681622982025}, {'id': 1, 'seek': 0, 'start': 2.0, 'end': 5.5, 'text': ' 二百克芝麻能力好耐架', 'tokens': [50465, 220, 11217, 31906, 24881, 13778, 251, 38999, 8225, 13486, 2131, 4450, 238, 7360, 114, 50640], 'temperature': 0.0, 'avg_logprob': -0.5130815124511718, 'compression_ratio': 0.8253968253968254, 'no_speech_prob': 0.12529681622982025}], 'language': 'yue'}
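The result dictionary can be walked segment by segment. The sketch below uses a trimmed copy of the sample output above, so it runs without a model; format_segments is a hypothetical helper name, not a Whisper function.

```python
# Trimmed copy of the sample output above; only the fields used here are kept.
result = {
    "text": " 你一定會笑著說 二百克芝麻能力好耐架",
    "segments": [
        {"id": 0, "start": 0.0, "end": 2.0, "text": " 你一定會笑著說"},
        {"id": 1, "start": 2.0, "end": 5.5, "text": " 二百克芝麻能力好耐架"},
    ],
    "language": "yue",
}


def format_segments(result: dict) -> list:
    """Return one '[start -> end] text' line per segment."""
    lines = []
    for seg in result["segments"]:
        text = seg["text"].strip()  # segment text starts with a space
        lines.append(f"[{seg['start']:.1f}s -> {seg['end']:.1f}s] {text}")
    return lines


lines = format_segments(result)
print("language:", result["language"])
for line in lines:
    print(line)
```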

2. Language identification: whisper.detect_language() and whisper.decode()

The following example uses whisper.detect_language() and whisper.decode(), which provide lower-level access to the model, that is, calls closer to the model internals.

Example 2 from the official README

import whisper

model = whisper.load_model("base")  # load the pretrained speech recognition model named "base"

# load audio and pad/trim it to fit the 30-second window
audio = whisper.load_audio("audio.mp3")
audio = whisper.pad_or_trim(audio)

# make the log-Mel spectrogram and move it to the same device as the model (e.g. a GPU)
mel = whisper.log_mel_spectrogram(audio).to(model.device)

# detect the spoken language; probs maps each language code to its probability
_, probs = model.detect_language(mel)

# print the language with the highest probability
print(f"Detected language: {max(probs, key=probs.get)}")

# set the decoding options (task, language, temperature, beam size, and so on)
options = whisper.DecodingOptions()

# decode the audio to produce the recognition result
result = whisper.decode(model, mel, options)

# print the recognized text
print(result.text)
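Two steps of the example above can be tried without loading a model: fitting audio to 30 seconds, and picking the most probable language. This is a minimal sketch on plain Python lists. The helper names pad_or_trim_list and most_probable are invented here, and the probabilities are made up for illustration.

```python
N_SAMPLES = 30 * 16_000  # 30 seconds at 16 kHz, i.e. 480000 samples


def pad_or_trim_list(samples: list, length: int = N_SAMPLES) -> list:
    """Cut to `length` samples, or right-pad with zeros (silence)."""
    if len(samples) >= length:
        return samples[:length]
    return samples + [0.0] * (length - len(samples))


def most_probable(probs: dict) -> str:
    """Return the key with the highest probability."""
    return max(probs, key=probs.get)


short = pad_or_trim_list([0.1] * 16_000)           # 1 second, gets padded
long = pad_or_trim_list([0.1] * (40 * 16_000))     # 40 seconds, gets trimmed

# Made-up probabilities in the shape returned by model.detect_language().
probs = {"en": 0.05, "zh": 0.25, "yue": 0.70}
print(len(short), len(long), most_probable(probs))
```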

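3. Speech recognition with a specified language

from whisper import load_model
from whisper.transcribe import transcribe

# model_path, device, and audio_path are placeholders to be set by the caller
model = load_model(model_path, device=device)

# pass the model, the audio path, and the language to recognize ("yue" is Cantonese)
result = transcribe(model, audio_path, language="yue")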
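How the language argument is resolved can be sketched without a model. The logic follows the language branch of the transcribe source quoted in this post; resolve_language and fake_detect are hypothetical names, with fake_detect standing in for model.detect_language.

```python
from typing import Callable, Dict, Optional


def resolve_language(
    language: Optional[str],
    is_multilingual: bool,
    detect: Callable[[], Dict[str, float]],
) -> str:
    """Pick the language used for decoding."""
    if language is not None:
        return language      # explicit choice, detection is skipped
    if not is_multilingual:
        return "en"          # English-only models always use "en"
    probs = detect()         # otherwise detect from the first 30 seconds
    return max(probs, key=probs.get)


def fake_detect() -> Dict[str, float]:
    # Hypothetical detector that mistakes Cantonese for Mandarin.
    return {"zh": 0.6, "yue": 0.4}


print(resolve_language("yue", True, fake_detect))
print(resolve_language(None, True, fake_detect))
print(resolve_language(None, False, fake_detect))
```

Passing language="yue" avoids depending on the detector, which is why this section specifies it explicitly.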

The transcribe function in the Whisper source code

def transcribe(
    model: "Whisper",
    audio: Union[str, np.ndarray, torch.Tensor],
    *,
    verbose: Optional[bool] = None,
    temperature: Union[float, Tuple[float, ...]] = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0),
    compression_ratio_threshold: Optional[float] = 2.4,
    logprob_threshold: Optional[float] = -1.0,
    no_speech_threshold: Optional[float] = 0.6,
    condition_on_previous_text: bool = True,
    initial_prompt: Optional[str] = None,
    word_timestamps: bool = False,
    prepend_punctuations: str = "\"'“¿([{-",
    append_punctuations: str = "\"'.。,,!!??::”)]}、",
    clip_timestamps: Union[str, List[float]] = "0",
    hallucination_silence_threshold: Optional[float] = None,
    **decode_options,
):
    """
    Transcribe an audio file using Whisper

    Parameters
    ----------
    model: Whisper
        The Whisper model instance

    audio: Union[str, np.ndarray, torch.Tensor]
        The path to the audio file to open, or the audio waveform

    verbose: bool
        Whether to display the text being decoded to the console. If True, displays all the details,
        If False, displays minimal details. If None, does not display anything

    temperature: Union[float, Tuple[float, ...]]
        Temperature for sampling. It can be a tuple of temperatures, which will be successively used
        upon failures according to either `compression_ratio_threshold` or `logprob_threshold`.

    compression_ratio_threshold: float
        If the gzip compression ratio is above this value, treat as failed

    logprob_threshold: float
        If the average log probability over sampled tokens is below this value, treat as failed

    no_speech_threshold: float
        If the no_speech probability is higher than this value AND the average log probability
        over sampled tokens is below `logprob_threshold`, consider the segment as silent

    condition_on_previous_text: bool
        if True, the previous output of the model is provided as a prompt for the next window;
        disabling may make the text inconsistent across windows, but the model becomes less prone to
        getting stuck in a failure loop, such as repetition looping or timestamps going out of sync.

    word_timestamps: bool
        Extract word-level timestamps using the cross-attention pattern and dynamic time warping,
        and include the timestamps for each word in each segment.

    prepend_punctuations: str
        If word_timestamps is True, merge these punctuation symbols with the next word

    append_punctuations: str
        If word_timestamps is True, merge these punctuation symbols with the previous word

    initial_prompt: Optional[str]
        Optional text to provide as a prompt for the first window. This can be used to provide, or
        "prompt-engineer" a context for transcription, e.g. custom vocabularies or proper nouns
        to make it more likely to predict those word correctly.

    decode_options: dict
        Keyword arguments to construct `DecodingOptions` instances

    clip_timestamps: Union[str, List[float]]
        Comma-separated list start,end,start,end,... timestamps (in seconds) of clips to process.
        The last end timestamp defaults to the end of the file.

    hallucination_silence_threshold: Optional[float]
        When word_timestamps is True, skip silent periods longer than this threshold (in seconds)
        when a possible hallucination is detected

    Returns
    -------
    A dictionary containing the resulting text ("text") and segment-level details ("segments"), and
    the spoken language ("language"), which is detected when `decode_options["language"]` is None.
    """
    dtype = torch.float16 if decode_options.get("fp16", True) else torch.float32
    if model.device == torch.device("cpu"):
        if torch.cuda.is_available():
            warnings.warn("Performing inference on CPU when CUDA is available")
        if dtype == torch.float16:
            warnings.warn("FP16 is not supported on CPU; using FP32 instead")
            dtype = torch.float32

    if dtype == torch.float32:
        decode_options["fp16"] = False

    # Pad 30-seconds of silence to the input audio, for slicing
    mel = log_mel_spectrogram(audio, model.dims.n_mels, padding=N_SAMPLES)
    content_frames = mel.shape[-1] - N_FRAMES
    content_duration = float(content_frames * HOP_LENGTH / SAMPLE_RATE)

    if decode_options.get("language", None) is None:
        if not model.is_multilingual:
            decode_options["language"] = "en"
        else:
            if verbose:
                print(
                    "Detecting language using up to the first 30 seconds. Use `--language` to specify the language"
                )
            mel_segment = pad_or_trim(mel, N_FRAMES).to(model.device).to(dtype)
            _, probs = model.detect_language(mel_segment)
            decode_options["language"] = max(probs, key=probs.get)
            if verbose is not None:
                print(
                    f"Detected language: {LANGUAGES[decode_options['language']].title()}"
                )

    language: str = decode_options["language"]
    task: str = decode_options.get("task", "transcribe")
    tokenizer = get_tokenizer(
        model.is_multilingual,
        num_languages=model.num_languages,
        language=language,
        task=task,
    )

    if isinstance(clip_timestamps, str):
        clip_timestamps = [
            float(ts) for ts in (clip_timestamps.split(",") if clip_timestamps else [])
        ]
    seek_points: List[int] = [round(ts * FRAMES_PER_SECOND) for ts in clip_timestamps]
    if len(seek_points) == 0:
        seek_points.append(0)
    if len(seek_points) % 2 == 1:
        seek_points.append(content_frames)
    seek_clips: List[Tuple[int, int]] = list(zip(seek_points[::2], seek_points[1::2]))

    punctuation = "\"'“¿([{-\"'.。,,!!??::”)]}、"

    if word_timestamps and task == "translate":
        warnings.warn("Word-level timestamps on translations may not be reliable.")

    def decode_with_fallback(segment: torch.Tensor) -> DecodingResult:
        temperatures = (
            [temperature] if isinstance(temperature, (int, float)) else temperature
        )
        decode_result = None

        for t in temperatures:
            kwargs = {**decode_options}
            if t > 0:
                # disable beam_size and patience when t > 0
                kwargs.pop("beam_size", None)
                kwargs.pop("patience", None)
            else:
                # disable best_of when t == 0
                kwargs.pop("best_of", None)

            options = DecodingOptions(**kwargs, temperature=t)
            decode_result = model.decode(segment, options)

            needs_fallback = False
            if (
                compression_ratio_threshold is not None
                and decode_result.compression_ratio > compression_ratio_threshold
            ):
                needs_fallback = True  # too repetitive
            if (
                logprob_threshold is not None
                and decode_result.avg_logprob < logprob_threshold
            ):
                needs_fallback = True  # average log probability is too low
            if (
                no_speech_threshold is not None
                and decode_result.no_speech_prob > no_speech_threshold
            ):
                needs_fallback = False  # silence
            if not needs_fallback:
                break

        return decode_result

    clip_idx = 0
    seek = seek_clips[clip_idx][0]
    input_stride = exact_div(
        N_FRAMES, model.dims.n_audio_ctx
    )  # mel frames per output token: 2
    time_precision = (
        input_stride * HOP_LENGTH / SAMPLE_RATE
    )  # time per output token: 0.02 (seconds)
    all_tokens = []
    all_segments = []
    prompt_reset_since = 0

    if initial_prompt is not None:
        initial_prompt_tokens = tokenizer.encode(" " + initial_prompt.strip())
        all_tokens.extend(initial_prompt_tokens)
    else:
        initial_prompt_tokens = []

    def new_segment(
        *, start: float, end: float, tokens: torch.Tensor, result: DecodingResult
    ):
        tokens = tokens.tolist()
        text_tokens = [token for token in tokens if token < tokenizer.eot]
        return {
            "seek": seek,
            "start": start,
            "end": end,
            "text": tokenizer.decode(text_tokens),
            "tokens": tokens,
            "temperature": result.temperature,
            "avg_logprob": result.avg_logprob,
            "compression_ratio": result.compression_ratio,
            "no_speech_prob": result.no_speech_prob,
        }

    # show the progress bar when verbose is False (if True, transcribed text will be printed)
    with tqdm.tqdm(
        total=content_frames, unit="frames", disable=verbose is not False
    ) as pbar:
        last_speech_timestamp = 0.0
        # NOTE: This loop is obscurely flattened to make the diff readable.
        # A later commit should turn this into a simpler nested loop.
        # for seek_clip_start, seek_clip_end in seek_clips:
        #     while seek < seek_clip_end
        while clip_idx < len(seek_clips):
            seek_clip_start, seek_clip_end = seek_clips[clip_idx]
            if seek < seek_clip_start:
                seek = seek_clip_start
            if seek >= seek_clip_end:
                clip_idx += 1
                if clip_idx < len(seek_clips):
                    seek = seek_clips[clip_idx][0]
                continue
            time_offset = float(seek * HOP_LENGTH / SAMPLE_RATE)
            window_end_time = float((seek + N_FRAMES) * HOP_LENGTH / SAMPLE_RATE)
            segment_size = min(N_FRAMES, content_frames - seek, seek_clip_end - seek)
            mel_segment = mel[:, seek : seek + segment_size]
            segment_duration = segment_size * HOP_LENGTH / SAMPLE_RATE
            mel_segment = pad_or_trim(mel_segment, N_FRAMES).to(model.device).to(dtype)

            decode_options["prompt"] = all_tokens[prompt_reset_since:]
            result: DecodingResult = decode_with_fallback(mel_segment)
            tokens = torch.tensor(result.tokens)

            if no_speech_threshold is not None:
                # no voice activity check
                should_skip = result.no_speech_prob > no_speech_threshold
                if (
                    logprob_threshold is not None
                    and result.avg_logprob > logprob_threshold
                ):
                    # don't skip if the logprob is high enough, despite the no_speech_prob
                    should_skip = False

                if should_skip:
                    seek += segment_size  # fast-forward to the next segment boundary
                    continue

            previous_seek = seek
            current_segments = []

            # anomalous words are very long/short/improbable
            def word_anomaly_score(word: dict) -> float:
                probability = word.get("probability", 0.0)
                duration = word["end"] - word["start"]
                score = 0.0
                if probability < 0.15:
                    score += 1.0
                if duration < 0.133:
                    score += (0.133 - duration) * 15
                if duration > 2.0:
                    score += duration - 2.0
                return score

            def is_segment_anomaly(segment: Optional[dict]) -> bool:
                if segment is None or not segment["words"]:
                    return False
                words = [w for w in segment["words"] if w["word"] not in punctuation]
                words = words[:8]
                score = sum(word_anomaly_score(w) for w in words)
                return score >= 3 or score + 0.01 >= len(words)

            def next_words_segment(segments: List[dict]) -> Optional[dict]:
                return next((s for s in segments if s["words"]), None)

            timestamp_tokens: torch.Tensor = tokens.ge(tokenizer.timestamp_begin)
            single_timestamp_ending = timestamp_tokens[-2:].tolist() == [False, True]

            consecutive = torch.where(timestamp_tokens[:-1] & timestamp_tokens[1:])[0]
            consecutive.add_(1)
            if len(consecutive) > 0:
                # if the output contains two consecutive timestamp tokens
                slices = consecutive.tolist()
                if single_timestamp_ending:
                    slices.append(len(tokens))

                last_slice = 0
                for current_slice in slices:
                    sliced_tokens = tokens[last_slice:current_slice]
                    start_timestamp_pos = (
                        sliced_tokens[0].item() - tokenizer.timestamp_begin
                    )
                    end_timestamp_pos = (
                        sliced_tokens[-1].item() - tokenizer.timestamp_begin
                    )
                    current_segments.append(
                        new_segment(
                            start=time_offset + start_timestamp_pos * time_precision,
                            end=time_offset + end_timestamp_pos * time_precision,
                            tokens=sliced_tokens,
                            result=result,
                        )
                    )
                    last_slice = current_slice

                if single_timestamp_ending:
                    # single timestamp at the end means no speech after the last timestamp.
                    seek += segment_size
                else:
                    # otherwise, ignore the unfinished segment and seek to the last timestamp
                    last_timestamp_pos = (
                        tokens[last_slice - 1].item() - tokenizer.timestamp_begin
                    )
                    seek += last_timestamp_pos * input_stride
            else:
                duration = segment_duration
                timestamps = tokens[timestamp_tokens.nonzero().flatten()]
                if (
                    len(timestamps) > 0
                    and timestamps[-1].item() != tokenizer.timestamp_begin
                ):
                    # no consecutive timestamps but it has a timestamp; use the last one.
                    last_timestamp_pos = (
                        timestamps[-1].item() - tokenizer.timestamp_begin
                    )
                    duration = last_timestamp_pos * time_precision

                current_segments.append(
                    new_segment(
                        start=time_offset,
                        end=time_offset + duration,
                        tokens=tokens,
                        result=result,
                    )
                )
                seek += segment_size

            if word_timestamps:
                add_word_timestamps(
                    segments=current_segments,
                    model=model,
                    tokenizer=tokenizer,
                    mel=mel_segment,
                    num_frames=segment_size,
                    prepend_punctuations=prepend_punctuations,
                    append_punctuations=append_punctuations,
                    last_speech_timestamp=last_speech_timestamp,
                )

                if not single_timestamp_ending:
                    last_word_end = get_end(current_segments)
                    if last_word_end is not None and last_word_end > time_offset:
                        seek = round(last_word_end * FRAMES_PER_SECOND)

                # skip silence before possible hallucinations
                if hallucination_silence_threshold is not None:
                    threshold = hallucination_silence_threshold
                    if not single_timestamp_ending:
                        last_word_end = get_end(current_segments)
                        if last_word_end is not None and last_word_end > time_offset:
                            remaining_duration = window_end_time - last_word_end
                            if remaining_duration > threshold:
                                seek = round(last_word_end * FRAMES_PER_SECOND)
                            else:
                                seek = previous_seek + segment_size

                    # if first segment might be a hallucination, skip leading silence
                    first_segment = next_words_segment(current_segments)
                    if first_segment is not None and is_segment_anomaly(first_segment):
                        gap = first_segment["start"] - time_offset
                        if gap > threshold:
                            seek = previous_seek + round(gap * FRAMES_PER_SECOND)
                            continue

                    # skip silence before any possible hallucination that is surrounded
                    # by silence or more hallucinations
                    hal_last_end = last_speech_timestamp
                    for si in range(len(current_segments)):
                        segment = current_segments[si]
                        if not segment["words"]:
                            continue
                        if is_segment_anomaly(segment):
                            next_segment = next_words_segment(
                                current_segments[si + 1 :]
                            )
                            if next_segment is not None:
                                hal_next_start = next_segment["words"][0]["start"]
                            else:
                                hal_next_start = time_offset + segment_duration
                            silence_before = (
                                segment["start"] - hal_last_end > threshold
                                or segment["start"] < threshold
                                or segment["start"] - time_offset < 2.0
                            )
                            silence_after = (
                                hal_next_start - segment["end"] > threshold
                                or is_segment_anomaly(next_segment)
                                or window_end_time - segment["end"] < 2.0
                            )
                            if silence_before and silence_after:
                                seek = round(
                                    max(time_offset + 1, segment["start"])
                                    * FRAMES_PER_SECOND
                                )
                                if content_duration - segment["end"] < threshold:
                                    seek = content_frames
                                current_segments[si:] = []
                                break
                        hal_last_end = segment["end"]

                last_word_end = get_end(current_segments)
                if last_word_end is not None:
                    last_speech_timestamp = last_word_end

            if verbose:
                for segment in current_segments:
                    start, end, text = segment["start"], segment["end"], segment["text"]
                    line = f"[{format_timestamp(start)} --> {format_timestamp(end)}] {text}"
                    print(make_safe(line))

            # if a segment is instantaneous or does not contain text, clear it
            for i, segment in enumerate(current_segments):
                if segment["start"] == segment["end"] or segment["text"].strip() == "":
                    segment["text"] = ""
                    segment["tokens"] = []
                    segment["words"] = []

            all_segments.extend(
                [
                    {"id": i, **segment}
                    for i, segment in enumerate(
                        current_segments, start=len(all_segments)
                    )
                ]
            )
            all_tokens.extend(
                [token for segment in current_segments for token in segment["tokens"]]
            )

            if not condition_on_previous_text or result.temperature > 0.5:
                # do not feed the prompt tokens if a high temperature was used
                prompt_reset_since = len(all_tokens)

            # update progress bar
            pbar.update(min(content_frames, seek) - previous_seek)

    return dict(
        text=tokenizer.decode(all_tokens[len(initial_prompt_tokens) :]),
        segments=all_segments,
        language=language,
    )
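The temperature fallback in decode_with_fallback is easier to follow in isolation. The sketch below reproduces the same three checks against a fake decoder, so it runs without torch or a model. FakeResult and fake_decode are hypothetical stand-ins for DecodingResult and model.decode, and the numbers they return are made up.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass
class FakeResult:  # hypothetical stand-in for whisper's DecodingResult
    temperature: float
    avg_logprob: float
    compression_ratio: float
    no_speech_prob: float


def decode_with_fallback(
    decode: Callable[[float], FakeResult],
    temperatures: Sequence[float] = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0),
    compression_ratio_threshold: Optional[float] = 2.4,
    logprob_threshold: Optional[float] = -1.0,
    no_speech_threshold: Optional[float] = 0.6,
) -> FakeResult:
    """Retry at rising temperatures until the result passes the checks."""
    result = None
    for t in temperatures:
        result = decode(t)
        needs_fallback = False
        if (
            compression_ratio_threshold is not None
            and result.compression_ratio > compression_ratio_threshold
        ):
            needs_fallback = True   # output is too repetitive
        if logprob_threshold is not None and result.avg_logprob < logprob_threshold:
            needs_fallback = True   # model is not confident enough
        if no_speech_threshold is not None and result.no_speech_prob > no_speech_threshold:
            needs_fallback = False  # probably silence, retrying will not help
        if not needs_fallback:
            break
    return result


def fake_decode(t: float) -> FakeResult:
    # Pretend the output stays repetitive until the temperature reaches 0.4.
    ratio = 3.0 if t < 0.4 else 1.5
    return FakeResult(t, avg_logprob=-0.5, compression_ratio=ratio, no_speech_prob=0.1)


chosen = decode_with_fallback(fake_decode)
print(chosen.temperature)
```

If every temperature fails the checks, the result from the last temperature is returned as is.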

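Function walkthrough

1. transcribe.py

Kimi Chat:

This code is a Python script that uses the Whisper model for audio transcription. Whisper is a large speech recognition model developed by OpenAI that handles multiple languages and tasks. The main features and components of the code are explained below by line range:

Lines 1-7: import the required libraries and modules: argparse (command-line argument parsing), os (operating system interaction), traceback (error tracing), warnings (warning messages), numpy (scientific computing), torch (the PyTorch deep learning library), and tqdm (progress bars).

Lines 9-14: bring in constants and helper functions such as FRAMES_PER_SECOND (frames per second), HOP_LENGTH (hop length between frames), N_FRAMES (number of frames in each audio segment), N_SAMPLES (number of audio samples in a segment), and SAMPLE_RATE (sampling rate). In the Whisper source these are imported from audio.py rather than defined in transcribe.py.

Lines 16-25: cover log_mel_spectrogram, which converts the audio signal into a log-Mel spectrogram (not Mel-frequency cepstral coefficients), a feature representation commonly used in speech recognition. The function is defined in audio.py and imported here.

Lines 27-36: cover pad_or_trim, which pads or trims the audio so that it matches the input length the model requires. It is also imported from audio.py.

Lines 38-55: define the transcribe function, the core of the script. It loads the audio, preprocesses it, runs the Whisper model, and returns the transcription. It accepts parameters such as verbose (whether to print details), temperature (sampling temperature), and compression_ratio_threshold (compression ratio threshold), which affect the transcription process and its result.

Lines 57-82: inside transcribe, the device and data type are checked first (whether to use half-precision floats), then the audio is preprocessed: the language is detected, the tokenizer is loaded, and the clip timestamps are parsed.

Lines 84-131: implement a loop that processes each clip of the audio file, transcribes it, and updates the progress bar.

Lines 133-174: still inside transcribe, the results are post-processed: word timestamps are added, and possible hallucinations and silent segments are handled.

Lines 176-198: define the cli function, which parses command-line arguments with argparse and calls transcribe to process the audio files.

Lines 200-206: inside cli, the Whisper model is loaded according to the user's arguments, and the output format and directory are set.

Lines 208-213: loop over each audio file, call transcribe, and write the result to a file.

Lines 215-218: finally, if the script is run as the main program, it executes cli and starts the command-line interface.

Overall, the script provides a complete command-line interface that lets the user specify audio files, the model, the output format, and other parameters for a transcription job. It relies on the Whisper model and can handle multiple languages and speech recognition tasks.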
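The frame constants and the clip-timestamp parsing mentioned in the walkthrough can be checked with a few lines of arithmetic. The sketch below copies the parsing logic from the transcribe source into a standalone function. The name parse_clips and the 60-second file length are assumptions made for this example.

```python
from typing import List, Tuple, Union

SAMPLE_RATE = 16_000                           # samples per second
HOP_LENGTH = 160                               # samples between mel frames
FRAMES_PER_SECOND = SAMPLE_RATE // HOP_LENGTH  # 100 mel frames per second
N_FRAMES = 30 * FRAMES_PER_SECOND              # 3000 frames in a 30-second window


def parse_clips(
    clip_timestamps: Union[str, List[float]], content_frames: int
) -> List[Tuple[int, int]]:
    """Turn "start,end,start,end,..." seconds into (start, end) frame pairs."""
    if isinstance(clip_timestamps, str):
        clip_timestamps = [
            float(ts) for ts in (clip_timestamps.split(",") if clip_timestamps else [])
        ]
    seek_points = [round(ts * FRAMES_PER_SECOND) for ts in clip_timestamps]
    if len(seek_points) == 0:
        seek_points.append(0)
    if len(seek_points) % 2 == 1:
        # A missing final end defaults to the end of the file.
        seek_points.append(content_frames)
    return list(zip(seek_points[::2], seek_points[1::2]))


content_frames = 60 * FRAMES_PER_SECOND  # a hypothetical 60-second file
print(parse_clips("0", content_frames))
print(parse_clips("5,10,20", content_frames))
```

With the default "0", the whole file becomes a single clip.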

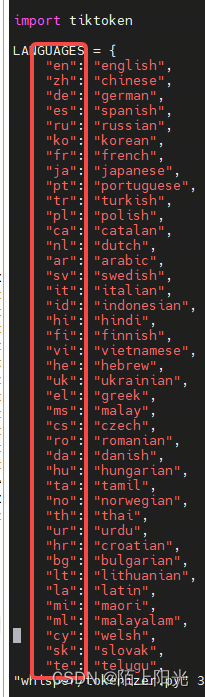

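2. tokenizer.py

Maps language abbreviations (codes such as "yue") to languages, so the language to recognize can be specified by its code.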
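A minimal sketch of such a code lookup follows. The table here is only a small hand-picked subset of the one in tokenizer.py, and normalize_language is a hypothetical helper written for this post, not a Whisper function.

```python
# Small subset of the code-to-name table; the full table is much longer.
LANGUAGES = {
    "en": "english",
    "zh": "chinese",
    "ja": "japanese",
    "yue": "cantonese",
}
# Reverse table so that full names can be given as well.
TO_LANGUAGE_CODE = {name: code for code, name in LANGUAGES.items()}


def normalize_language(language: str) -> str:
    """Return the language code for either a code or a full language name."""
    language = language.lower()
    if language in LANGUAGES:
        return language
    if language in TO_LANGUAGE_CODE:
        return TO_LANGUAGE_CODE[language]
    raise ValueError(f"Unsupported language: {language}")


print(normalize_language("yue"))
print(normalize_language("Cantonese"))
```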

3. audio.py

Audio loading relies on the ffmpeg command-line tool, so ffmpeg must be installed in the runtime environment.

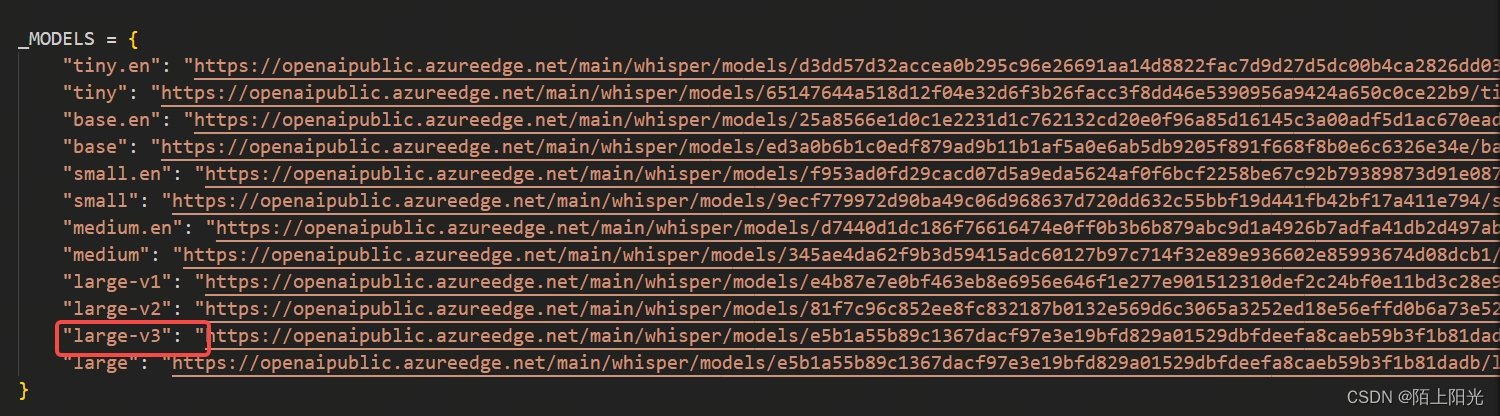
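Before calling Whisper it can help to check that ffmpeg is on the PATH. The sketch below does that with the standard library and builds a decode command that requests mono 16-bit PCM at 16 kHz on standard output. The flag list reflects my reading of audio.py and should be treated as an assumption. The helper names are made up for this example, and the command is only built, not executed.

```python
import shutil


def ffmpeg_available() -> bool:
    """Return True if an ffmpeg executable is found on the PATH."""
    return shutil.which("ffmpeg") is not None


def build_decode_command(path: str, sample_rate: int = 16_000) -> list:
    """Build an ffmpeg command that writes raw mono s16le PCM to stdout."""
    return [
        "ffmpeg", "-nostdin",
        "-threads", "0",
        "-i", path,
        "-f", "s16le",            # raw signed 16-bit little-endian samples
        "-ac", "1",               # downmix to one channel
        "-acodec", "pcm_s16le",
        "-ar", str(sample_rate),  # resample to the target rate
        "-",                      # write to standard output
    ]


cmd = build_decode_command("audio.mp3")
print("ffmpeg found:", ffmpeg_available())
print(" ".join(cmd))
```

If ffmpeg_available() returns False, install ffmpeg with the system package manager before loading audio files.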

4. __init__.py

Selects which model to load. The model can be downloaded to local storage first and then loaded directly by passing its local path.
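The choice between an official model name and a local checkpoint path can be sketched as follows. This is an illustration of the idea only, not the code in __init__.py. KNOWN_MODELS is a hypothetical subset of the official names, and resolve_model_source is an invented helper.

```python
import os
import tempfile

# Hypothetical subset of the official model names.
KNOWN_MODELS = {"tiny", "base", "small", "medium", "large"}


def resolve_model_source(name: str) -> str:
    """Say whether `name` is an official model name or a local checkpoint."""
    if name in KNOWN_MODELS:
        return "download"   # fetched (or reused from cache) by name
    if os.path.isfile(name):
        return "local"      # loaded straight from the given file
    raise RuntimeError(f"Model {name} not found")


# Create an empty placeholder file to play the role of a downloaded checkpoint.
with tempfile.NamedTemporaryFile(suffix=".pt", delete=False) as f:
    checkpoint_path = f.name

by_name = resolve_model_source("base")
by_path = resolve_model_source(checkpoint_path)
os.remove(checkpoint_path)  # clean up the placeholder
print(by_name, by_path)
```

With the real library, the same idea means passing either "base" or the path of a previously downloaded .pt file to load_model.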

grep -H "example" * prints the name of the matching file along with each matching line.
