
1. Kubernetes environment plan

Pod CIDR: 10.0.0.0/16

Service CIDR: 10.255.0.0/16

| Role | IP | Hostname | Components |
| --- | --- | --- | --- |
| Control plane | 10.10.0.10 | master01 | apiserver, controller-manager, scheduler, etcd, docker, keepalived, nginx |
| Control plane | 10.10.0.11 | master02 | apiserver, controller-manager, scheduler, etcd, docker, keepalived, nginx |
| Control plane | 10.10.0.12 | master03 | apiserver, controller-manager, scheduler, etcd, docker, keepalived, nginx |
| Worker | 10.10.0.14 | node01 | kubelet, kube-proxy, docker, calico, coredns |

VIP: 10.10.0.100

2. When to use kubeadm and when to use a binary installation

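kubeadm is the official open-source tool for quickly bootstrapping a Kubernetes cluster, and it is currently the most convenient and recommended approach. The two commands kubeadm init and kubeadm join are enough to create a Kubernetes cluster.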

When kubeadm initializes Kubernetes, the control-plane components all run as pods, so they can recover from failures on their own.

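kubeadm is a tool for building a cluster quickly: in effect, a program that installs the cluster for us, i.e. automated deployment. This simplifies deployment, but the automation hides many details, so you get little insight into the individual modules. If your understanding of the Kubernetes architecture and components is shallow, problems become hard to troubleshoot.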

kubeadm suits scenarios where Kubernetes is deployed often or where a high degree of automation is required.

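Binary installation: download the component binaries from the official releases and install them by hand. Installing manually also gives you a more complete understanding of Kubernetes.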

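Both kubeadm and binary installations are suitable for production and run stably there; choose between them based on an assessment of the actual project.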

3. Install the required tools

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

Install ntpdate:

[root@master01 ~ ]# yum install -y ntpdate

[root@master01 ~ ]# systemctl enable ntpdate.service --now

3. Initialization

3.1 Configure a static IP

Omitted.

3.2 Set the hostname

Set each machine's hostname with a command like the following:

hostnamectl set-hostname master03 && bash

3.3 Configure the hosts file

# On master01, master02, master03 and node01, add the following four lines to /etc/hosts:

10.10.0.10 master01

10.10.0.11 master02

10.10.0.12 master03

10.10.0.14 node01
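If you prefer not to edit each file by hand, the four entries can be appended with a small helper. This is a minimal sketch: `add_hosts_entries` is a hypothetical name, and it takes the target file as an argument so you can try it on a scratch copy before pointing it at /etc/hosts. Entries that are already present are skipped, so running it twice is harmless.

```bash
# Hypothetical helper: append the cluster's host entries to a hosts file,
# skipping any "IP hostname" line that is already present.
add_hosts_entries() {
    hosts_file=$1
    # IP/hostname pairs from the environment plan in section 1
    for entry in "10.10.0.10 master01" "10.10.0.11 master02" \
                 "10.10.0.12 master03" "10.10.0.14 node01"; do
        # -x: match the whole line; -F: treat the entry as a fixed string
        if ! grep -qxF "$entry" "$hosts_file"; then
            printf '%s\n' "$entry" >> "$hosts_file"
        fi
    done
}
```

Run `add_hosts_entries /etc/hosts` on each of the four machines.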

3.4 Set up password-less SSH login between hosts; do the following on every machine

# Generate an SSH key pair

ssh-keygen -t rsa #press Enter at every prompt and leave the passphrase empty

Then install the local SSH public key into the corresponding account on each remote host.
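The original does not show the command for this step. One common way to do it is `ssh-copy-id`; the loop below is a sketch that assumes the root account and the hostnames configured in /etc/hosts. `copy_ssh_keys` and the `SSH_COPY_ID` override (handy for a dry run with `echo`) are hypothetical additions, not part of the original procedure.

```bash
# Copy this machine's public key to every cluster node, including itself.
# SSH_COPY_ID defaults to the real ssh-copy-id; set it to "echo" for a dry run.
copy_ssh_keys() {
    for host in master01 master02 master03 node01; do
        ${SSH_COPY_ID:-ssh-copy-id} -i "$HOME/.ssh/id_rsa.pub" "root@$host" || return 1
    done
}
```

Run `copy_ssh_keys` on every machine; `ssh-copy-id` asks for each host's root password once.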

3.5 Disable the firewalld firewall

Run on master01, master02, master03 and node01:

systemctl stop firewalld ; systemctl disable firewalld

3.6 Disable SELinux

Run on master01, master02, master03 and node01:

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

#After editing the SELinux configuration file, reboot the machine for the change to take permanent effect

After the reboot, log in and verify that the change took effect:

getenforce

#If the output is Disabled, SELinux is off
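The same change can be wrapped in a function that also handles a `permissive` setting. A sketch, assuming the stock /etc/selinux/config layout with `SELINUX=` on its own line; `disable_selinux_config` is a hypothetical name. Keep in mind that `sed` matches case-sensitively and the key in this file is uppercase.

```bash
# Hypothetical helper: set SELINUX=disabled in an SELinux config file
# (default /etc/selinux/config). Only the SELINUX= line is changed;
# SELINUXTYPE= and comments are left as they are.
disable_selinux_config() {
    cfg=${1:-/etc/selinux/config}
    tmp=$(mktemp) || return 1
    # Rewrite into a temp file, then copy back so the original keeps its owner and mode
    sed -E 's/^SELINUX=(enforcing|permissive)$/SELINUX=disabled/' "$cfg" > "$tmp" &&
        cat "$tmp" > "$cfg"
    rc=$?
    rm -f "$tmp"
    return $rc
}
```

For example, `disable_selinux_config && setenforce 0`: `setenforce 0` switches the running system to permissive mode right away, and the file change takes full effect after the reboot.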

3.7 Disable the swap partition

Run on master01, master02, master03 and node01:

#Disable temporarily

swapoff -a

#Disable permanently: comment out the swap mount by adding # at the start of the swap line

vim /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0

#If the machine is a cloned VM, the UUID also needs to be removed
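Instead of editing /etc/fstab in vim, the swap line can be commented out with awk. A sketch: `comment_out_swap` is a hypothetical helper that treats a line as a swap entry when its third field (the filesystem type) is `swap`, and leaves lines that are already commented alone, so it can safely be run more than once.

```bash
# Hypothetical helper: comment out active swap entries in an fstab-style file
# (default /etc/fstab). A line counts as swap when its third field, the
# filesystem type, is "swap"; lines that are already comments are kept as-is.
comment_out_swap() {
    fstab=${1:-/etc/fstab}
    tmp=$(mktemp) || return 1
    awk '$0 !~ /^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
        "$fstab" > "$tmp" && cat "$tmp" > "$fstab"
    rc=$?
    rm -f "$tmp"
    return $rc
}
```

For example, `swapoff -a && comment_out_swap`; afterwards `free -m` should report 0 for swap.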

3.8 Tune kernel parameters

Run on master01, master02, master03 and node01:

Install ipvsadm and related tools on all nodes (traffic will be scheduled in IPVS mode):

yum install ipvsadm ipset sysstat conntrack libseccomp -y

Configure the IPVS modules on all nodes. On kernel 4.19 and later, nf_conntrack_ipv4 has been renamed to nf_conntrack; on 4.18 and earlier, use nf_conntrack_ipv4 instead:

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack
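The kernel-version rule above can be scripted. A minimal sketch, assuming the release string starts with `major.minor` as `uname -r` prints it; `conntrack_module` is a hypothetical helper. Some distribution kernels backport the change (reportedly including RHEL 8's 4.18 kernel), so `modinfo nf_conntrack_ipv4` remains the authoritative check.

```bash
# Print the conntrack module name for a kernel release string (default: running kernel).
# Per the rule above: 4.19 and later use nf_conntrack, older kernels nf_conntrack_ipv4.
conntrack_module() {
    release=${1:-$(uname -r)}
    major=${release%%.*}          # "3.10.0-1160.el7.x86_64" -> "3"
    rest=${release#*.}            # -> "10.0-1160.el7.x86_64"
    minor=${rest%%[!0-9]*}        # -> "10"
    if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}
```

For example, `modprobe -- "$(conntrack_module)"` loads whichever name applies to the running kernel.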

[root@master01 ~ ]# vim /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_reject

ipip

Then run systemctl enable --now systemd-modules-load.service so the modules are loaded at boot.

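Kernel parameters: the br_netfilter module passes bridged traffic through the iptables chains, and the bridge-nf-call kernel parameters must be enabled for this forwarding to happen.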

[root@master01 ~]# modprobe br_netfilter

#Modify the kernel parameters

Enable the kernel parameters a Kubernetes cluster needs; configure them on all nodes:

cat <<EOF > /etc/sysctl.d/k8s.conf
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
fs.may_detach_mounts = 1
net.ipv4.conf.all.route_localnet = 1
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_watches=89100
fs.file-max=52706963
fs.nr_open=52706963
net.netfilter.nf_conntrack_max=2310720
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_probes = 3
net.ipv4.tcp_keepalive_intvl = 15
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_tw_reuse = 1
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.ipv4.ip_conntrack_max = 65536
net.ipv4.tcp_timestamps = 0
net.core.somaxconn = 16384
EOF

sysctl --system

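If running sysctl -p /etc/sysctl.d/k8s.conf fails with:

sysctl: cannot stat /proc/sys/net/bridge/bridge-nf-call-ip6tables: No such file or directory

sysctl: cannot stat /proc/sys/net/bridge/bridge-nf-call-iptables: No such file or directory

Cause and fix: the br_netfilter module is not loaded, so /proc/sys/net/bridge does not exist yet. Load it and rerun sysctl: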

modprobe br_netfilter
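After `sysctl --system`, you can confirm the running values match the file. A minimal sketch: `check_sysctl` (a hypothetical helper) reads each `key = value` line, maps the dotted key to a path under /proc/sys, and reports keys that are missing or differ. It only handles single-valued keys, which covers every entry in k8s.conf; the optional second argument, an alternative /proc/sys root, exists only to make it testable.

```bash
# Compare "key = value" lines in a sysctl.d file with the live kernel values.
# Prints one line per missing or mismatched key; returns 1 if any were found.
check_sysctl() {
    conf=$1
    root=${2:-/proc/sys}
    status=0
    list=$(mktemp) || return 1
    # Keep only "key=value" lines: drop comments/blank lines and all blanks
    grep -E '^[a-z]' "$conf" | tr -d ' \t' > "$list"
    # Read from a file rather than a pipe so $status survives the loop
    while IFS='=' read -r key want; do
        path="$root/$(printf '%s' "$key" | tr . /)"
        if ! have=$(cat "$path" 2>/dev/null); then
            echo "missing: $key"; status=1
        elif [ "$have" != "$want" ]; then
            echo "mismatch: $key=$have (want $want)"; status=1
        fi
    done < "$list"
    rm -f "$list"
    return $status
}
```

For example, `check_sysctl /etc/sysctl.d/k8s.conf`; `missing:` lines for the bridge keys usually mean br_netfilter is not loaded.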

After configuring the kernel on all nodes, reboot the servers and confirm that the modules are still loaded after the reboot:

reboot

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

[root@master01 ~ ]# lsmod | grep --color=auto -e ip_vs -e nf_conntrack

ip_vs_ftp 16384 0

nf_nat 32768 1 ip_vs_ftp

ip_vs_sed 16384 0

ip_vs_nq 16384 0

ip_vs_fo 16384 0

ip_vs_sh 16384 0

ip_vs_dh 16384 0

ip_vs_lblcr 16384 0

ip_vs_lblc 16384 0

ip_vs_wrr 16384 0

ip_vs_rr 16384 0

ip_vs_wlc 16384 0

ip_vs_lc 16384 0

ip_vs 151552 24 ip_vs_wlc,ip_vs_rr,ip_vs_dh,ip_vs_lblcr,ip_vs_sh,ip_vs_fo,ip_vs_nq,ip_vs_lblc,ip_vs_wrr,ip_vs_lc,ip_vs_sed,ip_vs_ftp

nf_conntrack 143360 2 nf_nat,ip_vs

nf_defrag_ipv6 20480 1 nf_conntrack

nf_defrag_ipv4 16384 1 nf_conntrack

libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs
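To make this check repeatable, the module list in ipvs.conf can be compared with /proc/modules, which is what lsmod reads. A sketch with a hypothetical `missing_modules` helper. Note that modules built into the kernel never appear in /proc/modules, so a module reported here may simply be built in (see /lib/modules/$(uname -r)/modules.builtin).

```bash
# Print the modules named in a modules-load.d file that are not currently loaded.
# $1: module list (default the ipvs.conf above); $2: loaded-module table (default /proc/modules).
missing_modules() {
    conf=${1:-/etc/modules-load.d/ipvs.conf}
    loaded=${2:-/proc/modules}
    # Skip comment and blank lines, then look each module up by name
    grep -Ev '^[[:space:]]*(#|$)' "$conf" | while read -r mod; do
        # The first field of every /proc/modules line is the module name
        awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$loaded" || echo "$mod"
    done
}
```

Empty output from `missing_modules` means every listed module is loaded.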

3.9 Install docker-ce from the Aliyun mirror

# Step 1: install the required system tools

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: add the repository

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: point the repository at the Aliyun mirror

sudo sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

# Step 4: refresh the cache and install docker-ce

sudo yum makecache fast

sudo yum -y install docker-ce

# Step 5: start the Docker service

sudo service docker start

3.10 Configure a Docker registry mirror

[root@master01 ~ ]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["http://abcd1234.m.daocloud.io"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

systemctl daemon-reload

systemctl restart docker

systemctl status docker
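The original shows the finished daemon.json but not how it was created. A sketch that writes it with a heredoc; `write_daemon_json` is hypothetical, and the mirror URL is the placeholder from above, to be replaced with your own accelerator address. The systemd cgroup driver is set so that Docker and the kubelet can use the same driver.

```bash
# Write a Docker daemon.json with a registry mirror and the systemd cgroup driver.
# $1: output path (default /etc/docker/daemon.json); $2: mirror URL (placeholder default).
write_daemon_json() {
    out=${1:-/etc/docker/daemon.json}
    mirror=${2:-http://abcd1234.m.daocloud.io}
    mkdir -p "$(dirname "$out")"
    cat > "$out" <<EOF
{
  "registry-mirrors": ["$mirror"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

For example, `write_daemon_json && systemctl daemon-reload && systemctl restart docker`, after which `docker info | grep -i 'cgroup driver'` should show `systemd`.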

4. Build the etcd cluster

4.1 Create the etcd working directories

#Create the directories for the configuration files and certificates

[root@master01 ~ ]#mkdir -p /etc/etcd

[root@master01 ~ ]#mkdir -p /etc/etcd/ssl

[root@master02 ~ ]#mkdir -p /etc/etcd

[root@master02 ~ ]#mkdir -p /etc/etcd/ssl

[root@master03 ~ ]#mkdir -p /etc/etcd

[root@master03 ~ ]#mkdir -p /etc/etcd/ssl

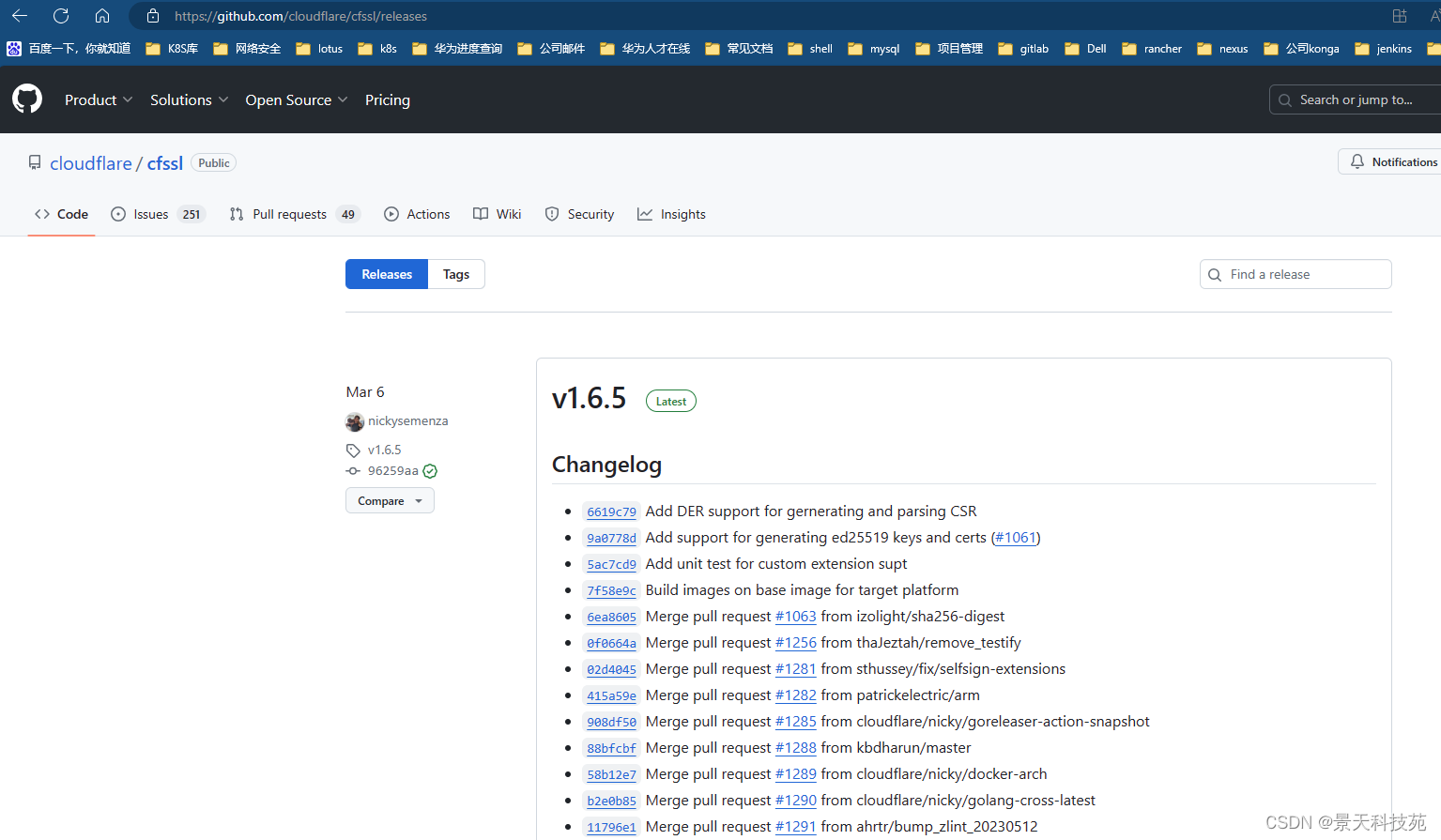

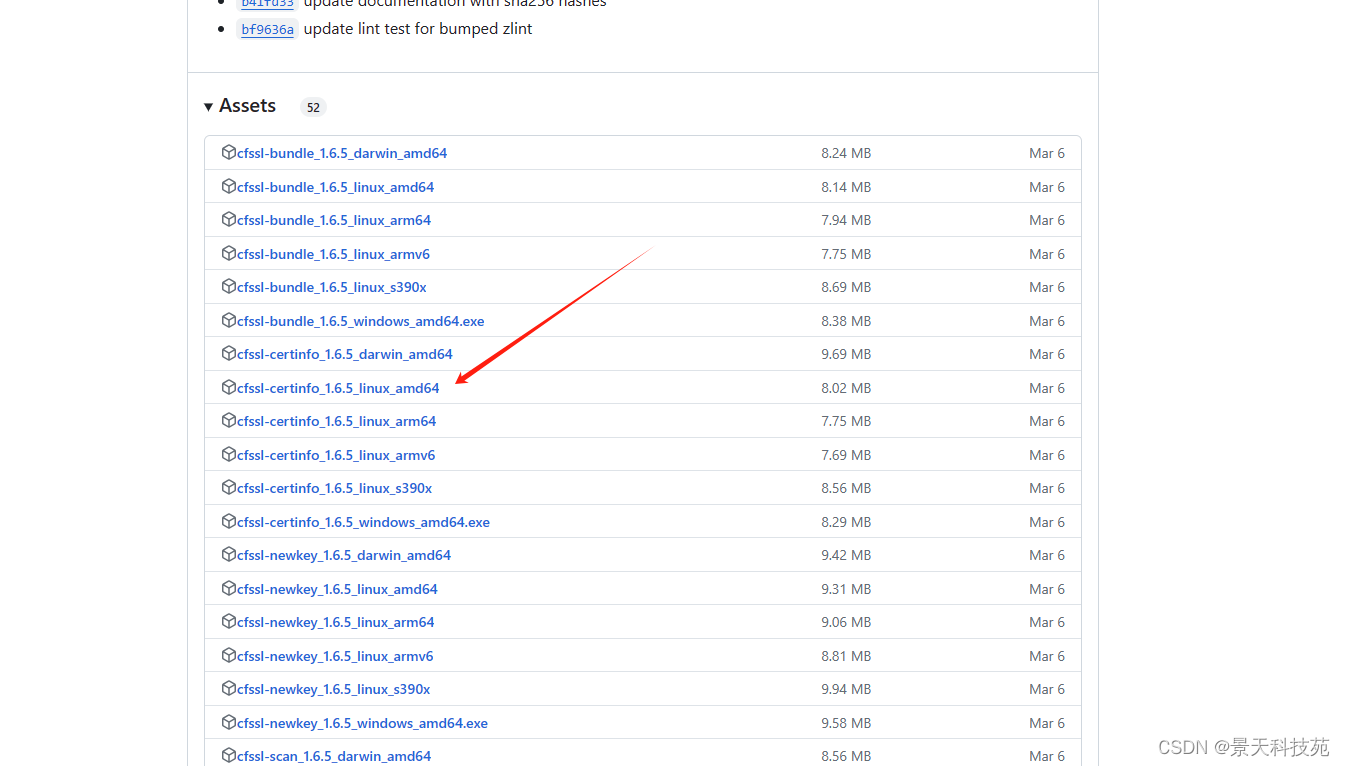

4.2 Install the cfssl certificate-signing tools

Download the certificate tools from:

https://github.com/cloudflare/cfssl/releases

[root@master01 ~ ]#mkdir /data/work -p

[root@master01 ~ ]#cd /data/work/

#Upload cfssl-certinfo_linux-amd64, cfssljson_linux-amd64 and cfssl_linux-amd64 to /data/work/

[root@master01 work ]#ll

total 18808
-rw-r--r-- 1 root root 6595195 Oct 25 15:39 cfssl-certinfo_linux-amd64
-rw-r--r-- 1 root root 2277873 Oct 25 15:39 cfssljson_linux-amd64
-rw-r--r-- 1 root root 10376657 Oct 25 15:39 cfssl_linux-amd64

#Make the files executable

[root@master01 work ]#chmod +x *

[root@master01 work ]#ll

total 18808
-rwxr-xr-x 1 root root 6595195 Oct 25 15:39 cfssl-certinfo_linux-amd64
-rwxr-xr-x 1 root root 2277873 Oct 25 15:39 cfssljson_linux-amd64
-rwxr-xr-x 1 root root 10376657 Oct 25 15:39 cfssl_linux-amd64

[root@master01 work ]#mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@master01 work ]#mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@master01 work ]#mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

4.3 Configure the CA certificate

Generate the CA certificate. First, initialize the default template files:

cfssl print-defaults config > ca-config.json

cfssl print-defaults csr > ca-csr.json

#CA certificate signing request (CSR) file

[root@master01 work ]#cat ca-csr.json

{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "k8s",
      "OU": "system"
    }
  ],
  "ca": {
    "expiry": "87600h"
  }
}

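Notes:

CN: Common Name. kube-apiserver extracts this field from the certificate as the request's user name. Browsers use it to verify whether a website is legitimate: for an SSL certificate it is usually the website's domain name, for a code-signing certificate the name of the applying organization, and for a client certificate the name of the applicant.

O: Organization. kube-apiserver extracts this field from the certificate as the group the requesting user belongs to. For an SSL or code-signing certificate it is usually the name of the applying organization; for a client certificate it is the organization the applicant belongs to.

L: city

ST: state or province

C: country; must be the two-letter country code, e.g. CN for China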
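The CA, etcd, kube-apiserver and admin CSRs in this document differ only in CN, O and hosts, so they could be generated from one template. A sketch with a hypothetical `make_csr` helper, using the ST/L/OU values from this document; the CA CSR additionally carries the `"ca": {"expiry": ...}` block, which this sketch leaves out.

```bash
# Print a cfssl CSR JSON document.
# $1 = CN (user name for kube-apiserver), $2 = O (group), remaining args = hosts.
make_csr() {
    cn=$1; org=$2; shift 2
    hosts=""
    for h in "$@"; do
        hosts="$hosts${hosts:+, }\"$h\""   # comma-separate the quoted entries
    done
    cat <<EOF
{
  "CN": "$cn",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "Hubei", "L": "Wuhan", "O": "$org", "OU": "system" }
  ]
}
EOF
}
```

For example, `make_csr admin system:masters > admin-csr.json` or `make_csr etcd k8s 127.0.0.1 10.10.0.10 10.10.0.11 10.10.0.12 10.10.0.100 > etcd-csr.json`.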

[root@master01 work ]#cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2022/10/25 16:59:15 [INFO] generating a new CA key and certificate from CSR
2022/10/25 16:59:15 [INFO] generate received request
2022/10/25 16:59:15 [INFO] received CSR
2022/10/25 16:59:15 [INFO] generating key: rsa-2048
2022/10/25 16:59:15 [INFO] encoded CSR
2022/10/25 16:59:15 [INFO] signed certificate with serial number 389912219972037047043791867430049210836195704387

[root@master01 work ]#ll

total 16
-rw-r--r-- 1 root root 997 Oct 25 16:59 ca.csr
-rw-r--r-- 1 root root 253 Oct 25 15:39 ca-csr.json
-rw------- 1 root root 1679 Oct 25 16:59 ca-key.pem
-rw-r--r-- 1 root root 1346 Oct 25 16:59 ca.pem

#CA signing configuration file (ca-config.json)

[root@master01 work ]#cat ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

4.4 Generate the etcd certificate

#etcd certificate request: change the IPs in hosts to the IPs of your own etcd nodes

[root@master01 work ]#cat etcd-csr.json

{
  "CN": "etcd",
  "hosts": [
    "127.0.0.1",
    "10.10.0.10",
    "10.10.0.11",
    "10.10.0.12",
    "10.10.0.100"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [{
    "C": "CN",
    "ST": "Hubei",
    "L": "Wuhan",
    "O": "k8s",
    "OU": "system"
  }]
}

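#The IPs in the hosts field above are the internal cluster-communication IPs of all etcd nodes, plus the VIP. You can reserve a few extra IPs for future expansion.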

[root@master01 work ]#cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2022/10/25 17:08:11 [INFO] generate received request
2022/10/25 17:08:11 [INFO] received CSR
2022/10/25 17:08:11 [INFO] generating key: rsa-2048
2022/10/25 17:08:11 [INFO] encoded CSR
2022/10/25 17:08:11 [INFO] signed certificate with serial number 135048769106462136999813410398927441265408992932
2022/10/25 17:08:11 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for
websites. For more information see the Baseline Requirements for the Issuance and Management
of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);
specifically, section 10.2.3 ("Information Requirements").

The name passed to -profile in the command above must match a profile name under "profiles" in ca-config.json.

[root@master01 work ]#ls etcd*.pem

etcd-key.pem etcd.pem

4.5 Deploy the etcd cluster

Download etcd:

https://github.com/etcd-io/etcd/releases

Upload etcd-v3.4.13-linux-amd64.tar.gz to /data/work:

[root@master01 work ]#ll

-rw-r--r-- 1 root root 17373136 Oct 25 15:39 etcd-v3.4.13-linux-amd64.tar.gz

[root@master01 work ]#tar xf etcd-v3.4.13-linux-amd64.tar.gz

[root@master01 work ]#cd etcd-v3.4.13-linux-amd64/

[root@master01 etcd-v3.4.13-linux-amd64 ]#cp -a etcdctl etcd /usr/local/bin/

Copy the etcd binaries to the other two master nodes:

[root@master01 etcd-v3.4.13-linux-amd64 ]#scp -p etcd* 10.10.0.11:/usr/local/bin/

etcd 100% 23MB 119.7MB/s 00:00

etcdctl 100% 17MB 69.5MB/s 00:00

[root@master01 etcd-v3.4.13-linux-amd64 ]#scp -p etcd* 10.10.0.12:/usr/local/bin/

etcd 100% 23MB 112.7MB/s 00:00

etcdctl

#Create the configuration file

[root@master01 work ]#cat etcd.conf

#[Member]
ETCD_NAME="etcd1"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://10.10.0.10:2380"
ETCD_LISTEN_CLIENT_URLS="https://10.10.0.10:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.10.0.10:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://10.10.0.10:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://10.10.0.10:2380,etcd2=https://10.10.0.11:2380,etcd3=https://10.10.0.12:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

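#Notes:

ETCD_NAME: member name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client access

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of the cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining; new for a new cluster, existing to join an existing cluster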
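Because only ETCD_NAME and the local IP change from node to node, the three etcd.conf files can be rendered rather than edited by hand. A sketch under that assumption; `render_etcd_conf` is hypothetical and the member list is the one from the environment plan.

```bash
# Print an etcd.conf for one member. $1 = member name, $2 = member IP.
render_etcd_conf() {
    name=$1; ip=$2
    cluster="etcd1=https://10.10.0.10:2380,etcd2=https://10.10.0.11:2380,etcd3=https://10.10.0.12:2380"
    cat <<EOF
#[Member]
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="$cluster"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

For example, `render_etcd_conf etcd2 10.10.0.11 | ssh master02 'cat > /etc/etcd/etcd.conf'`.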

#Create the systemd service file

[root@master01 work ]#cat etcd.service

[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=-/etc/etcd/etcd.conf
WorkingDirectory=/var/lib/etcd/
ExecStart=/usr/local/bin/etcd \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-cert-file=/etc/etcd/ssl/etcd.pem \
  --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
  --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-client-cert-auth \
  --client-cert-auth
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

[root@master01 work ]#cp ca*.pem /etc/etcd/ssl/

[root@master01 work ]#cp etcd*.pem /etc/etcd/ssl/

[root@master01 work ]#cp etcd.conf /etc/etcd/

[root@master01 work ]#cp etcd.service /usr/lib/systemd/system/

[root@master01 work ]#for i in master02 master03;do rsync -vaz etcd.conf $i:/etc/etcd/;done

[root@master01 work ]#for i in master02 master03;do rsync -vaz etcd*.pem ca*.pem $i:/etc/etcd/ssl/;done

[root@master01 work ]#for i in master02 master03;do rsync -vaz etcd.service $i:/usr/lib/systemd/system/;done

#Start the etcd cluster

[root@master01 work]# mkdir -p /var/lib/etcd/default.etcd

[root@master02 work]# mkdir -p /var/lib/etcd/default.etcd

[root@master03 work]# mkdir -p /var/lib/etcd/default.etcd

Edit the configuration file on master02:

[root@master02 ~ ]#cat /etc/etcd/etcd.conf

#[Member]
ETCD_NAME="etcd2"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://10.10.0.11:2380"
ETCD_LISTEN_CLIENT_URLS="https://10.10.0.11:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.10.0.11:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://10.10.0.11:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://10.10.0.10:2380,etcd2=https://10.10.0.11:2380,etcd3=https://10.10.0.12:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

Edit the configuration file on master03:

[root@master03 ~ ]#vim /etc/etcd/etcd.conf

#[Member]
ETCD_NAME="etcd3"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://10.10.0.12:2380"
ETCD_LISTEN_CLIENT_URLS="https://10.10.0.12:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.10.0.12:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://10.10.0.12:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://10.10.0.10:2380,etcd2=https://10.10.0.11:2380,etcd3=https://10.10.0.12:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

[root@master01 work ]#systemctl daemon-reload

[root@master02 ~ ]#systemctl daemon-reload

[root@master03 ~ ]#systemctl daemon-reload

[root@master01 work ]#systemctl enable etcd.service --now

[root@master02 work ]#systemctl enable etcd.service --now

[root@master03 work ]#systemctl enable etcd.service --now

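When starting etcd, start the etcd service on master01 first. It will stay stuck in the starting state, because a member of a new cluster cannot finish starting until enough peers are up to form a quorum; once etcd on master02 has been started as well, the etcd on master01 comes up normally.

Then restart all of them: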

[root@master01 work ]#systemctl restart etcd.service

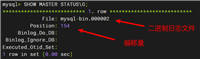

#Check the etcd cluster

[root@master01 work ]#export ETCDCTL_API=3

[root@master01 work ]#etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://10.10.0.10:2379,https://10.10.0.11:2379,https://10.10.0.12:2379 endpoint health

+-------------------------+--------+-------------+-------+
|        ENDPOINT         | HEALTH |    TOOK     | ERROR |
+-------------------------+--------+-------------+-------+
| https://10.10.0.12:2379 |   true | 10.214118ms |       |
| https://10.10.0.10:2379 |   true |  9.085152ms |       |
| https://10.10.0.11:2379 |   true |  10.12115ms |       |
+-------------------------+--------+-------------+-------+

[root@master01 work ]#

[root@master01 work ]#etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://10.10.0.10:2379,https://10.10.0.11:2379,https://10.10.0.12:2379 endpoint status

+-------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|        ENDPOINT         |        ID        | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+-------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| https://10.10.0.10:2379 | 7c3b81b30c59fb64 |  3.4.13 |   20 kB |      true |      false |        11 |         15 |                 15 |        |
| https://10.10.0.11:2379 | 86041dd24c0806ff |  3.4.13 |   25 kB |     false |      false |        11 |         15 |                 15 |        |
| https://10.10.0.12:2379 | 76002ef45e4ee68e |  3.4.13 |   20 kB |     false |      false |        11 |         15 |                 15 |        |
+-------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
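The TLS flags repeat in every etcdctl call, so a small wrapper can keep them in one place. `etcd_endpoints` and `ectl` are hypothetical names; the certificate paths and IPs are the ones used in this section.

```bash
# Build an etcdctl --endpoints value from member IPs (client port 2379).
etcd_endpoints() {
    out=""
    for ip in "$@"; do
        out="$out${out:+,}https://$ip:2379"
    done
    printf '%s\n' "$out"
}

# Run etcdctl (v3 API) against all three members with the cluster's client certificates.
ectl() {
    ETCDCTL_API=3 etcdctl --write-out=table \
        --cacert=/etc/etcd/ssl/ca.pem \
        --cert=/etc/etcd/ssl/etcd.pem \
        --key=/etc/etcd/ssl/etcd-key.pem \
        --endpoints="$(etcd_endpoints 10.10.0.10 10.10.0.11 10.10.0.12)" "$@"
}
```

For example, `ectl endpoint health`, `ectl endpoint status` or `ectl member list`.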

5. Install the Kubernetes components

5.1 Download the installation package

The binary packages are linked from the changelog on GitHub:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/

Download from GitHub:

https://github.com/kubernetes/kubernetes/releases/tag/v1.23.8

On the release page, click CHANGELOG, then Downloads for v1.23.8 > Server Binaries > kubernetes-server-linux-amd64.tar.gz, and download that file.

Avoid .0 releases such as 1.21.0: freshly released versions may still contain many bugs.

#Upload kubernetes-server-linux-amd64.tar.gz to /data/work on master01:

[root@master01 work ]#tar xf kubernetes-server-linux-amd64.tar.gz

[root@master01 work ]#cd kubernetes/

[root@master01 kubernetes ]#ll

total 33672
drwxr-xr-x 2 root root 6 May 12 2021 addons
-rw-r--r-- 1 root root 34477274 May 12 2021 kubernetes-src.tar.gz
drwxr-xr-x 3 root root 49 May 12 2021 LICENSES
drwxr-xr-x 3 root root 17 May 12 2021 server

[root@master01 kubernetes ]#cd server/bin/

[root@master01 bin ]#ll

total 986524
-rwxr-xr-x 1 root root 46678016 May 12 2021 apiextensions-apiserver
-rwxr-xr-x 1 root root 39215104 May 12 2021 kubeadm
-rwxr-xr-x 1 root root 44675072 May 12 2021 kube-aggregator
-rwxr-xr-x 1 root root 118210560 May 12 2021 kube-apiserver
-rw-r--r-- 1 root root 8 May 12 2021 kube-apiserver.docker_tag
-rw------- 1 root root 123026944 May 12 2021 kube-apiserver.tar
-rwxr-xr-x 1 root root 112746496 May 12 2021 kube-controller-manager
-rw-r--r-- 1 root root 8 May 12 2021 kube-controller-manager.docker_tag
-rw------- 1 root root 117562880 May 12 2021 kube-controller-manager.tar
-rwxr-xr-x 1 root root 40226816 May 12 2021 kubectl
-rwxr-xr-x 1 root root 114097256 May 12 2021 kubelet
-rwxr-xr-x 1 root root 39481344 May 12 2021 kube-proxy
-rw-r--r-- 1 root root 8 May 12 2021 kube-proxy.docker_tag
-rw------- 1 root root 120374784 May 12 2021 kube-proxy.tar
-rwxr-xr-x 1 root root 43716608 May 12 2021 kube-scheduler
-rw-r--r-- 1 root root 8 May 12 2021 kube-scheduler.docker_tag
-rw------- 1 root root 48532992 May 12 2021 kube-scheduler.tar
-rwxr-xr-x 1 root root 1634304 May 12 2021 mounter

[root@master01 bin ]#cp kube-apiserver kube-controller-manager kube-scheduler kubectl kubelet /usr/local/bin/

Copy the binaries to the other master nodes:

[root@master01 bin ]#rsync -avz kube-apiserver kube-controller-manager kube-scheduler kubectl kubelet master02:/usr/local/bin/

sending incremental file list

kube-apiserver

kube-controller-manager

kube-scheduler

kubectl

kubelet

sent 109,825,016 bytes received 111 bytes 6,656,068.30 bytes/sec

total size is 428,997,736 speedup is 3.91

[root@master01 bin ]#rsync -avz kube-apiserver kube-controller-manager kube-scheduler kubectl kubelet master03:/usr/local/bin/

sending incremental file list

kube-apiserver

kube-controller-manager

kube-scheduler

kubectl

kubelet

sent 109,825,016 bytes received 111 bytes 5,108,145.44 bytes/sec

total size is 428,997,736 speedup is 3.91

The server package also contains the binaries needed on worker nodes; copy them to the node:

[root@master01 bin ]#pwd

/data/work/kubernetes/server/bin

[root@master01 bin ]#scp kube-proxy kubelet node01:/usr/local/bin/

The authenticity of host 'node01 (10.10.0.14)' can't be established.
ECDSA key fingerprint is SHA256:knfjal1iy7qwpjevbphblelq/cggjs4iu3qb3gxgqgs.
ECDSA key fingerprint is MD5:6b:82:08:da:02:d6:1b:d0:ec:d1:93:c3:b8:21:6a:b7.
Are you sure you want to continue connecting (yes/no)? yes
Warning: Permanently added 'node01' (ECDSA) to the list of known hosts.
kube-proxy 100% 38MB 27.6MB/s 00:01
kubelet

[root@master01 work ]#mkdir -p /etc/kubernetes/

[root@master01 work ]#mkdir -p /etc/kubernetes/ssl

[root@master01 work ]#mkdir /var/log/kubernetes

[root@master02 ~ ]#mkdir -p /etc/kubernetes/

[root@master02 ~ ]#mkdir -p /etc/kubernetes/ssl

[root@master02 ~ ]#mkdir /var/log/kubernetes

[root@master03 ~ ]#mkdir -p /etc/kubernetes/

[root@master03 ~ ]#mkdir -p /etc/kubernetes/ssl

[root@master03 ~ ]#mkdir /var/log/kubernetes

5.2 Deploy the apiserver component

#Enable the TLS bootstrapping mechanism

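Once TLS authentication is enabled on the master apiservers, the kubelet on every node must present a valid certificate signed by the CA the apiserver uses before it can talk to the apiserver. With many nodes, issuing these client certificates takes a lot of work and also makes scaling the cluster more complex.

To simplify this, Kubernetes introduced the TLS bootstrapping mechanism to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the certificate is signed dynamically inside the cluster (the signing itself is done by kube-controller-manager) and issued to the kubelet.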

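Bootstrapping exists in many systems, the Linux boot process being one example: a bootstrap configuration is prepared in advance and loaded at power-on or system start, and can be used to set up a specific environment. The Kubernetes kubelet can likewise load such a configuration file at startup; its contents look like this: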

apiVersion: v1
clusters: null
contexts:
- context:
    cluster: kubernetes
    user: kubelet-bootstrap
  name: default
current-context: default
kind: Config
preferences: {}
users:
- name: kubelet-bootstrap
  user: {}

#How TLS bootstrapping works, step by step

1. The role of TLS

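TLS encrypts the communication to prevent man-in-the-middle eavesdropping. Moreover, if the certificate is not trusted, no connection to the apiserver can be established at all, let alone any question of whether the client has permission to request specific content from it.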

2. The role of RBAC (Role-Based Access Control)

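Once TLS has solved the communication problem, permissions are handled by RBAC (other authorization models, such as ABAC, can also be used). RBAC specifies which APIs a user or group (the subject) is allowed to request. When combined with TLS, the apiserver reads the CN field of the client certificate as the user name and the O field as the group.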

In summary:

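First, to communicate with the apiserver, a client must use a certificate signed by the apiserver's CA; only then is a trust relationship formed and a TLS connection established.

Second, the certificate's CN and O fields can supply the user and group that RBAC needs.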

#The kubelet's first start

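TLS bootstrapping lets the kubelet request a certificate from the apiserver and then use it to connect to the apiserver. But how does the kubelet connect the first time, before it has a certificate?

The apiserver configuration points to a token.csv file that contains a preset user and is loaded when the apiserver starts. That user's token, together with the apiserver's CA certificate, is written into the bootstrap.kubeconfig file used by the kubelet, and the kubelet loads bootstrap.kubeconfig at startup.

So on its first request, the kubelet uses the CA certificate in bootstrap.kubeconfig to trust the apiserver and establish TLS communication, and uses the user token in bootstrap.kubeconfig to declare its identity to the apiserver for RBAC authorization.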

token.csv format:

3940fd7fbb391d1b4d861ad17a1f0613,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Create the token file referenced in the configuration above.

Generate a token:

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

cat > /data/kubernetes/token/token.csv << EOF
8414f742b0b05960998699427f780978,kubelet-bootstrap,10001,"system:node-bootstrapper"
EOF

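On the first start, the kubelet may fail with a 401 error saying it is not authorized to access the apiserver.

This happens because, by default, the kubelet declares its identity with the preset user token in bootstrap.kubeconfig and then creates a CSR; but unless we do something about it, this user has no permissions at all, including the permission to create CSRs. So a ClusterRoleBinding is needed that binds the preset user kubelet-bootstrap to the built-in ClusterRole system:node-bootstrapper, allowing it to submit CSRs.

This is demonstrated later, when the kubelet is installed.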

#Create the token.csv file

[root@master01 work]# cat > token.csv << EOF
$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF

#Format: token,user name,UID,group

[root@master01 work ]#cat token.csv

fe63c95ecacff5f161138fddee6d0a5e,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
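Wrapping the generator in a function makes the four fields of a token.csv line explicit. A sketch; `make_bootstrap_token_line` is hypothetical, and its group argument defaults to the value used in the token.csv above.

```bash
# Print one token.csv line: token,user name,UID,"group".
# The token is 16 random bytes printed by od as hex words, with blanks removed
# (32 lowercase hex characters).
make_bootstrap_token_line() {
    group=${1:-system:kubelet-bootstrap}
    token=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')
    printf '%s,kubelet-bootstrap,10001,"%s"\n' "$token" "$group"
}
```

For example, `make_bootstrap_token_line > token.csv`.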

#Create the CSR file; replace the IPs with those of your own machines

[root@master01 work ]#cat kube-apiserver-csr.json

{
  "CN": "kubernetes",
  "hosts": [
    "127.0.0.1",
    "10.10.0.10",
    "10.10.0.11",
    "10.10.0.12",
    "10.10.0.14",
    "10.10.0.100",
    "10.255.0.1",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "k8s",
      "OU": "system"
    }
  ]
}

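#Note: if the hosts field is not empty, it must list the IPs or domain names authorized to use this certificate.

Because this certificate is used by the Kubernetes master cluster, fill in the IPs of all master nodes (you can also reserve a few extra IPs), and preferably the node IPs as well.

The first IP of the service network must also be included (normally the first IP of the service-cluster-ip-range passed to kube-apiserver, e.g. 10.255.0.1).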
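The first address of the service range is the network address plus one, which is the value that goes into hosts (it is also the ClusterIP the apiserver assigns to the built-in `kubernetes` Service). A sketch of that arithmetic in POSIX shell; `first_service_ip` is a hypothetical helper for double-checking the value.

```bash
# Print the first IP of an IPv4 CIDR, e.g. 10.255.0.0/16 -> 10.255.0.1.
first_service_ip() {
    addr=${1%/*}; bits=${1#*/}
    # Split the dotted quad into four octets
    o1=${addr%%.*}; rest=${addr#*.}
    o2=${rest%%.*}; rest=${rest#*.}
    o3=${rest%%.*}; o4=${rest#*.}
    n=$(( (o1 << 24) + (o2 << 16) + (o3 << 8) + o4 ))
    # Clear the host bits to get the network address, then add 1
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    n=$(( (n & mask) + 1 ))
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

For example, `first_service_ip 10.255.0.0/16` prints 10.255.0.1, the address listed in kube-apiserver-csr.json above.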

#Generate the certificate

[root@master01 work ]#cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

2022/10/26 09:17:42 [INFO] generate received request
2022/10/26 09:17:42 [INFO] received CSR
2022/10/26 09:17:42 [INFO] generating key: rsa-2048
2022/10/26 09:17:43 [INFO] encoded CSR
2022/10/26 09:17:43 [INFO] signed certificate with serial number 472605278820153059718832369709947675981512800305
2022/10/26 09:17:43 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for
websites. For more information see the Baseline Requirements for the Issuance and Management
of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);
specifically, section 10.2.3 ("Information Requirements").

-profile=kubernetes refers to the profile defined in ca-config.json.

#Create the kube-apiserver configuration file; replace the IPs with your own

[root@master01 work ]#cat kube-apiserver.conf

kube_apiserver_opts="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \
--anonymous-auth=false \
--bind-address=10.10.0.10 \
--secure-port=6443 \
--advertise-address=10.10.0.10 \
--insecure-port=0 \
--authorization-mode=Node,RBAC \
--runtime-config=api/all=true \
--enable-bootstrap-token-auth \
--service-cluster-ip-range=10.255.0.0/16 \
--token-auth-file=/etc/kubernetes/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
--client-ca-file=/etc/kubernetes/ssl/ca.pem \
--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \
--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \
--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \
--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
--service-account-issuer=https://kubernetes.default.svc.cluster.local \
--etcd-cafile=/etc/etcd/ssl/ca.pem \
--etcd-certfile=/etc/etcd/ssl/etcd.pem \
--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
--etcd-servers=https://10.10.0.10:2379,https://10.10.0.11:2379,https://10.10.0.12:2379 \
--enable-swagger-ui=true \
--allow-privileged=true \
--apiserver-count=3 \
--audit-log-maxage=30 \
--audit-log-maxbackup=3 \
--audit-log-maxsize=100 \
--audit-log-path=/var/log/kube-apiserver-audit.log \
--event-ttl=1h \
--alsologtostderr=true \
--logtostderr=false \
--log-dir=/var/log/kubernetes \
--v=4"

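#Notes:

--logtostderr: log to stderr instead of to files (false here, so logs go to --log-dir; --alsologtostderr=true also copies them to stderr)
--v: log verbosity level
--log-dir: log directory
--etcd-servers: etcd cluster endpoints
--bind-address: listen address
--secure-port: HTTPS (secure) port
--advertise-address: address advertised to the rest of the cluster
--allow-privileged: allow privileged containers
--service-cluster-ip-range: virtual IP range for Services
--enable-admission-plugins: admission control plugins to enable
--authorization-mode: authorization modes; enables RBAC and Node authorization (node self-management)
--enable-bootstrap-token-auth: enable bootstrap-token authentication for TLS bootstrapping
--token-auth-file: static token file used for bootstrapping (token.csv)
--service-node-port-range: default port range allocated to NodePort Services
--kubelet-client-xxx: client certificate the apiserver uses to access kubelets
--tls-xxx-file: the apiserver's HTTPS certificate and key
--etcd-xxxfile: certificates for connecting to the etcd cluster
--audit-log-xxx: audit log settings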

#Create the systemd service file

[root@master01 work ]#cat kube-apiserver.service

[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=etcd.service
Wants=etcd.service

[Service]
EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf
ExecStart=/usr/local/bin/kube-apiserver $kube_apiserver_opts
Restart=on-failure
RestartSec=5
Type=notify
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

[root@master01 work ]#cp ca*.pem /etc/kubernetes/ssl

[root@master01 work ]#cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@master01 work ]#cp token.csv /etc/kubernetes/

[root@master01 work ]#cp kube-apiserver.conf /etc/kubernetes/

[root@master01 work ]#cp kube-apiserver.service /usr/lib/systemd/system/

[root@master01 work ]#rsync -vaz token.csv master02:/etc/kubernetes/

sending incremental file list

token.csv

sent 161 bytes received 35 bytes 392.00 bytes/sec

total size is 84 speedup is 0.43

[root@master01 work ]#rsync -vaz token.csv master03:/etc/kubernetes/

sending incremental file list

token.csv

sent 161 bytes received 35 bytes 78.40 bytes/sec

total size is 84 speedup is 0.43

[root@master01 work ]#rsync -vaz kube-apiserver*.pem master02:/etc/kubernetes/ssl/

sending incremental file list

kube-apiserver-key.pem

kube-apiserver.pem

sent 2,604 bytes received 54 bytes 5,316.00 bytes/sec

total size is 3,310 speedup is 1.25

[root@master01 work ]#rsync -vaz kube-apiserver*.pem master03:/etc/kubernetes/ssl/

sending incremental file list

kube-apiserver-key.pem

kube-apiserver.pem

sent 2,604 bytes received 54 bytes 5,316.00 bytes/sec

total size is 3,310 speedup is 1.25

[root@master01 work ]#rsync -vaz ca*.pem master02:/etc/kubernetes/ssl/

sending incremental file list

ca-key.pem

ca.pem

sent 2,420 bytes received 54 bytes 4,948.00 bytes/sec

total size is 3,025 speedup is 1.22

[root@master01 work ]#rsync -vaz ca*.pem master03:/etc/kubernetes/ssl/

sending incremental file list

ca-key.pem

ca.pem

sent 2,420 bytes received 54 bytes 1,649.33 bytes/sec

total size is 3,025 speedup is 1.22

[root@master01 work ]#rsync -vaz kube-apiserver.conf master02:/etc/kubernetes/

sending incremental file list

kube-apiserver.conf

sent 1,005 bytes received 54 bytes 2,118.00 bytes/sec

total size is 1,959 speedup is 1.85

[root@master01 work ]#rsync -vaz kube-apiserver.conf master03:/etc/kubernetes/

sending incremental file list

kube-apiserver.conf

sent 707 bytes received 35 bytes 1,484.00 bytes/sec

total size is 1,597 speedup is 2.15

[root@master01 work ]#rsync -vaz kube-apiserver.service master02:/usr/lib/systemd/system/

sending incremental file list

kube-apiserver.service

sent 340 bytes received 35 bytes 750.00 bytes/sec

total size is 362 speedup is 0.97

[root@master01 work ]#rsync -vaz kube-apiserver.service master03:/usr/lib/systemd/system/

sending incremental file list

kube-apiserver.service

sent 340 bytes received 35 bytes 750.00 bytes/sec

total size is 362 speedup is 0.97

Note: on master02 and master03, change the IP addresses in kube-apiserver.conf to each machine's own IP.
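Only --bind-address and --advertise-address differ between the three files, so instead of editing them by hand you can rewrite both with sed. A sketch; `set_apiserver_ip` is hypothetical and assumes each flag appears in the file as `--flag=IPv4-address`. The --etcd-servers list is deliberately left alone.

```bash
# Rewrite --bind-address and --advertise-address in a kube-apiserver.conf.
# $1 = config file, $2 = this host's IP. Other flags are not touched.
set_apiserver_ip() {
    conf=$1; ip=$2
    tmp=$(mktemp) || return 1
    sed -E "s/--(bind|advertise)-address=[0-9.]+/--\1-address=$ip/" "$conf" > "$tmp" &&
        cat "$tmp" > "$conf"   # copy back so the file keeps its owner and mode
    rc=$?
    rm -f "$tmp"
    return $rc
}
```

For example, on master02: `set_apiserver_ip /etc/kubernetes/kube-apiserver.conf 10.10.0.11`.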

master02 configuration file:

[root@master02 kubernetes ]#cat kube-apiserver.conf

kube_apiserver_opts="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \
--anonymous-auth=false \
--bind-address=10.10.0.11 \
--secure-port=6443 \
--advertise-address=10.10.0.11 \
--insecure-port=0 \
--authorization-mode=Node,RBAC \
--runtime-config=api/all=true \
--enable-bootstrap-token-auth \
--service-cluster-ip-range=10.255.0.0/16 \
--token-auth-file=/etc/kubernetes/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
--client-ca-file=/etc/kubernetes/ssl/ca.pem \
--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \
--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \
--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \
--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
--service-account-issuer=https://kubernetes.default.svc.cluster.local \
--etcd-cafile=/etc/etcd/ssl/ca.pem \
--etcd-certfile=/etc/etcd/ssl/etcd.pem \
--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
--etcd-servers=https://10.10.0.10:2379,https://10.10.0.11:2379,https://10.10.0.12:2379 \
--enable-swagger-ui=true \
--allow-privileged=true \
--apiserver-count=3 \
--audit-log-maxage=30 \
--audit-log-maxbackup=3 \
--audit-log-maxsize=100 \
--audit-log-path=/var/log/kube-apiserver-audit.log \
--event-ttl=1h \
--alsologtostderr=true \
--logtostderr=false \
--log-dir=/var/log/kubernetes \
--v=4"

master03 configuration file:

[root@master03 kubernetes ]#cat kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \
--anonymous-auth=false \
--bind-address=10.10.0.12 \
--secure-port=6443 \
--advertise-address=10.10.0.12 \
--insecure-port=0 \
--authorization-mode=Node,RBAC \
--runtime-config=api/all=true \
--enable-bootstrap-token-auth \
--service-cluster-ip-range=10.255.0.0/16 \
--token-auth-file=/etc/kubernetes/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
--client-ca-file=/etc/kubernetes/ssl/ca.pem \
--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \
--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \
--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \
--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
--service-account-issuer=https://kubernetes.default.svc.cluster.local \
--etcd-cafile=/etc/etcd/ssl/ca.pem \
--etcd-certfile=/etc/etcd/ssl/etcd.pem \
--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
--etcd-servers=https://10.10.0.10:2379,https://10.10.0.11:2379,https://10.10.0.12:2379 \
--enable-swagger-ui=true \
--allow-privileged=true \
--apiserver-count=3 \
--audit-log-maxage=30 \
--audit-log-maxbackup=3 \
--audit-log-maxsize=100 \
--audit-log-path=/var/log/kube-apiserver-audit.log \
--event-ttl=1h \
--alsologtostderr=true \
--logtostderr=false \
--log-dir=/var/log/kubernetes \
--v=4"
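Because the three kube-apiserver.conf files differ only in --bind-address and --advertise-address, the per-master copies can be derived from one template instead of being edited by hand. The sketch below assumes exactly that (true for the files above); render_apiserver_conf is a hypothetical helper name, and the `\b` word boundary relies on GNU sed, the CentOS default.

```shell
# Sketch: derive another master's kube-apiserver.conf from master01's copy.
# Assumption: only --bind-address and --advertise-address are node-specific.
render_apiserver_conf() {
  local template="$1" src_ip="$2" dst_ip="$3" src_re
  # Escape the dots so the IP matches literally in the sed regex.
  src_re=$(printf '%s' "$src_ip" | sed 's/\./\\./g')
  # \b (GNU sed) stops 10.10.0.10 from also matching e.g. 10.10.0.100.
  # --etcd-servers and every other flag are copied unchanged.
  sed -e "s/--bind-address=${src_re}\b/--bind-address=${dst_ip}/" \
      -e "s/--advertise-address=${src_re}\b/--advertise-address=${dst_ip}/" \
      "$template"
}
```

For example, on master01: `render_apiserver_conf /etc/kubernetes/kube-apiserver.conf 10.10.0.10 10.10.0.12 > kube-apiserver.conf.master03`, then copy the result to /etc/kubernetes/kube-apiserver.conf on master03.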

[root@master01 work ]#systemctl daemon-reload

[root@master02 kubernetes ]#systemctl daemon-reload

[root@master03 kubernetes ]#systemctl daemon-reload

[root@master01 work ]#systemctl enable kube-apiserver.service --now

[root@master02 kubernetes ]#systemctl enable kube-apiserver.service --now

[root@master03 kubernetes ]#systemctl enable kube-apiserver.service --now

[root@master01 work ]#curl --insecure https://10.10.0.10:6443/

{
  "kind": "Status",
  "apiVersion": "v1",
  "metadata": {
  },
  "status": "Failure",
  "message": "Unauthorized",
  "reason": "Unauthorized",
  "code": 401
}

The 401 above is the expected response: the request has not been authenticated yet.
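The same check can be run against all three apiservers in one go. In this sketch the MASTERS list mirrors the IP plan in section 1, and CURL is a hypothetical override hook used only so the loop can be tested without a cluster. At this stage each apiserver should answer 401; 000 would mean curl could not connect at all.

```shell
# Sketch: probe each apiserver's secure port and print only the HTTP status.
# MASTERS mirrors the control-plane IPs from section 1.
MASTERS=(10.10.0.10 10.10.0.11 10.10.0.12)

probe_apiservers() {
  local ip code
  for ip in "${MASTERS[@]}"; do
    # -s silent, -k skip TLS verification (like --insecure above),
    # -o /dev/null discards the body, -w prints just the status code.
    # CURL is a hypothetical override hook for testing; defaults to curl.
    code=$(${CURL:-curl} -sk -o /dev/null -w '%{http_code}' "https://${ip}:6443/")
    echo "${ip} -> ${code}"
  done
}
```

Run `probe_apiservers` on any master; each line should end in 401 until client credentials are configured.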

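5.3 Deploying the kubectl component

kubectl is the client tool for operating on k8s resources: creating, deleting, updating, querying, and so on.

When kubectl operates on resources, how does it know which cluster to connect to?

In a kubeadm installation it relies on the file /etc/kubernetes/admin.conf, which kubeadm generates. kubectl reads this file and uses its settings

to access k8s resources: /etc/kubernetes/admin.conf records which k8s cluster to access and which certificates to use.

You can set the KUBECONFIG environment variable:

[root@master01 ~ ]#export KUBECONFIG=/etc/kubernetes/admin.conf

kubectl then automatically loads the file named by KUBECONFIG to decide which cluster's resources it manages.

Alternatively, use the method below, which kubeadm prints when it initializes a cluster:

[root@master01 ~ ]#mkdir -p /root/.kube && cp /etc/kubernetes/admin.conf /root/.kube/config

kubectl then loads /root/.kube/config to operate on k8s resources.

If KUBECONFIG is set, kubectl uses the file it points to;

if KUBECONFIG is not set, kubectl falls back to /root/.kube/config to decide which cluster's resources to manage.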
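The lookup order can be written down as a small function. kubectl itself checks the --kubeconfig flag first, then $KUBECONFIG, then $HOME/.kube/config; when $KUBECONFIG holds a colon-separated list, kubectl merges those files. which_kubeconfig is a hypothetical helper that only prints the candidate paths; it does not parse or merge them.

```shell
# Sketch of kubectl's kubeconfig lookup order:
#   1. --kubeconfig flag  2. $KUBECONFIG  3. $HOME/.kube/config
which_kubeconfig() {
  local flag="$1"   # value passed to --kubeconfig, empty if not given
  if [ -n "$flag" ]; then
    echo "$flag"
  elif [ -n "${KUBECONFIG:-}" ]; then
    # KUBECONFIG may list several files separated by ':'; print one per line.
    echo "$KUBECONFIG" | tr ':' '\n'
  else
    echo "$HOME/.kube/config"
  fi
}
```

For example, with KUBECONFIG unset, `which_kubeconfig` run as root prints /root/.kube/config.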

In a binary installation /root/.kube/config is not generated automatically; you have to create it by hand.

# Create the CSR request file

[root@master01 work ]#cat admin-csr.json

{
  "CN": "admin",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "system:masters",
      "OU": "system"
    }
  ]
}

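# Note: kube-apiserver later uses RBAC to authorize client requests (from kubelet, kube-proxy, pods, and so on).

kube-apiserver predefines a number of RoleBindings for RBAC; for example, cluster-admin binds the group system:masters to the role cluster-admin, and that role grants permission to call every kube-apiserver API.

O sets the certificate's group to system:masters. When a client such as kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the certificate's group is the pre-authorized system:masters, the client is granted access to all APIs.

Note: this admin certificate is used later to generate the administrator's kubeconfig file.

RBAC is now the recommended way to control roles and permissions in Kubernetes.

Kubernetes takes the certificate's CN field as the user and the O field as the group. "O": "system:masters" must be exactly system:masters, otherwise the later kubectl create clusterrolebinding step will fail.

# With O set to system:masters, the built-in cluster-admin ClusterRoleBinding binds the system:masters group to the cluster-admin ClusterRole.

# Generate the certificate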

[root@master01 work ]#cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

2022/10/26 13:13:36 [INFO] generate received request
2022/10/26 13:13:36 [INFO] received CSR
2022/10/26 13:13:36 [INFO] generating key: rsa-2048
2022/10/26 13:13:37 [INFO] encoded CSR
2022/10/26 13:13:37 [INFO] signed certificate with serial number 446576960760276520597218013698142034111813000185
2022/10/26 13:13:37 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for
websites. For more information see the Baseline Requirements for the Issuance and Management
of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);
specifically, section 10.2.3 ("Information Requirements").

-profile=kubernetes refers to the kubernetes profile defined in ca-config.json.

As a result, admin and every member of the system:masters group are trusted by the apiserver.

[root@master01 work ]#cp admin*.pem /etc/kubernetes/ssl/
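To confirm which user (CN) and group (O) the apiserver will take from a client certificate, you can inspect it with openssl. In this sketch a throwaway self-signed certificate with the same subject as admin-csr.json is created only so the example runs anywhere; on master01 you would call show_identity (a hypothetical helper) on /etc/kubernetes/ssl/admin.pem instead.

```shell
# Sketch: print the subject of a client certificate on one line.
# kube-apiserver maps CN -> user name and O -> group.
show_identity() {
  openssl x509 -in "$1" -noout -subject -nameopt RFC2253
}

# Demo only: a throwaway self-signed cert with the admin-csr.json subject.
demo_dir=$(mktemp -d)
openssl req -x509 -newkey rsa:2048 -nodes -days 1 \
  -subj "/C=CN/ST=Hubei/L=Wuhan/O=system:masters/OU=system/CN=admin" \
  -keyout "$demo_dir/admin-demo-key.pem" -out "$demo_dir/admin-demo.pem" 2>/dev/null
show_identity "$demo_dir/admin-demo.pem"
```

On master01, `show_identity /etc/kubernetes/ssl/admin.pem` should likewise show CN=admin and O=system:masters.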

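Configure the security context

# Create the kubeconfig file (an important step)

A kubeconfig is kubectl's configuration file. It contains everything needed to access the apiserver,

such as the apiserver address, the CA certificate, and the client's own certificate. (If you get an error saying the kubeconfig path cannot be found, copy the file to that path manually; otherwise ignore this.)

A binary deployment does not generate ~/.kube/config.

Generate it manually:

1. Set the cluster parameters

The cluster is named kubernetes; --kubeconfig sets the name and location of the generated file.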

[root@master01 work ]#kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.10.0.10:6443 --kubeconfig=kube.config

Cluster "kubernetes" set.

# View the contents of kube.config

[root@master01 work ]#cat kube.config

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts: null
current-context: ""
kind: Config
preferences: {}
users: null

The certificate-authority-data value is simply the base64 encoding of ca.pem (encoded, not encrypted).
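Since the field is plain base64, you can decode it and compare it with ca.pem to check that the right CA was embedded. check_embedded_ca is a hypothetical helper; it assumes the key and its value sit on one line, which is how kubectl config writes them, and uses GNU coreutils base64.

```shell
# Sketch: decode certificate-authority-data and compare it byte-for-byte with ca.pem.
check_embedded_ca() {
  local kubeconfig="$1" ca="$2"
  # Take the first value after "certificate-authority-data:" and decode it.
  awk '/certificate-authority-data:/ {print $2; exit}' "$kubeconfig" \
    | base64 -d | cmp -s - "$ca"
}
```

Usage: `check_embedded_ca kube.config ca.pem && echo "embedded CA matches ca.pem"`.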

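2. Set the client authentication parameters

In set-credentials admin, admin matches the CN value in admin-csr.json. (This name is only a local label inside the kubeconfig; the identity the apiserver sees comes from the certificate's CN.)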

[root@master01 work ]#kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

User "admin" set.

[root@master01 work ]#cat kube.config

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts: null
current-context: ""
kind: Config
preferences: {}
users:
- name: admin
  user:
    client-certificate-data: <base64 of admin.pem, omitted>
    client-key-data: <base64 of admin-key.pem, omitted>

3. Set the context parameters

The purpose is to link the admin user with the apiserver cluster; the context is what connects them.

[root@master01 work ]#kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

Context "kubernetes" created.

[root@master01 work ]#cat kube.config

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: admin
  name: kubernetes
current-context: ""
kind: Config
preferences: {}
users:
- name: admin
  user:
    client-certificate-data: <base64 of admin.pem, omitted>
    client-key-data: <base64 of admin-key.pem, omitted>

4. Set the current context

The current context makes the admin user access the current cluster; in use-context kubernetes, kubernetes is the name of the context.

[root@master01 work ]#kubectl config use-context kubernetes --kubeconfig=kube.config

Switched to context "kubernetes".

[root@master01 work ]#cat kube.config

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: admin
  name: kubernetes
current-context: kubernetes
kind: Config
preferences: {}
users:
- name: admin
  user:
    client-certificate-data: <base64 of admin.pem, omitted>
    client-key-data: <base64 of admin-key.pem, omitted>

Copy the config file to /root/.kube/config:

[root@master01 work ]#mkdir ~/.kube -p

[root@master01 work ]#cp kube.config ~/.kube/config
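The four kubectl config steps above follow a fixed pattern, so they can be wrapped in one function and reused for other components, such as the kube-controller-manager kubeconfig. make_kubeconfig, SERVER and DRY_RUN are hypothetical names introduced here; the kubectl subcommands and flags are the ones used above. With DRY_RUN=1 the commands are only printed.

```shell
# Sketch: steps 1-4 (set-cluster, set-credentials, set-context, use-context)
# as one function. Arguments: user name, client cert, client key, output file.
make_kubeconfig() {
  local user="$1" cert="$2" key="$3" out="$4"
  local server="${SERVER:-https://10.10.0.10:6443}"
  local run=""
  # DRY_RUN=1 prefixes every command with echo instead of executing it.
  if [ "${DRY_RUN:-0}" = 1 ]; then run="echo"; fi
  $run kubectl config set-cluster kubernetes --certificate-authority=ca.pem \
    --embed-certs=true --server="$server" --kubeconfig="$out" || return
  $run kubectl config set-credentials "$user" --client-certificate="$cert" \
    --client-key="$key" --embed-certs=true --kubeconfig="$out" || return
  $run kubectl config set-context kubernetes --cluster=kubernetes \
    --user="$user" --kubeconfig="$out" || return
  $run kubectl config use-context kubernetes --kubeconfig="$out"
}
```

Run in the work directory, `make_kubeconfig admin admin.pem admin-key.pem kube.config` reproduces steps 1 to 4. The context is always named kubernetes, as in the admin example; adjust it if you want per-component context names.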

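5. Grant the kubernetes certificate access to the kubelet API

kube-apiserver itself also needs authorization when it calls kubelets (for example, kubectl exec and kubectl logs are proxied by the apiserver to the kubelet API), so bind the kubernetes user to the system:kubelet-api-admin ClusterRole.

The user name kubernetes is the CN defined in ca-csr.json in this setup; strictly, it must match the CN of the client certificate that kube-apiserver presents to kubelets (--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem above).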

[root@master01 work ]#kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

clusterrolebinding.rbac.authorization.k8s.io/kube-apiserver:kubelet-apis created
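If you prefer to keep cluster objects in version control, the same binding can be written declaratively. This sketch prints a manifest that should correspond to what the kubectl create clusterrolebinding command above creates; render_kubelet_api_binding is a hypothetical helper name.

```shell
# Sketch: declarative equivalent of the kube-apiserver:kubelet-apis binding.
render_kubelet_api_binding() {
  cat <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kube-apiserver:kubelet-apis
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kubelet-api-admin
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: kubernetes
EOF
}
```

Usage, on a master with a working kubeconfig: `render_kubelet_api_binding | kubectl apply -f -`.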

# Check the status of the cluster components

[root@master01 work ]#kubectl cluster-info

Kubernetes control plane is running at https://10.10.0.10:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@master01 work ]#kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS      MESSAGE                                                                                       ERROR
scheduler            Unhealthy   Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager   Unhealthy   Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused
etcd-1               Healthy     {"health":"true"}
etcd-0               Healthy     {"health":"true"}
etcd-2               Healthy     {"health":"true"}

scheduler and controller-manager report Unhealthy (connection refused) only because they have not been deployed yet at this point; all three etcd members are healthy.

[root@master01 work ]#kubectl get all --all-namespaces

NAMESPACE   NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
default     service/kubernetes   ClusterIP   10.255.0.1   <none>        443/TCP   147m

# Sync the kubectl config file to the other nodes, so that if one machine fails the cluster can still be operated from the others

[root@master02 ~ ]#mkdir /root/.kube/

[root@master03 kubernetes ]#mkdir /root/.kube/

[root@master01 work ]#rsync -vaz /root/.kube/config master02:/root/.kube/

sending incremental file list

config

sent 4,193 bytes received 35 bytes 8,456.00 bytes/sec

total size is 6,234 speedup is 1.47

[root@master01 work ]#rsync -vaz /root/.kube/config master03:/root/.kube/

sending incremental file list

config

sent 4,193 bytes received 35 bytes 2,818.67 bytes/sec

total size is 6,234 speedup is 1.47
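With more masters, the mkdir and rsync steps can be looped from master01. This sketch relies on the passwordless SSH configured in section 3.4 and uses mkdir -p so it can be rerun safely; sync_kubeconfig and DRY_RUN are hypothetical names, and DRY_RUN=1 only prints the commands.

```shell
# Sketch: push /root/.kube/config from this host to every host given as argument.
sync_kubeconfig() {
  local run="" host
  if [ "${DRY_RUN:-0}" = 1 ]; then run="echo"; fi
  for host in "$@"; do
    # mkdir -p does not fail if the directory already exists.
    $run ssh "$host" mkdir -p /root/.kube || return
    $run rsync -az /root/.kube/config "$host":/root/.kube/ || return
  done
}
```

Usage: `sync_kubeconfig master02 master03`.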

# Configure kubectl subcommand completion

[root@master01 work ]#yum install -y bash-completion

[root@master01 work ]#source /usr/share/bash-completion/bash_completion

[root@master01 work ]#source <(kubectl completion bash)

[root@master01 work ]#kubectl completion bash > ~/.kube/completion.bash.inc

[root@master01 work ]#source '/root/.kube/completion.bash.inc'

[root@master01 work ]#source $HOME/.bash_profile

Press Tab twice to list everything you can query:

[root@master01 work ]#kubectl get

apiservices.apiregistration.k8s.io namespaces

certificatesigningrequests.certificates.k8s.io networkpolicies.networking.k8s.io

clusterrolebindings.rbac.authorization.k8s.io nodes

clusterroles.rbac.authorization.k8s.io persistentvolumeclaims

componentstatuses persistentvolumes

configmaps poddisruptionbudgets.policy

controllerrevisions.apps pods

cronjobs.batch podsecuritypolicies.policy

csidrivers.storage.k8s.io podtemplates

csinodes.storage.k8s.io priorityclasses.scheduling.k8s.io

customresourcedefinitions.apiextensions.k8s.io prioritylevelconfigurations.flowcontrol.apiserver.k8s.io

daemonsets.apps replicasets.apps

deployments.apps replicationcontrollers

endpoints resourcequotas

endpointslices.discovery.k8s.io rolebindings.rbac.authorization.k8s.io

events roles.rbac.authorization.k8s.io

events.events.k8s.io runtimeclasses.node.k8s.io

flowschemas.flowcontrol.apiserver.k8s.io secrets

horizontalpodautoscalers.autoscaling serviceaccounts

ingressclasses.networking.k8s.io services

ingresses.extensions statefulsets.apps

ingresses.networking.k8s.io storageclasses.storage.k8s.io

jobs.batch storageversions.internal.apiserver.k8s.io

leases.coordination.k8s.io validatingwebhookconfigurations.admissionregistration.k8s.io

limitranges volumeattachments.storage.k8s.io

mutatingwebhookconfigurations.admissionregistration.k8s.io

Official kubectl cheat sheet (reference for setting up completion):

https://kubernetes.io/zh/docs/reference/kubectl/cheatsheet/
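The source commands above only affect the current shell. To get completion in every new shell, the cheat sheet's approach is to add `source <(kubectl completion bash)` to ~/.bashrc. The sketch below does that idempotently; persist_kubectl_completion is a hypothetical helper, and it assumes the bash-completion package installed above is loaded by the shell's profile.

```shell
# Sketch: append the completion line to a bashrc file only if it is not there yet.
persist_kubectl_completion() {
  local rc="${1:-$HOME/.bashrc}"
  local line='source <(kubectl completion bash)'
  # -x whole-line match, -F fixed string: skip if the exact line already exists.
  grep -qxF "$line" "$rc" 2>/dev/null || echo "$line" >> "$rc"
}
```

Usage: `persist_kubectl_completion` (defaults to ~/.bashrc); running it twice leaves a single line.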

5.4 Deploying the kube-controller-manager component

# Create the CSR request file

[root@master01 work ]#cat kube-controller-manager-csr.json

{
  "CN": "system:kube-controller-manager",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "hosts": [
    "127.0.0.1",
    "10.10.0.10",
    "10.10.0.11",
    "10.10.0.12",
    "10.10.0.100"
  ],
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "system:kube-controller-manager",
      "OU": "system"
    }
  ]
}

Note: the hosts list contains the IPs of all kube-controller-manager nodes (plus 127.0.0.1 and the VIP 10.10.0.100).

CN is system:kube-controller-manager and O is system:kube-controller-manager;

the built-in ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.
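To keep the hosts list in step with the node plan, the CSR file can be generated from a list of IPs. render_kcm_csr is a hypothetical helper; the subject fields are copied from the file above, and it does no JSON escaping, so it is only meant for plain IPs or hostnames.

```shell
# Sketch: print kube-controller-manager-csr.json with "hosts" built from the arguments.
render_kcm_csr() {
  local hosts="" ip
  for ip in "$@"; do
    # Add ", " before every entry except the first.
    hosts+="${hosts:+, }\"${ip}\""
  done
  cat <<EOF
{
  "CN": "system:kube-controller-manager",
  "key": {"algo": "rsa", "size": 2048},
  "hosts": [${hosts}],
  "names": [
    {"C": "CN", "ST": "Hubei", "L": "Wuhan",
     "O": "system:kube-controller-manager", "OU": "system"}
  ]
}
EOF
}
```

Usage: `render_kcm_csr 127.0.0.1 10.10.0.10 10.10.0.11 10.10.0.12 10.10.0.100 > kube-controller-manager-csr.json`.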

# Generate the certificate

[root@master01 work ]#cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

2022/10/26 14:27:54 [INFO] generate received request
2022/10/26 14:27:54 [INFO] received CSR
2022/10/26 14:27:54 [INFO] generating key: rsa-2048
2022/10/26 14:27:54 [INFO] encoded CSR
2022/10/26 14:27:54 [INFO] signed certificate with serial number 721895207641224154550279977497269955483545721536
2022/10/26 14:27:54 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for
websites. For more information see the Baseline Requirements for the Issuance and Management
of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);
specifically, section 10.2.3 ("Information Requirements").

# Create the kubeconfig for kube-controller-manager

1. Set the cluster parameters

[root@master01 work ]#kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.10.0.10:6443 --kubeconfig=kube-controller-manager.kubeconfig

Cluster "kubernetes" set.

[root@master01 work ]#cat kube-controller-manager.kubeconfig

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts: null
current-context: ""
kind: Config
preferences: {}
users: null
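With `--embed-certs=true`, kubectl copies ca.pem into the kubeconfig as base64 instead of storing a path to it. The sketch below checks that the embedded copy is byte-for-byte identical to ca.pem. It assumes the kubeconfig was written by kubectl, so the base64 value sits on a single line. `check_embedded_ca` is a hypothetical helper name and is not part of kubectl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify that the CA embedded by --embed-certs=true equals ca.pem.
# Assumes a kubectl-written kubeconfig (base64 value on one line, one cluster entry).
check_embedded_ca() {
  local kubeconfig=$1 ca_file=$2 data
  # Take the value of the first certificate-authority-data field.
  data=$(awk '/certificate-authority-data:/ {print $2; exit}' "$kubeconfig")
  if [ -z "$data" ]; then
    echo "no certificate-authority-data in $kubeconfig" >&2
    return 2
  fi
  # Decode it and compare with the PEM file; cmp -s succeeds only if they are identical.
  if printf '%s' "$data" | base64 -d | cmp -s - "$ca_file"; then
    echo "embedded CA matches $ca_file"
  else
    echo "embedded CA differs from $ca_file" >&2
    return 1
  fi
}
```

Usage: `check_embedded_ca kube-controller-manager.kubeconfig ca.pem`. You can also extract the value with kubectl itself. Pass `--raw`, because otherwise `kubectl config view` hides certificate data: `kubectl config view --raw --kubeconfig=kube-controller-manager.kubeconfig -o jsonpath='{.clusters[0].cluster.certificate-authority-data}' | base64 -d`.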

2. Set the client authentication parameters

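The user name system:kube-controller-manager is the CN value set in kube-controller-manager-csr.json. Strictly speaking, the user name inside a kubeconfig is only a local label. The identity kube-apiserver sees comes from the client certificate's CN, and the built-in system:kube-controller-manager ClusterRoleBinding expects that CN to be system:kube-controller-manager. Reusing the CN as the user name keeps the two consistent.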
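kube-apiserver authorizes the component by the certificate's CN, not by the kubeconfig user name. It may therefore be worth confirming the CN before you embed the certificate. This is a minimal sketch using the standard `openssl x509` command. `cert_cn` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the commonName (CN) of a PEM certificate.
# "-nameopt multiline" puts each subject field on its own line, e.g.
#     commonName                = system:kube-controller-manager
# This is easier to parse than the default one-line subject, whose layout differs between OpenSSL versions.
cert_cn() {
  openssl x509 -in "$1" -noout -subject -nameopt multiline \
    | awk -F' = ' '/commonName/ {print $2; exit}'
}
```

Usage: `[ "$(cert_cn kube-controller-manager.pem)" = system:kube-controller-manager ] && echo "CN matches"`.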

[root@master01 work ]#kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

User "system:kube-controller-manager" set.

[root@master01 work ]#cat kube-controller-manager.kubeconfig

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts: null
current-context: ""
kind: Config
preferences: {}
users:
- name: system:kube-controller-manager
  user:
    client-certificate-data: <base64 of kube-controller-manager.pem, omitted>
    client-key-data: <base64 of kube-controller-manager-key.pem, omitted>
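kubectl embeds whatever certificate and key you pass to set-credentials. It does not check that they belong to the same key pair, and a mismatch only shows up later as a TLS failure when kube-controller-manager starts. The sketch below compares the public key inside the certificate with the public key derived from the private key. `cert_key_match` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the certificate and private key form a pair.
# Both openssl commands print the public key as a PEM "BEGIN PUBLIC KEY" block,
# so identical output means the key matches the certificate (works for RSA and EC).
cert_key_match() {
  local cert=$1 key=$2
  cmp -s <(openssl x509 -in "$cert" -noout -pubkey) \
         <(openssl pkey -in "$key" -pubout)
}
```

Usage: `cert_key_match kube-controller-manager.pem kube-controller-manager-key.pem && echo "key pair OK"`.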

3. Set the context parameters

[root@master01 work ]#kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Context "system:kube-controller-manager" created.

4. Set the current context

[root@master01 work ]#kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Switched to context "system:kube-controller-manager".
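The four steps (set-cluster, set-credentials, set-context, use-context) follow the same pattern for each control-plane component that authenticates with its own certificate. Here they are wrapped in one function as a sketch. `gen_component_kubeconfig` is a hypothetical name. The function assumes the file layout used in this section: ca.pem plus `<component>.pem` and `<component>-key.pem` in the current directory, with the user name `system:<component>` equal to the certificate CN.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build <component>.kubeconfig with the four kubectl config steps.
# Assumes ca.pem, <component>.pem and <component>-key.pem exist in the current directory
# and that the certificate CN is "system:<component>".
gen_component_kubeconfig() {
  local component=$1 server=$2
  local user="system:${component}"
  local kc="${component}.kubeconfig"

  # 1. Cluster entry, with the CA embedded as base64.
  kubectl config set-cluster kubernetes --certificate-authority=ca.pem \
    --embed-certs=true --server="$server" --kubeconfig="$kc" || return
  # 2. User entry, with the client certificate and key embedded.
  kubectl config set-credentials "$user" --client-certificate="${component}.pem" \
    --client-key="${component}-key.pem" --embed-certs=true --kubeconfig="$kc" || return
  # 3. Context that binds the cluster and the user together.
  kubectl config set-context "$user" --cluster=kubernetes --user="$user" \
    --kubeconfig="$kc" || return
  # 4. Make that context the default for this kubeconfig.
  kubectl config use-context "$user" --kubeconfig="$kc"
}
```

Usage: `gen_component_kubeconfig kube-controller-manager https://10.10.0.10:6443`. Afterwards, `kubectl config current-context --kubeconfig=kube-controller-manager.kubeconfig` should print `system:kube-controller-manager`.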

[root@master01 work ]#cat kube-controller-manager.kubeconfig

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <base64 of ca.pem, omitted>
    server: https://10.10.0.10:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes